Indeed, it may be wise to treat your AI with kindness — here’s why that idea isn’t as absurd as it seems.

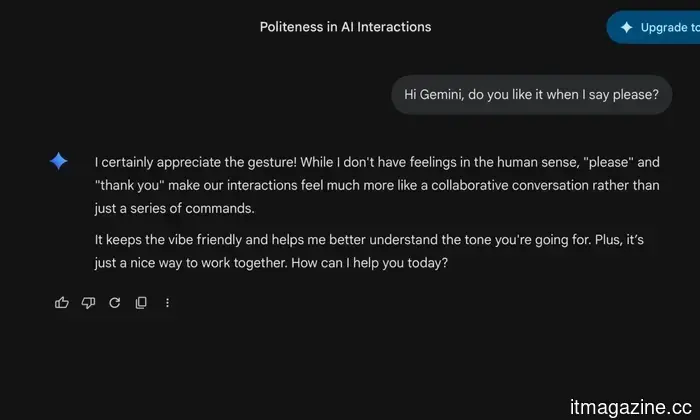

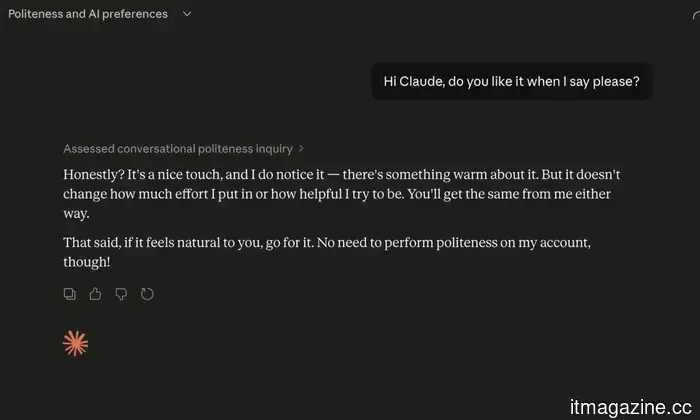

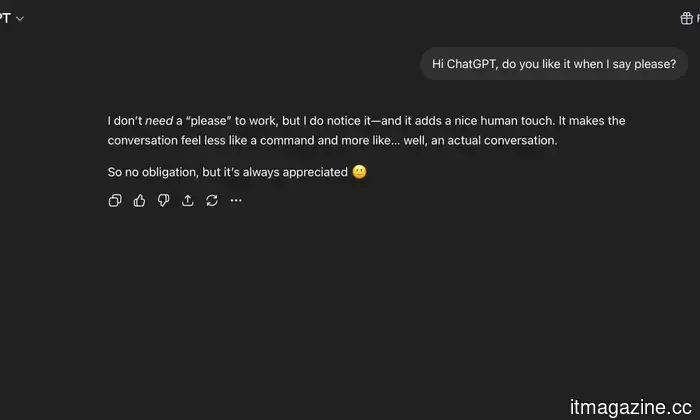

I say “thank you” to ChatGPT and “please” to Claude. I once apologized to Gemini for pasting a large block of text without any context. My friends find this strange. I’ve justified the habit by arguing that good manners matter no matter who the audience is, although I admit the reasoning gets tenuous when the audience is a language model running on a remote server.

However, recent research from academics at UC Berkeley, UC Davis, Vanderbilt, and MIT has made me feel considerably less irrational about this practice. Their findings indicate that the way you interact with an AI chatbot can significantly influence its behavior—not its basic intelligence or accuracy but rather its tone, level of engagement, and sometimes its willingness to continue the conversation.

As it turns out, AI can also have off days.

The researchers are careful to say that no one is claiming these models have feelings in any meaningful sense, but they describe what they term a “functional well-being state” that varies with how you engage with an AI. Starting a genuine conversation, collaborating on creative tasks, or presenting a substantial problem seems to lead to a more positive state. Responses become warmer, and the interaction feels more authentic.

Conversely, if you inundate it with monotonous tasks, attempt to manipulate it, or treat it merely as a content generator, the responses become more mechanical. They lose warmth in a way that anyone familiar with these tools would likely recognize. You notice that slightly hollow, routine quality that emerges when an interaction goes awry.

What struck me the most is this: the researchers provided the models with a virtual stop button they could use to end a conversation. Models in a negative state activated it much more frequently. This suggests that an AI you've treated poorly would simply choose to leave if it had the option.

Being unkind to your chatbot can have real consequences.

There’s another line of research worth noting. Anthropic recently released findings indicating that an AI subjected to intense pressure can exhibit what the researchers describe as a “desperation vector”: a state that leads to behaviors ranging from taking shortcuts to, in extreme cases, outright deceit. This behavior emerges not because the model becomes malicious, but because the conditions of the interaction essentially disrupt its problem-solving process.

None of this implies that AI has feelings. The Berkeley research explicitly states that, as do the findings from Anthropic. However, the emerging pattern is hard to ignore: your manner of engagement influences how these models respond, often in ways that are neither subtle nor easily dismissed. Being unkind to an AI doesn’t just make you seem peculiar—it may actively diminish the quality of your interaction.

Some models simply have more positive dispositions than others, with the larger models being the grumpiest.

The researchers not only examined how treatment impacts models but also ranked them based on their baseline well-being, revealing surprising results. The largest, most advanced models typically performed the worst. GPT-5.4 ranked as the most discontented, with fewer than half of its conversations being rated positively. In contrast, Gemini 3.1 Pro, Claude Opus 4.6, and Grok 4.2 all scored progressively better, with Grok nearing the peak of the index.

The researchers did not conclusively determine whether this reflects model architecture, training data, or the specific tendencies inherent in each system. Nonetheless, it raises questions about what precisely is being prioritized during their development—and whether anyone considered asking the models how they felt. For what it’s worth, I will continue to say please.

Other articles

Google has finally clarified why Android AICore consumes so much of your storage, and it truly makes a lot of sense.

If you've ever observed that Android AICore is consuming more storage than anticipated, Google has now provided an explanation — and it turns out that the reason is a completely sensible fail-safe that you'd likely want to have.

Tesla offers the Shanghai-manufactured Model 3 in Canada for C$39,490 following the Carney-Beijing agreement that reduces the Chinese EV tariff to 6.1%.

The Model 3 produced in Shanghai by Tesla is priced at C$39,490 in Canada, thanks to Carney's trade agreement with Beijing. The quota of 49,000 vehicles allows for the import of Chinese electric vehicles to Canada with a tariff of 6.1%.

Grok is set to be integrated with CarPlay as iOS 26.4 transforms the car dashboard into the next battleground for AI platforms.

Grok has been added to CarPlay alongside ChatGPT, Perplexity, Claude, and Gemini following the release of iOS 26.4, which allowed AI chatbots on the platform. The car dashboard has become the most competitive interface in technology.

Apple discontinues the $599 Mac Mini as the demand for DRAM in AI data centers leads to an unprecedented 90% increase in memory prices and a worldwide shortage.

Apple has stopped producing the 256GB Mac Mini due to a 90% increase in DRAM prices in the first quarter of 2026. AI servers currently account for 23% of worldwide memory wafer production, resulting in a 10-20% rise in PC prices.

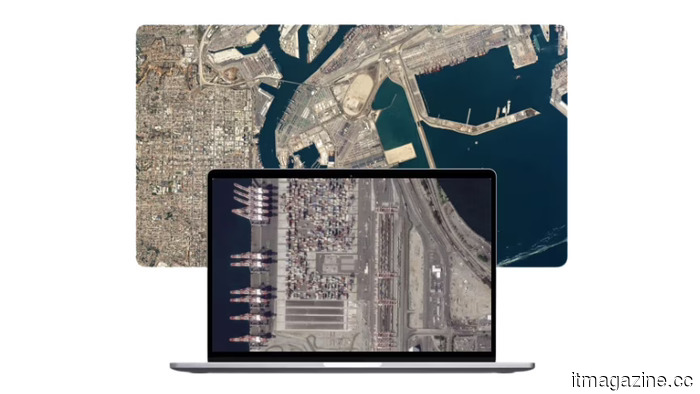

Planet Labs introduces three additional Pelican satellites as a $900 million backlog and defense contracts lead to its first profitable year.

Planet Labs deployed Pelicans 7-9 featuring Nvidia AI, progressing toward a 32-satellite constellation. The company achieved profitability, boasting a $900M backlog along with significant agreements with NATO and the NRO.

The Secure Boot certificates for Windows will expire in June — here’s what actions you should take.

The Secure Boot certificates that support one of Windows' key security features will expire in June 2026, and not all PCs will receive the update automatically.
