Google, Microsoft, and xAI have agreed to government evaluations of their AI models before release as the Mythos crisis prompts increased oversight.

**Summary:** Google, Microsoft, and xAI have joined OpenAI and Anthropic in granting the US Commerce Department pre-release access to assess their AI models, establishing a voluntary oversight system that covers all five leading frontier AI labs and is run by a non-statutory office staffed by fewer than 200 people. The initiative arose from the Mythos crisis and a potential executive order aimed at formalizing the review process.

The Mythos crisis prompted the US government to face a previously avoided issue: how to evaluate powerful AI models that could endanger national security before they are made public. On Tuesday, the Commerce Department revealed that Google, Microsoft, and xAI will provide pre-release access to their AI models for evaluation, joining OpenAI and Anthropic, who have been submitting models since 2024. Collectively, these five companies represent the majority of global frontier AI development and have consented to let a single government office test their technologies before release. This initiative is voluntary, lacks a statutory foundation, and does not empower the government to halt any releases. It constitutes the closest approximation of an AI oversight framework in the US, established within two years by an office with a minimal staff.

**The Office:**

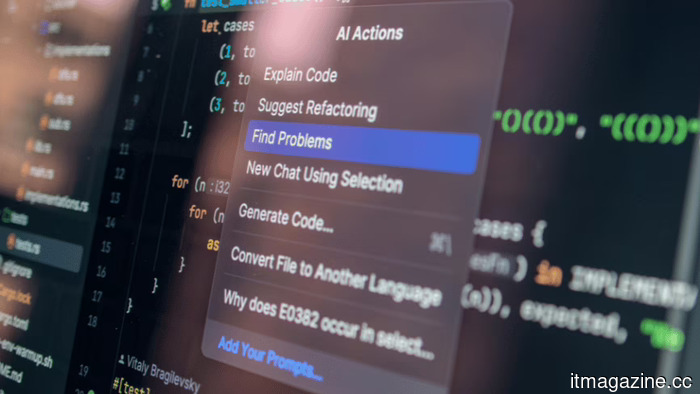

The Center for AI Standards and Innovation operates within the National Institute of Standards and Technology, part of the Commerce Department. Formed under President Biden in 2023 as the AI Safety Institute, it was rebranded and repurposed under Trump to prioritize standards and national security over safety research. The center has conducted over 40 evaluations of AI models, including advanced systems not yet released to the public. Developers often submit versions with reduced safety measures so the center can probe national security-related capabilities, such as biological weapon synthesis, cyberattack automation, and concerning autonomous agent behaviors.

Chris Fall now leads the center after the unexpected exit of Collin Burns, a former AI researcher at Anthropic, who was initially appointed to the role but left shortly afterward due to political pressures. Burns had relocated and forfeited valuable stock for the government position, which highlights the political difficulty of building an oversight system in a sector where evaluators and the evaluated share a talent pool. Trump's broader regulatory stance on AI has leaned towards federal preemption of state laws and a lenient approach to the industry. The model evaluation initiative, however, represents a more assertive approach, indicating the government's desire to understand these systems' capabilities before they reach the public.

**The Agreements:**

The partnerships with Google, Microsoft, and xAI broaden an earlier arrangement limited to two companies, bringing the program close to comprehensive coverage of frontier AI development. OpenAI and Anthropic have updated their agreements to conform with Trump’s AI Action Plan, which designates the center as responsible for national security-related assessments and integrates it into a larger "evaluations ecosystem." These agreements are not legal contracts but voluntary commitments that companies can withdraw from at any time, as no law mandates pre-release evaluation or grants the center authority to delay or block releases. The entire framework relies on companies concluding that granting early government access is preferable to facing potential legislative measures.

For the companies, the alternative could be legislative measures that would provide the center with permanent authority, mandatory evaluations, and the ability to impose restrictions on releases. The Pentagon has indicated it may blacklist AI firms that do not comply with government requests, as evidenced by Anthropic being flagged as a supply-chain risk due to its refusal to allow the use of its models for weapons or surveillance. These voluntary agreements partly serve to showcase compliance ahead of potential compulsion.

**The Catalyst:**

The expansion of the evaluation program coincides with the Mythos crisis, which centers on Mythos, an Anthropic model that can autonomously discover zero-day vulnerabilities across major operating systems and browsers. The model has uncovered thousands of severe bugs, including vulnerabilities that had gone undetected for years. The White House has resisted Anthropic's plan to extend Mythos access beyond initial partners, while the NSA uses it despite the Pentagon's blacklisting of Anthropic. The EU is requesting access to Mythos for European cybersecurity, arguing that a pivotal cybersecurity tool should not remain under the exclusive control of a US company partially blacklisted by its own government.

The Mythos case illustrates the need for the evaluation program: a model with immediate national security risks that cannot be assessed post-deployment. The center has conducted over 40 evaluations since 2024 that likely uncovered capabilities in unreleased models that informed policy decisions. However, Google’s Gemini, Microsoft’s models, and xAI’s Grok were not previously subject to government pre-release review. The recent agreements bridge that gap, ensuring that any forthcoming models with capabilities like Mythos reach government evaluators prior to public release.

**The Limits:**

The program's inherent vulnerability lies in its reliance on voluntary participation. A company discovering dangerous features within its model could legally opt not to submit it for evaluation and proceed with its release. The center lacks subpoena power, injunctive authority, or a means to compel disclosure. Its influence is limited to reputational and
