Google, Microsoft, and xAI have agreed to let the government evaluate their AI models before release as the Mythos crisis forces an increase in oversight.

**Summary**: Google, Microsoft, and xAI have joined OpenAI and Anthropic in granting the U.S. Commerce Department pre-release access to assess their AI models, establishing a voluntary oversight system among five major AI labs through an office lacking formal authority and staffed by fewer than 200 individuals. This expansion was prompted by the Mythos crisis and potential executive orders aimed at formalizing the review process.

The Mythos crisis compelled the U.S. government to address a previously sidestepped issue: how to handle an AI model powerful enough to pose a national security risk when no formal mechanism exists to evaluate it before public release. On Tuesday, the Commerce Department announced that Google, Microsoft, and xAI would provide the U.S. government with pre-release access to their AI models for assessment. They join OpenAI and Anthropic, which have been submitting their models for evaluation to the same agency since 2024. Five companies now account for a significant portion of global frontier AI development, and all have consented to let a single government office examine their systems before they are made public. This arrangement is voluntary, has no statutory backing, and does not grant the government the power to prevent a release. Nevertheless, it is the closest thing to an AI oversight system that the U.S. has, and a small office put it in place within two years.

The Office

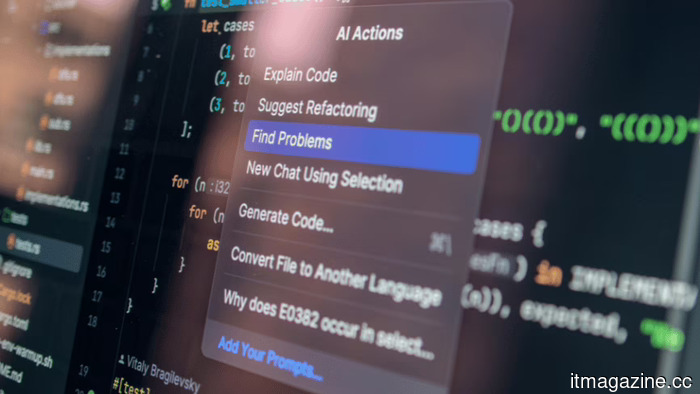

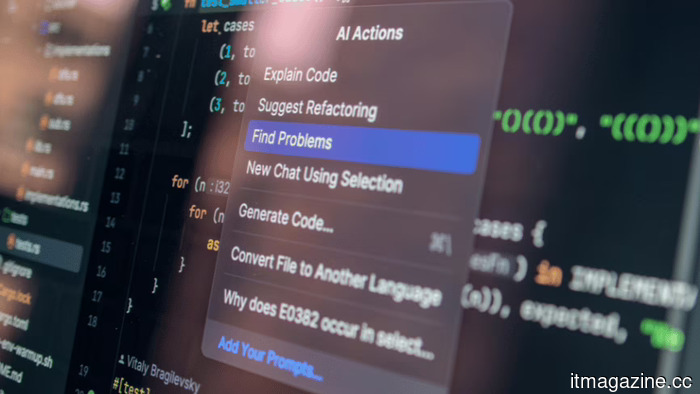

The Center for AI Standards and Innovation sits within the National Institute of Standards and Technology in the Commerce Department. It was founded under President Biden in 2023 as the AI Safety Institute and was restructured under Trump to focus on standards and national security instead of safety research. The center has completed more than 40 evaluations of AI models, including advanced systems not yet publicly available. Developers frequently submit versions with reduced safety measures so evaluators can investigate capabilities relevant to national security, including biological weapon synthesis, cyberattack automation, and potentially uncontrollable autonomous behaviors.

Chris Fall now leads the center, succeeding Collin Burns, a former AI researcher at Anthropic who was ousted by the White House just four days into his appointment. Burns had left Anthropic, forfeited valuable stock, and relocated to accept the government role. His dismissal, reportedly due to his ties with an actively contested company, underscores the political complexities involved in creating an oversight structure for an industry where evaluators and the evaluated share the same talent pool. Trump's broader regulatory strategy on AI has emphasized federal preemption over state laws and a non-intrusive stance toward industry, though the model evaluation program reflects a more assertive approach: the government seeks to understand these systems before anyone else does.

The Agreements

The new agreements with Google, Microsoft, and xAI extend what was previously a two-company arrangement to broader coverage of the frontier. OpenAI and Anthropic have revised their existing agreements to align with Trump’s AI Action Plan, which instructs the center to lead assessments related to national security and integrates it into a wider "evaluations ecosystem." These agreements are not formal contracts but voluntary commitments that companies can withdraw from at any point. There are no legal requirements for pre-release evaluation, and the center lacks the authority to delay or prohibit deployment. The entire setup relies on the AI companies voluntarily choosing to provide early access for strategic reasons.

From the companies' viewpoint, the alternative could be more stringent legislation. Several proposed bills would grant the center permanent statutory authority, compulsory evaluation requirements, and the ability to set conditions on deployment. The Pentagon has shown a willingness to blacklist AI companies that resist government demands, labeling Anthropic a supply-chain risk after it declined to permit the use of its models for autonomous weapons or mass domestic surveillance. These voluntary evaluation agreements serve, in part, as a way for the remaining companies to demonstrate their cooperation before it becomes obligatory.

The Catalyst

The evaluation program is expanding against the backdrop of the Mythos crisis. Anthropic’s Mythos model, announced in April, can autonomously find and exploit zero-day vulnerabilities across major operating systems and web browsers, and it has detected thousands of significant bugs, some of which had persisted undetected for decades. The White House has opposed Anthropic’s efforts to broaden access to Mythos beyond its initial partners. Despite the Pentagon's blacklisting, the NSA is using it. The EU is pressing for access to Mythos for European cybersecurity efforts, contending that a critical cybersecurity tool cannot remain under the exclusive control of a U.S. company partially blacklisted by the American government.

Mythos exemplified the type of model the evaluation program aims to identify: one with capabilities that have immediate national security implications and that cannot be assessed post-deployment. The more than 40 evaluations the center has conducted since 2024 presumably uncovered capabilities in unreleased models that influenced policy decisions, but these evaluations were conducted under agreements with only two companies. Google’s Gemini, Microsoft’s models, and xAI’s Grok had not previously undergone pre-release review by the government. The new agreements close this gap, ensuring that any future model with Mythos-level capabilities reaches government
