Google, Microsoft, and xAI agree to give the government access to their AI models for evaluation before release, as the Mythos crisis drives tighter oversight.

**Summary:** Google, Microsoft, and xAI have joined OpenAI and Anthropic in granting the US Commerce Department pre-release access to their AI models for evaluation, establishing a voluntary oversight mechanism that covers the five major frontier AI labs. The move follows the Mythos crisis and comes as a potential executive order aimed at formalizing the review process is under consideration.

The Mythos crisis compelled the US government to confront the risk that powerful AI models could jeopardize national security without ever passing through a formal evaluation process. On Tuesday, the Commerce Department revealed that Google, Microsoft, and xAI will provide the government with pre-release access to their AI models. They join OpenAI and Anthropic, which have been submitting their models to the office since 2024. These five companies, which account for most of the world's frontier AI development, have now agreed to let a single government office assess their systems before deployment. The arrangement lacks statutory grounding and gives the government no authority to block releases. Even so, it is the closest approximation to an AI oversight system in the US, developed in under two years by an office with fewer than 200 personnel.

**The Office:**

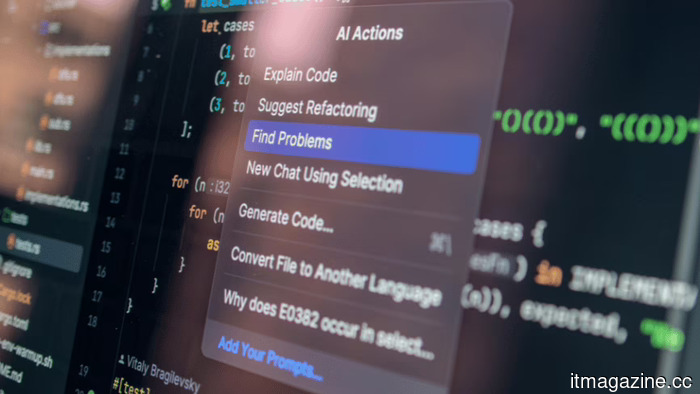

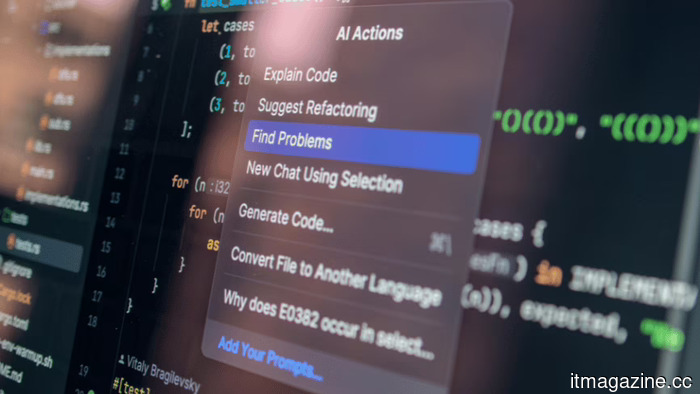

The Center for AI Standards and Innovation operates within the Commerce Department’s National Institute of Standards and Technology. It was established by President Biden in 2023 as the AI Safety Institute, then renamed under Trump to emphasize standards and national security rather than safety research alone. The center has conducted over 40 evaluations of AI models, including advanced systems that have never been publicly released. Developers often submit versions of their models with reduced safety measures so that evaluators can probe national security-relevant capabilities, including autonomous weapon synthesis and cyberattack automation.

Chris Fall currently leads the center following the sudden departure of Collin Burns, a former AI researcher at Anthropic. Burns was appointed to the position but left after just four days, reportedly because of political pressure stemming from his ties to a company in a dispute with the administration. His departure highlights the difficulty of building an oversight framework for an industry in which evaluators and the evaluated draw on the same talent pool. Trump’s broader AI regulatory stance has emphasized minimizing federal oversight of state regulations and adopting a relaxed approach toward industry, but the model evaluation program reflects a tougher position: the government wants to understand what these systems can do before they reach the public.

**The Agreements:**

The new agreements with Google, Microsoft, and xAI extend what was previously a two-company arrangement to cover most of the frontier. OpenAI and Anthropic have revised their existing agreements to align with Trump’s AI Action Plan, which tasks the center with overseeing national security-related model assessments and integrates it into a larger "evaluations ecosystem." These agreements are not formal contracts; they are voluntary pledges that companies can rescind at any time. No law mandates pre-release evaluations, and the center has no authority to delay or prevent deployment. It relies instead on companies judging that granting the government early access serves their own strategic interests.

From the companies' perspective, the alternative to voluntary participation is new legislation. Several proposed bills would give the center permanent legal authority, mandatory evaluation requirements, and the ability to set deployment terms. The Pentagon has already signaled its readiness to blacklist AI companies that resist government requests, labeling Anthropic a supply-chain risk after it declined to permit the use of its models in autonomous weapons or mass surveillance. The voluntary agreements thus serve partly as a way for the remaining companies to demonstrate cooperation before regulation becomes mandatory.

**The Catalyst:**

The expansion of the evaluation program comes against the backdrop of the Mythos crisis, in which Anthropic’s advanced model was reported to autonomously uncover and exploit vulnerabilities across major operating systems and web browsers. The model has detected numerous critical bugs, some of which had remained hidden for decades. The White House has opposed Anthropic’s attempts to broaden access to Mythos beyond its select launch partners, while the NSA has used it despite the Pentagon's blacklisting of the company. The EU, meanwhile, is demanding access to Mythos for European cyber defense, arguing that a tool with such significant cybersecurity capabilities cannot remain under the exclusive control of a partially blacklisted American entity.

Mythos exemplified the risk the evaluation program aims to intercept: a model whose capabilities pose immediate national security concerns but cannot be meaningfully assessed once it has been deployed. The center’s evaluations since 2024 have likely uncovered capabilities in unreleased models that inform policy-making, but those examinations were conducted under agreements with only two companies. Until now, Google’s Gemini, Microsoft’s models, and xAI’s Grok were not subject to government review prior to release. The new agreements close this gap, so that any future model with Mythos-level capabilities reaches government evaluators before public distribution, regardless of its origin.

**The Limits:**

The program's structural shortcoming is evident: it relies solely on voluntary participation. A company that identifies dangerous capabilities in its model could opt not to submit it for evaluation and choose to release
