AI voice chats often feel uncomfortable because the assistants struggle to determine when to speak.

Thinking Machines Lab has announced that it is developing full duplex AI, which allows an AI system to listen to what a person is saying while simultaneously generating a response. In simpler terms, this means it functions more like a phone call rather than a walkie-talkie.

Founded last year by former OpenAI CTO Mira Murati, the startup introduced interaction models, beginning with TML-Interaction-Small. They claim that the system can reply in 0.40 seconds, which is comparable to typical human conversation speeds.

However, there is a limitation for those wanting to try it out immediately. This is currently a research preview, with limited access planned for the next few months and a wider release anticipated later this year.

A quicker type of AI exchange

The underlying concept is straightforward and significant. Rather than waiting for someone to complete their statement before formulating a response, the model processes incoming speech while simultaneously crafting its reply.

This reduction in delay is important because pauses can make AI assistants seem unnatural. Thinking Machines Lab presents TML-Interaction-Small's response time of 0.40 seconds as nearly akin to natural conversational pace, which could signify a significant improvement for voice technologies.

They also assert that this speed surpasses similar offerings from OpenAI and Google. While this benchmark lends credibility to the announcement, external users still need to verify whether the interaction feels as seamless as the figures suggest.

When speed influences interaction

An assistant that responds while still gathering information alters user expectations for voice conversations. While dialogue can progress more quickly, the system must also carefully manage timing.

This balance is critical when someone seeks a quick clarification instead of a lengthy response. Rapid replies are of little value if the assistant interjects prematurely, misinterprets the speaker, or disrupts the flow it’s meant to enhance.

For now, the architecture is the main highlight. The true test of the product will be whether the interaction model can create a natural timing experience.

Key points to observe before launch

The launch timeline is a crucial aspect at this point. Thinking Machines Lab has indicated that a limited research preview will be available in the coming months, followed by wider access later this year.

Details regarding availability, pricing, supported platforms, and performance outside of controlled environments remain uncertain. These factors are significant because a faster model will only be beneficial if users can integrate it into their everyday voice tools.

For those who utilize AI voice assistants, the best course of action is to monitor the preview closely. Full duplex AI holds promise, but practical testing will determine whether quicker responses genuinely enhance routine AI conversations.

Other articles

GameStop's $56 billion offer is rejected by eBay.

eBay's board has dismissed GameStop's $56 billion acquisition proposal, stating it is neither credible nor appealing, pointing to uncertainties in financing and leverage issues.

GameStop's $56 billion offer is rejected by eBay.

eBay's board has dismissed GameStop's $56 billion acquisition proposal, stating it is neither credible nor appealing, pointing to uncertainties in financing and leverage issues.

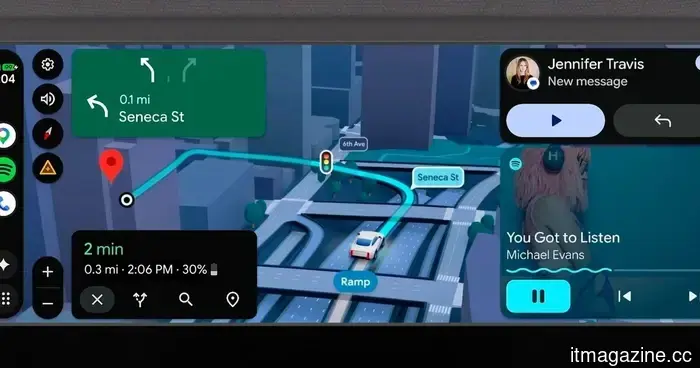

This Android Auto update aims to alter your driving habits and the way you interact with your vehicle.

Android Auto is set to receive a significant update that extends beyond mere design changes, offering improved navigation, enhanced entertainment options, and a more supportive driving experience.

This Android Auto update aims to alter your driving habits and the way you interact with your vehicle.

Android Auto is set to receive a significant update that extends beyond mere design changes, offering improved navigation, enhanced entertainment options, and a more supportive driving experience.

Claude has just assumed control of the data center that Grok needed the most.

Anthropic's partnership with SpaceX significantly enhances Claude's computing power from the Memphis data center, which xAI arguably needed the most. This highlights how critically infrastructure impacts the competition between Grok and its competitors.

Claude has just assumed control of the data center that Grok needed the most.

Anthropic's partnership with SpaceX significantly enhances Claude's computing power from the Memphis data center, which xAI arguably needed the most. This highlights how critically infrastructure impacts the competition between Grok and its competitors.

Google has significantly improved Gemini for Home, enhancing its ability to manage your smart home.

Google has released a new update for Gemini in Home, featuring enhanced camera search capabilities, quicker device controls, improved onboarding, and various upgrades to the Google Home app.

Google has significantly improved Gemini for Home, enhancing its ability to manage your smart home.

Google has released a new update for Gemini in Home, featuring enhanced camera search capabilities, quicker device controls, improved onboarding, and various upgrades to the Google Home app.

eBay turns down GameStop's $56 billion offer as

The board of eBay has dismissed GameStop's $56 billion acquisition proposal, describing it as neither credible nor appealing, due to concerns over financing uncertainty and leverage.

eBay turns down GameStop's $56 billion offer as

The board of eBay has dismissed GameStop's $56 billion acquisition proposal, describing it as neither credible nor appealing, due to concerns over financing uncertainty and leverage.

Google has significantly improved Gemini for Home, enhancing its ability to manage your smart home.

Google has launched a new update for Gemini for Home that features a more intelligent camera search, quicker device controls, enhanced onboarding, and various improvements to the Google Home app.

Google has significantly improved Gemini for Home, enhancing its ability to manage your smart home.

Google has launched a new update for Gemini for Home that features a more intelligent camera search, quicker device controls, enhanced onboarding, and various improvements to the Google Home app.

AI voice chats often feel uncomfortable because the assistants struggle to determine when to speak.

Thinking Machines Lab is experimenting with full duplex AI that can both listen and reply simultaneously, but the true evaluation will be whether quicker voice conversations feel beneficial when people get the chance to use them.