This latest voice update from OpenAI makes Siri and Alexa seem like they have a lot to learn.

The universal translator has transitioned from science fiction to your app store.

OpenAI has introduced three new audio models in its Realtime API, aimed at developers building voice-driven applications: GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper.

These models move voice AI beyond simple call-and-response exchanges, letting it understand, act, and take part in real conversations.

If the demonstration is any indication, we are witnessing the next stage in the evolution of voice AI technologies.

So, what capabilities do these models have?

GPT-Realtime-2 is the standout, bringing GPT-5-level reasoning to live voice interactions so it can handle more complex requests without losing the conversational thread.

It can call several tools at once and narrate what it is doing with phrases like “checking your calendar” or “let me investigate that.” A larger context window of 128K tokens supports longer, more coherent sessions. Developers can also adjust the reasoning effort to match the complexity of the request.
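To make the idea of parallel tool use with spoken status updates concrete, here is a minimal sketch in plain Python. It does not call the Realtime API at all: the two tools, their return values, and the function names are hypothetical stand-ins, and the only point is to show two lookups running concurrently while the assistant says what it is doing.

```python
import asyncio


async def check_calendar(day: str) -> str:
    """Hypothetical tool: pretends to look up a calendar."""
    await asyncio.sleep(0.01)  # stands in for network latency
    return f"calendar for {day}: 2 meetings"


async def search_flights(city: str) -> str:
    """Hypothetical tool: pretends to search for flights."""
    await asyncio.sleep(0.01)  # stands in for network latency
    return f"flights to {city}: 3 options"


async def run_tools_in_parallel() -> tuple[list[str], list[str]]:
    """Collect the spoken status lines, then run both tools concurrently."""
    spoken = ["Checking your calendar.", "Let me look into flights."]
    # asyncio.gather runs both coroutines concurrently and
    # returns their results in the order they were passed in.
    results = await asyncio.gather(
        check_calendar("Friday"),
        search_flights("Lisbon"),
    )
    return spoken, list(results)


if __name__ == "__main__":
    spoken, results = asyncio.run(run_tools_in_parallel())
    for line in spoken + results:
        print(line)
```

In a real voice app, the status lines would be spoken while the tool calls are still in flight, which is what keeps the conversation from going silent.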

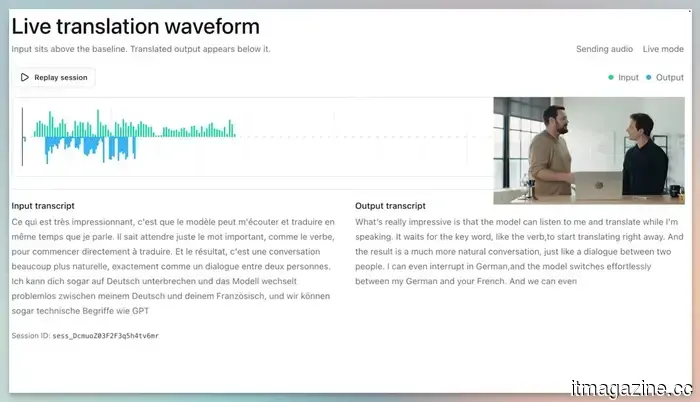

GPT-Realtime-Translate is probably my favorite. It is the closest thing available today to Star Trek’s Universal Translator, translating live speech from more than 70 input languages into 13 output languages.

The highlight of the demo was how the model translated both speakers into English in real time, even when a new person joined the conversation speaking a different language.

Lastly, we have GPT-Realtime-Whisper. Unlike most speech-to-text models, which wait for the speaker to finish before returning a full transcript, this model offers streaming transcription, converting speech to text while the speaker is still talking. It’s especially useful for live captions, meeting notes, and any voice-driven workflow where waiting for a transcript is impractical.
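The difference between batch and streaming transcription can be sketched in a few lines of Python. This is only an illustration of the two delivery styles, not of the model or its API: the function names are made up, and the input is a list of already-recognized text segments standing in for audio.

```python
from typing import Iterable, Iterator


def batch_transcribe(chunks: Iterable[str]) -> str:
    """Wait for every chunk, then return the full transcript at once."""
    return " ".join(chunks)


def streaming_transcribe(chunks: Iterable[str]) -> Iterator[str]:
    """Yield the running transcript each time a new chunk arrives."""
    words: list[str] = []
    for chunk in chunks:
        words.append(chunk)
        yield " ".join(words)


if __name__ == "__main__":
    # Stand-in for recognized speech segments; no audio or model is involved.
    spoken = ["welcome", "to the", "weekly sync"]
    for partial in streaming_transcribe(spoken):
        print(partial)
```

A live-caption display would redraw on every partial result, while the batch version shows nothing until the speaker stops.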

Are these new voice AI models accessible to everyone?

Currently, OpenAI has made these models available to developers. However, the applications they create will impact a broad audience. For instance, a developer could create a real-time translation app that facilitates conversations between users speaking different languages.

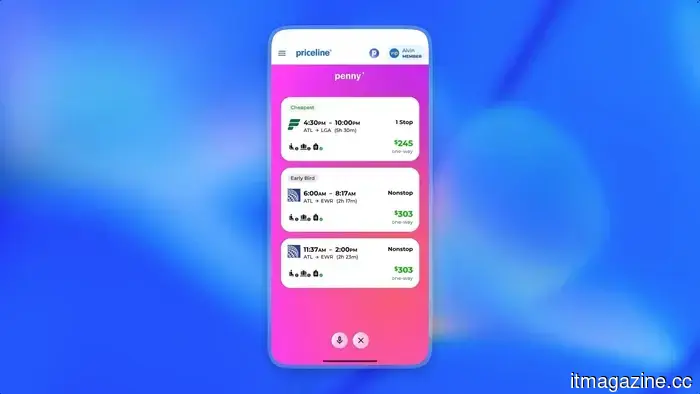

Companies already experimenting with these models include Zillow, which is developing a voice assistant that can search for homes and schedule tours from a single spoken request; Priceline, whose assistant can check flight and hotel details, cancel reservations, and book new ones; and Vimeo, which is using the models for real-time transcription.

Pricing begins at $0.017 per minute for Whisper, $0.034 per minute for Translate, and $32 per million audio input tokens for GPT-Realtime-2.
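For a rough sense of what those rates mean in practice, the arithmetic can be sketched as below. The three rates are the ones quoted above; the one-hour meeting and the 250,000-token figure are arbitrary examples, and the GPT-Realtime-2 estimate covers audio input only, since no output pricing is quoted here.

```python
# Rates as quoted in the article (USD).
WHISPER_PER_MINUTE = 0.017
TRANSLATE_PER_MINUTE = 0.034
REALTIME2_PER_MILLION_AUDIO_INPUT_TOKENS = 32.0


def per_minute_cost(minutes: float, rate_per_minute: float) -> float:
    """Cost of a per-minute-billed model for the given audio duration."""
    return minutes * rate_per_minute


def audio_input_token_cost(tokens: int) -> float:
    """GPT-Realtime-2 audio input cost only; output pricing is not quoted."""
    return tokens / 1_000_000 * REALTIME2_PER_MILLION_AUDIO_INPUT_TOKENS


if __name__ == "__main__":
    minutes = 60  # example: a one-hour meeting
    print(f"Whisper, 60 min:   ${per_minute_cost(minutes, WHISPER_PER_MINUTE):.2f}")
    print(f"Translate, 60 min: ${per_minute_cost(minutes, TRANSLATE_PER_MINUTE):.2f}")
    print(f"Realtime-2, 250k audio input tokens: ${audio_input_token_cost(250_000):.2f}")
```

At these rates, an hour of transcription costs about a dollar and an hour of translation about two.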

Rachit is an experienced tech journalist with over seven years of expertise in covering the consumer technology sector.

Korea welcomes a robotic Buddhist monk at an actual monastery, indicating future trends. Buddhism has entered the robotic era with a monk named Gabi.

A humanoid robot appeared at a Buddhist ceremony in Seoul, captivating attendees. The 1.3-meter robot was introduced at Jogyesa Temple in central Seoul during a ceremony leading up to Buddha’s Birthday celebrations. It received the Dharma name "Gabi" during a special refuge ceremony conducted by the Jogye Order of Korean Buddhism, South Korea’s largest Buddhist organization.

Why did a robot become a real monk in a genuine temple?

OpenAI is adding a safety feature to ChatGPT, allowing a trusted individual to be contacted if serious issues arise.

AI chatbots have made it surprisingly easy to discuss even the most difficult topics. This openness, however, has its risks. OpenAI is addressing this concern by introducing a feature that enables users to designate a trusted contact to be informed when ChatGPT identifies a serious self-harm risk.

Perplexity’s AI answering engine will not be integrated into Snapchat after all.

Snapchat’s planned collaboration with Perplexity has been called off. Snap announced in its Q1 2026 investor letter that both companies “amicably concluded the relationship” during Q1, halting a $400 million cash-and-equity deal that was announced last November.

The arrangement would have integrated Perplexity’s AI answering engine into Snapchat’s Chat interface, allowing users to ask questions and receive conversational, sourced answers without leaving the app. Snap previously indicated that this partnership would start generating revenue in 2026, but its most recent sales forecast now does not anticipate any contribution from Perplexity.

Other articles

Lime has submitted its paperwork for an IPO on Nasdaq under the ticker symbol LIME.

Lime, the micromobility company supported by Uber, has submitted a filing for a US IPO using the corporate name Neutron Holdings. Goldman Sachs and JPMorgan are at the forefront of the offering.

Apple's upcoming AirPods may provide Siri with visual capabilities, and they are currently in the testing phase.

AirPods equipped with cameras may seem outrageous, but they could be just what Siri requires to break free from outdated limitations.

Nintendo is increasing the price of the Switch 2 in the US, but there's still an opportunity to purchase one for a lower price.

Starting September 1, Nintendo's Switch 2 will see a price increase of $50 in the US, aligning with Europe, Canada, and Japan in a significant price adjustment influenced by rising RAM costs driven by AI.

G2A appoints CVC veteran Krzysztof Krawczyk as the chair of its advisory board.

Krzysztof Krawczyk, who established CVC's Warsaw office, has taken a stake in G2A and has been appointed as the chair of its advisory board as the marketplace shifts its focus toward mergers and acquisitions and artificial intelligence.

Top Global HR Software for International Businesses in 2026

In 2026, we evaluated the top global HR software platforms for international businesses, including HiBob, Rippling, BambooHR, Personio, UKG, Sage HR, and ADP.

ChatGPT now allows you to designate someone to reach out to if you're feeling down.

ChatGPT can now notify a trusted person if situations become serious. This may be a straightforward feature, but it could be one of the most human-like aspects that OpenAI has incorporated into its chatbot.
