This latest voice update from OpenAI makes Siri and Alexa seem like they could use some more training.

The universal translator has officially made its exit from the realm of science fiction and has arrived in your app store.

OpenAI has introduced three new audio models in its Realtime API, which are significant for anyone developing voice-activated applications. The three models are GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper.

Together, these models move voice AI beyond simple back-and-forth exchanges toward systems that can understand a request, act on it, and hold a genuine conversation.

If the demonstration is any indication, we are witnessing the next phase of what voice AI can do.

So, what capabilities do these models possess?

GPT-Realtime-2 is the standout model. It brings GPT-5-level reasoning to live voice interactions, allowing it to handle more complex requests while maintaining the flow of conversation.

It can use multiple tools at once and even narrate what it is doing with phrases like “checking your calendar” or “let me look into that.” With an extended context window of 128K tokens, it can support longer, more coherent sessions. Developers can also adjust the reasoning effort to match the complexity of the request.

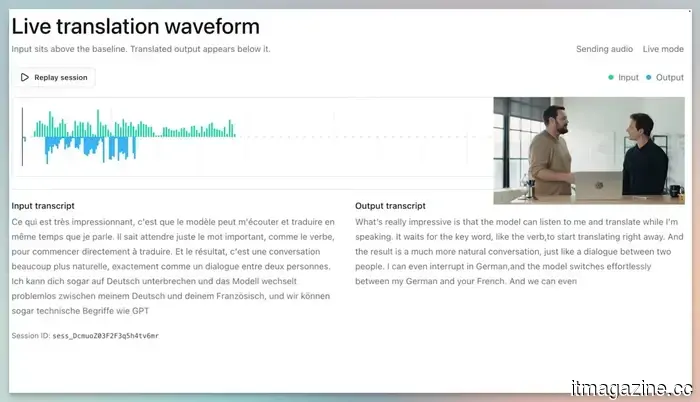

GPT-Realtime-Translate is arguably my favorite. It comes closest to resembling Star Trek’s Universal Translator in real life. This model facilitates live speech translation for over 70 input languages and 13 output languages.

The highlight of the demo was its ability to translate different speakers into English in real time, even when a new person joined the conversation and spoke a different language.

Lastly, we have GPT-Realtime-Whisper. Unlike most speech-to-text models, which wait for the speaker to finish before delivering a complete transcript, this model provides streaming transcription, converting speech to text while the speaker is still talking. This is particularly useful for live captions, meeting notes, and other voice-powered workflows where waiting for a transcript is not an option.

Are these new voice AI models accessible to everyone?

At present, OpenAI has made these models available only to developers. However, the applications they build will reach a much wider audience. For instance, a developer might build a real-time translator app that lets people who speak different languages talk to each other.

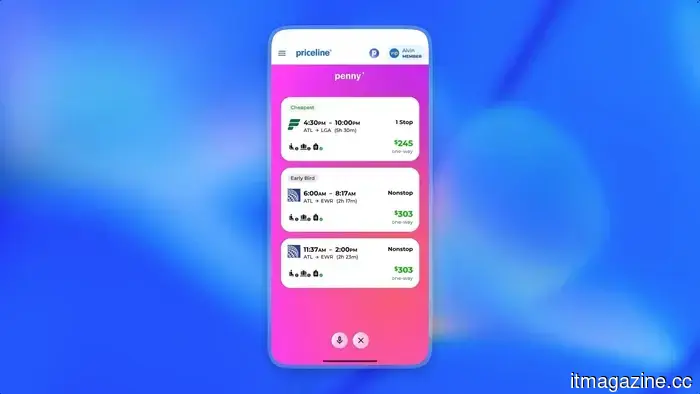

Companies already experimenting with the new models include Zillow, Priceline, and Vimeo. Zillow is developing a voice assistant that handles home searches and tour scheduling from a single spoken request. Priceline can manage flight and hotel reservations, including cancellations and rebookings. Vimeo is using the technology for real-time transcription.

Pricing starts at $0.017 per minute for Whisper, $0.034 per minute for Translate, and $32 per 1M audio input tokens for GPT-Realtime-2.
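To make those rates concrete, here is a rough cost sketch in Python. The three prices come from the figures above; the function names, session lengths, and token count are hypothetical and only for illustration. The article does not say how many audio tokens a minute of speech produces, or what output tokens cost for GPT-Realtime-2, so the sketch takes a token count as input and covers audio input only.

```python
# Rates quoted in the article (USD). Everything else here is illustrative.
WHISPER_USD_PER_MINUTE = 0.017
TRANSLATE_USD_PER_MINUTE = 0.034
REALTIME2_USD_PER_MILLION_AUDIO_INPUT_TOKENS = 32.0


def per_minute_cost(minutes: float, usd_per_minute: float) -> float:
    """Cost of a model billed per minute of audio."""
    return minutes * usd_per_minute


def realtime2_audio_input_cost(audio_input_tokens: int) -> float:
    """Audio-input cost only; output pricing is not given in the article."""
    millions = audio_input_tokens / 1_000_000
    return millions * REALTIME2_USD_PER_MILLION_AUDIO_INPUT_TOKENS


if __name__ == "__main__":
    # Hypothetical workloads: a 60-minute captioned meeting, a 30-minute
    # translated call, and a voice session that consumed 250,000 audio
    # input tokens (an assumed number, not a measured one).
    print(f"Whisper, 60 min:      ${per_minute_cost(60, WHISPER_USD_PER_MINUTE):.2f}")
    print(f"Translate, 30 min:    ${per_minute_cost(30, TRANSLATE_USD_PER_MINUTE):.2f}")
    print(f"Realtime-2, 250K in:  ${realtime2_audio_input_cost(250_000):.2f}")
```

At these rates, an hour of streaming transcription and half an hour of live translation each come to roughly a dollar, while GPT-Realtime-2 costs depend on how many tokens a session actually consumes.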

Rachit is an experienced tech journalist with over seven years of covering the consumer technology sector.

