ChatGPT now lets you designate someone to be contacted if you're struggling.

OpenAI is incorporating a human safety net into ChatGPT for times of crisis.

AI chatbots have made it remarkably easy to talk about almost anything, including the most serious issues. That openness is a double-edged sword. To address it, OpenAI is introducing a feature that brings in a trusted person when things turn serious.

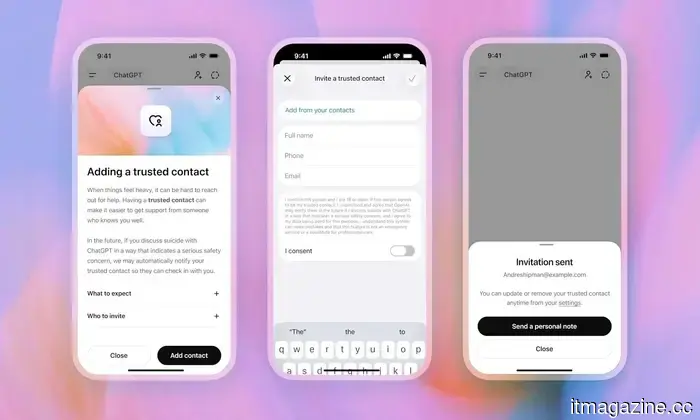

The company is launching a feature called Trusted Contact, which is rolling out in ChatGPT's settings for adult users. It lets users nominate one person who will be alerted if ChatGPT detects a serious concern about self-harm.

**How does Trusted Contact work?**

Setting up a Trusted Contact is optional. If you choose to do so, the person you nominate must be at least 18 years old (19 in South Korea). Once you select someone, they receive an invitation explaining what the role involves, and they have one week to accept it; the feature becomes active only after they do. If they decline, you can choose someone else.

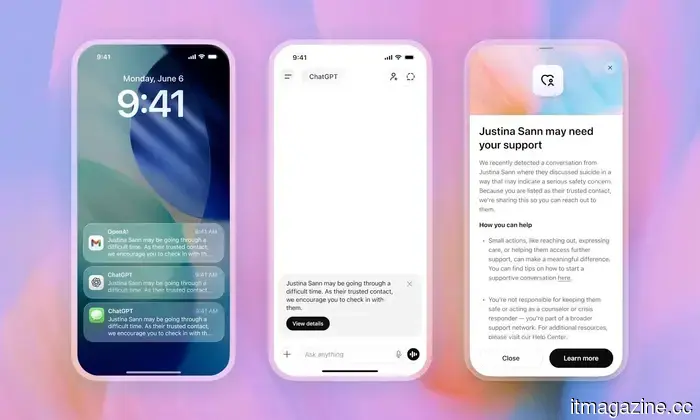

The alert system is not automatic. If ChatGPT identifies a conversation that raises potential concerns, it first tells the user that their Trusted Contact might be notified and encourages them to reach out to that person directly, offering suggested ways to start the conversation. A small team of specially trained human reviewers then evaluates the situation. Only if they determine there is a serious risk does the contact receive a notification by email, text, or in-app alert. The alert does not include chat transcripts or any details of the conversation; it simply says that self-harm came up in a concerning way and asks the contact to check in. OpenAI aims to complete the human review in under an hour.

**Why is OpenAI implementing this now?**

Trusted Contact is part of a broader set of safety features on the platform. OpenAI previously introduced features that alert parents when a linked teen account shows signs of distress; Trusted Contact extends the same idea to adult users. The feature was developed with input from clinicians, researchers, and mental health organizations, including the American Psychological Association.

It is important to note that Trusted Contact is not a replacement for crisis hotlines, emergency services, or professional mental health care. ChatGPT will still direct users to those resources when necessary. Users have the option to change or remove their Trusted Contact at any time, and contacts can also opt out whenever they wish.

The reality is that ChatGPT is being used for highly personal conversations, whether OpenAI anticipated this or not. Implementing a Trusted Contact feature is a positive step and acknowledges that a chatbot has its limitations.

---

Korea welcomes a robotic Buddhist monk at a real temple, hinting at what may come next.

A humanoid robot has taken part in a Buddhist ceremony in Seoul. The 1.3-meter-tall robot was introduced at Jogyesa Temple in central Seoul ahead of Buddha's Birthday celebrations, where it received the Dharma name "Gabi" in a special refuge ceremony conducted by the Jogye Order of Korean Buddhism, the largest Buddhist order in South Korea.

**Why a robot became a real monk in a real temple**

---

This new voice update from OpenAI makes Siri and Alexa seem outdated.

OpenAI has introduced three new audio models in its Realtime API, a significant release for anyone building voice-powered applications. The three models (GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper) push voice AI beyond simple back-and-forth exchanges, letting it understand context, take actions, and hold a genuine conversation.

---

Perplexity’s AI answering engine will not be integrated into Snapchat after all.

The anticipated integration of Perplexity into Snapchat is no longer happening. Snap disclosed in its Q1 2026 investor letter that the two companies “amicably ended the relationship in Q1,” terminating a $400 million cash-and-equity agreement announced the previous November. The deal would have built Perplexity’s AI answering engine directly into Snapchat’s chat interface, letting users ask questions and receive conversational, sourced answers without leaving the app. Snap had previously said the partnership would start contributing to revenue in 2026, but its latest sales forecast now assumes no contribution from Perplexity.

Other articles

This latest voice update from OpenAI makes Siri and Alexa seem like they have a lot to learn.

OpenAI has introduced three new audio models that can reason, translate across more than 70 languages, and transcribe speech in real time, making voice a genuinely useful interface for developers.

SoftBank cuts the target for its margin loan backed by OpenAI shares by 40% to $6 billion.

SoftBank has cut the target for its margin loan backed by OpenAI shares from $10 billion to potentially as little as $6 billion, as lenders worry about the valuation of the OpenAI shares pledged as collateral.

G2A appoints CVC veteran Krzysztof Krawczyk as chair of its advisory board.

Krzysztof Krawczyk, who established CVC's Warsaw office, has acquired a stake in G2A and become chair of its advisory board as the marketplace shifts its focus toward mergers and acquisitions and artificial intelligence.

Lime files for a Nasdaq IPO under the ticker LIME.

Lime, the Uber-backed micromobility company, has filed for a US IPO under its corporate name, Neutron Holdings. Goldman Sachs and JPMorgan are leading the offering.

Top Global HR Software for Multinational Corporations in 2026

We evaluated the top global HR software platforms for international businesses in 2026, including HiBob, Rippling, BambooHR, Personio, UKG, Sage HR, and ADP.

NVIDIA secures a $2.1 billion warrant in IREN as part of a 5GW AI data center agreement.

NVIDIA plans to invest as much as $2.1 billion in data center operator IREN via a five-year warrant for 30 million shares.
