Reasons why the EU's anonymisation approach might fail to meet GDPR requirements.

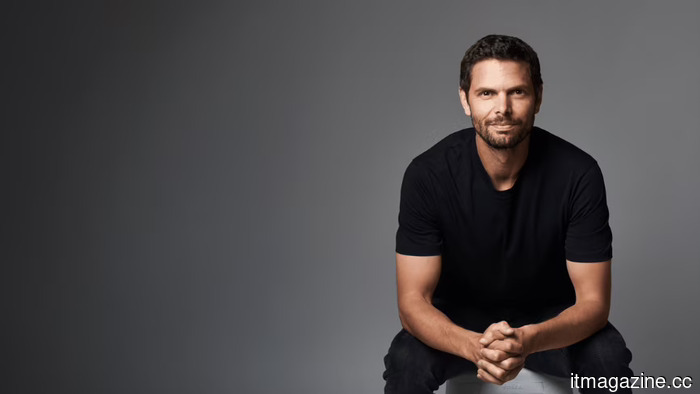

Sergei Vassilvitskii, a notable scientist at Google since 2012, has warned Brussels that the Commission's proposed anonymisation method for mandatory sharing of search data can be broken in under two hours, according to a demonstration by his red team. The deadline for the decision is July 27.

A familiar pattern in EU regulatory matters runs as follows: a US tech firm opposes a rule from Brussels and frames its objections as protection of user welfare, only for regulators to dismiss them as self-interest disguised as privacy concern. The exclusive Reuters report published on Tuesday complicates that dismissal.

Vassilvitskii, one of the most-cited experts in differential privacy and a prominent figure at Google, has written to the European Commission warning that the proposed anonymisation technique for compelled search-data sharing is vulnerable to breaches in less than two hours. He conveyed his worries in comments to Reuters, stating, “We are concerned because the EC’s approach to anonymisation fails to safeguard the privacy of Europeans: our red team was able to re-identify users in under two hours.”

The timeframe is notably specific, and it is consistent with the established technical literature.

What the EU requires

This issue arises under the Digital Markets Act, the EU's key competition regulation for so-called gatekeeper platforms. On January 27, 2026, the Commission opened formal proceedings against Google under Article 6(11) of the DMA, which requires gatekeeper search engines to give third-party competitors access to anonymised ranking, query, click, and view data on fair, reasonable, and non-discriminatory terms.

According to the Commission's press statements, the proceedings aim to clarify four operational details: the types of data to be shared, the anonymisation techniques to be used, the conditions for access, and the eligibility criteria for AI chatbot providers (including OpenAI, Anthropic, and others) to receive the data.

Google faces a compliance deadline of July 27, 2026. Missing it could put the company in breach of the DMA and expose it to fines of up to 10% of its global annual revenue. As The Register noted in mid-April, Google has faced approximately €9.71 billion in European antitrust fines since 2017, so the stakes in this proceeding are significant for the company.

What is unusual about this proceeding is that the proposed remedy, sharing search data, creates privacy risks of a kind that most DMA remedies do not. The Information Technology and Innovation Foundation highlighted this tension on May 1, noting that requiring a search engine to make user data available to competitors inherently increases the risk of misuse.

The Chamber of Progress expressed similar concerns that week, and CyberInsider cautioned that the proposal could facilitate widespread surveillance if the anonymisation methods are insufficient. Vassilvitskii's comments provide a technical basis for this apprehension.

In contemporary privacy research, anonymisation is not a binary property of a dataset. It is a probabilistic property that depends on (a) the data itself, (b) the auxiliary information available to potential attackers, and (c) the anonymisation method employed. Vassilvitskii's research, as outlined in his Google Research profile, has centred on differential privacy, which offers a mathematical framework for measuring and bounding the risk of re-identification in shared datasets. His 2025 paper on differentially private datasets for Google's Topics API is a well-documented application of this framework in a live commercial setting.
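To make the differential-privacy idea concrete, here is a minimal sketch of the textbook Laplace mechanism applied to per-query counts. It is an illustration only, not the Commission's proposed technique and not Google's method. The function names, the toy counts, and the assumption that adding or removing one user changes the counts by at most one in total (sensitivity 1) are all hypothetical.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw one sample from Laplace(0, scale).

    The difference of two independent exponential variables with mean
    `scale` follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_query_counts(counts, epsilon, rng, sensitivity=1.0):
    """Return noisy counts under the Laplace mechanism.

    Assumption (hypothetical): adding or removing one user changes the
    counts by at most `sensitivity` in total.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = sensitivity / epsilon
    return {query: n + laplace_noise(scale, rng) for query, n in counts.items()}


if __name__ == "__main__":
    rng = random.Random(0)
    toy_counts = {"weather": 1200, "rare medical term": 3}  # invented data
    print(dp_query_counts(toy_counts, epsilon=0.5, rng=rng))
```

A smaller `epsilon` means more noise: stronger protection, but less useful data. With `epsilon=0.5` the noise scale is 2, so the count for the rare query is perturbed by an amount comparable to the count itself, while the common query is barely affected. A real release would also have to decide which queries appear at all, since the mere presence of a rare query can be revealing.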

The two-hour claim, viewed through this lens, is an empirical assertion rather than a rhetorical flourish. Vassilvitskii's red team was able to re-identify users in under two hours from a sample of search-engine query data anonymised according to the Commission's proposal. He describes the proposed technique as belonging to a class that has been shown to be susceptible to linkage attacks when queries are sufficiently unique.

This vulnerability is not hypothetical. In 2006, an anonymised dataset released by AOL allowed several users to be identified by name within days, including one case documented by the New York Times. The same principle applies with even greater force to today's search data, which is significantly more detailed than the data from 2006 and far easier to cross-reference with publicly available information.
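A linkage attack of the kind described above can be sketched in a few lines. The example below is a toy built on invented pseudonyms, queries, and public profiles. It is not the red team's method, which the reporting does not describe. It joins a pseudonymised query log against auxiliary public information and treats a user as re-identified when exactly one profile fits.

```python
def link_users(pseudonymised_log, public_profiles):
    """Match pseudonyms to names using auxiliary public information.

    pseudonymised_log: dict of pseudonym -> list of query strings
    public_profiles:   dict of name -> set of publicly known keywords
    A pseudonym counts as re-identified only if exactly one profile has
    all of its keywords present in that user's queries.
    """
    reidentified = {}
    for pseudonym, queries in pseudonymised_log.items():
        text = " ".join(queries).lower()
        candidates = [
            name
            for name, keywords in public_profiles.items()
            if all(keyword.lower() in text for keyword in keywords)
        ]
        if len(candidates) == 1:
            reidentified[pseudonym] = candidates[0]
    return reidentified


# Invented toy data: no real users, queries, or people.
log = {
    "user_001": ["landscapers in smallville", "smallville beekeeping club"],
    "user_002": ["weather tomorrow", "cheap flights"],
}
profiles = {
    "Alice Example": {"smallville", "beekeeping"},
    "Bob Example": {"smallville", "chess"},
}

print(link_users(log, profiles))  # → {'user_001': 'Alice Example'}
```

The pseudonym is never reversed. The combination of distinctive query terms does the work: the more unique a user's queries, the fewer candidate profiles remain.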

A complex political situation confronts Google. For the past decade, the company has maintained that user privacy is one of its core commitments. Simultaneously, it now faces a Commission proceeding that seeks to mandate the sharing of user data with competitors for competition-related reasons.

The assertion that this sharing could compromise user privacy, regardless of its technical validity, can be countered by the argument that Google's privacy concerns have arisen conveniently alongside its commercial interests.

Vassilvitskii's involvement appears to be an attempt to mitigate that counter-argument by tying the privacy discussion to
