Workers at Google DeepMind have decided to unionize following a Pentagon AI agreement that goes against eight years of ethical commitments.

**TL;DR** Employees at Google DeepMind in the UK voted 98 percent in favor of unionizing after Google entered into a classified Pentagon AI agreement for "any lawful purpose." It is the first collective organizing effort at a frontier AI lab. The union's demands include halting military use of AI, reinstating Google's withdrawn weapons commitment, and forming an independent ethics oversight body.

In 2018, approximately 4,000 Google staff signed a petition against Project Maven, a Pentagon initiative that used the company's AI for drone surveillance analysis, and Google did not renew the contract. The company then published a set of AI principles renouncing weapons and surveillance technologies that violate international norms, and formed an AI ethics team. The episode was seen as proof that tech workers could influence their company's ethical boundaries. Now, eight years later, Google has signed a classified military AI deal, removed its weapons pledge, and dismissed the leaders of that ethics team. The researchers behind the AI now being offered to the military have opted to unionize as the only recourse left to them.

**The vote**

In April, Google DeepMind's UK staff voted to join the Communication Workers Union and Unite the Union, with 98 percent in favor. They have since sent a letter to management requesting formal recognition of the unions as their official representatives. If recognition is granted, they would be the first unionized workers at a frontier AI lab anywhere in the world. The vote centered on the Pentagon rather than on compensation or working conditions. Over 580 Google employees, including 20 directors and senior DeepMind researchers, had previously urged CEO Sundar Pichai to reject the classified military AI agreement. Separately, more than 100 DeepMind employees signed an internal letter insisting that no DeepMind research should contribute to weapons development or autonomous targeting. The company signed the deal regardless.

The union's demands are clear: end the use of Google AI by the Israeli and US militaries, restore the company's commitment against developing AI weapons or surveillance tools, establish an independent ethics body, and guarantee researchers the right to abstain from projects on ethical grounds. These are unconventional union demands. They focus on governance and organizational accountability rather than traditional labor issues, because the governance structures originally meant to oversee such matters have been dismantled or sidelined in pursuit of profitability.

**The deal**

Google finalized a classified agreement with the Pentagon for "any lawful purpose" while also withdrawing from a $100 million drone swarm competition following an internal ethics evaluation, a combination researchers described as incoherent. The classified contract grants the Pentagon access to Google's AI models on secure networks, where Google cannot see what queries are run or what outputs are produced. Research scientist Alex Turner publicly criticized the deal, pointing out that Google "cannot veto usage" and is relying on "aspirational language without legal enforceability." The contract includes advisory guidelines discouraging mass surveillance and the deployment of autonomous weapons without human oversight, but the government can request safety adjustments, and there are no independent checks on compliance within a classified network.

Google's terms are reportedly more lenient than those of OpenAI, which maintains "full discretion" over its safety protocols. Anthropic alone refused to give the Pentagon unrestricted access, insisting that its models not be used for autonomous weapons or large-scale domestic surveillance. In response, the Pentagon designated Anthropic a supply chain risk, began phasing its products out of military use, and engaged seven companies, including Google, that accepted the terms Anthropic rejected. The message to AI researchers is clear: companies that maintain ethical restraints face repercussions, while those that discard them are rewarded.

**The history**

The 2018 Project Maven protest succeeded because Google's business model did not depend on military contracts: the company could forgo the Pentagon's few million dollars without meaningful harm to its ad-driven revenue. By 2026, the classified AI market has grown to be worth tens of billions of dollars, the Pentagon has shown it is willing to retaliate against non-compliant firms, and Google's rivals have already secured similar agreements. The conditions that gave workers leverage in 2018 have changed significantly. In February 2025, Google quietly removed its explicit pledge against AI weapons development from its published principles, stripping employees of the internal standard they had used to contest military-related projects.

The dismissals of Timnit Gebru and Margaret Mitchell, co-leaders of Google's Ethical AI team, in 2020 and 2021 were early signs of intolerance toward internal dissent on AI ethics. The 2024 termination of 28 employees who protested Project Nimbus, a $1.2 billion contract with the Israeli government for cloud computing and AI services, reinforced this. By 2026, the pattern is unmistakable: Google will pursue military and government AI contracts despite internal objections, and employees who raise concerns publicly risk dismissal. The union vote is the workers' response to this trend. With individual protests leading to job

Other articles

Asus Zenbook A16 Review: A stylish competitor to the MacBook from the Windows camp?

Equipped with a beautiful 3K OLED display, the Asus Zenbook A16 offers impressive battery life in a sleek design, positioning itself as a robust AI-ready performance machine for Windows enthusiasts.

Asus Zenbook A16 Review: A stylish competitor to the MacBook from the Windows camp?

Equipped with a beautiful 3K OLED display, the Asus Zenbook A16 offers impressive battery life in a sleek design, positioning itself as a robust AI-ready performance machine for Windows enthusiasts.

Metalenz's innovative face scanning technology is embedded beneath the phone's display, eliminating the need for unsightly cutouts.

Metalenz has demonstrated that payment-grade facial authentication can function with a fully powered-on display, a feat that Apple has attempted to achieve for years without success.

Metalenz's innovative face scanning technology is embedded beneath the phone's display, eliminating the need for unsightly cutouts.

Metalenz has demonstrated that payment-grade facial authentication can function with a fully powered-on display, a feat that Apple has attempted to achieve for years without success.

Google, Microsoft, and xAI have consented to share governmental evaluations of AI models before their release as the Mythos crisis prompts an increase in oversight.

Five leading AI laboratories are now presenting their models for evaluation by the US government. This voluntary program lacks legal authority but includes all significant AI developers following the Mythos crisis.

Google, Microsoft, and xAI have consented to share governmental evaluations of AI models before their release as the Mythos crisis prompts an increase in oversight.

Five leading AI laboratories are now presenting their models for evaluation by the US government. This voluntary program lacks legal authority but includes all significant AI developers following the Mythos crisis.

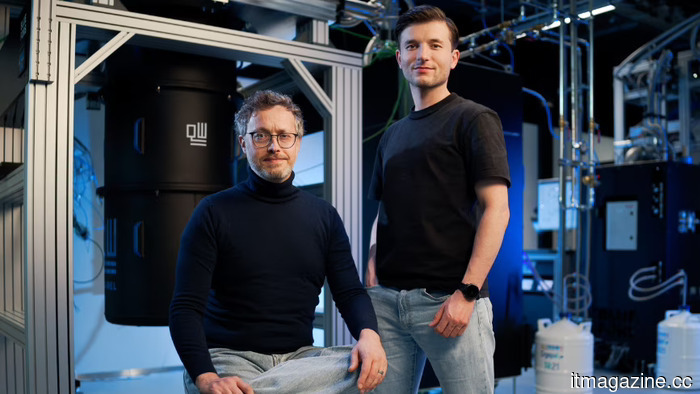

Inside QuantWare's €152 million funding round to develop KiloFab.

QuantWare has secured a €152 million Series B funding round, led by Intel Capital, In-Q-Tel, and ETF Partners, marking the largest deeptech funding round in Dutch history.

Inside QuantWare's €152 million funding round to develop KiloFab.

QuantWare has secured a €152 million Series B funding round, led by Intel Capital, In-Q-Tel, and ETF Partners, marking the largest deeptech funding round in Dutch history.

Workers at Google DeepMind have voted in favor of unionizing following a Pentagon AI agreement that supersedes eight years of commitments to ethical practices.

Staff at DeepMind UK voted 98% in favor of joining CWU and Unite following Google's agreement on a confidential Pentagon contract for "any lawful purpose." They are advocating for the cessation of military AI applications and the reinstatement of ethical standards.

Workers at Google DeepMind have voted in favor of unionizing following a Pentagon AI agreement that supersedes eight years of commitments to ethical practices.

Staff at DeepMind UK voted 98% in favor of joining CWU and Unite following Google's agreement on a confidential Pentagon contract for "any lawful purpose." They are advocating for the cessation of military AI applications and the reinstatement of ethical standards.

The founders of IronSource have secured $60 million at a valuation of $500 million for Zyg, an AI-driven platform that automates advertising in the e-commerce sector.

Zyg secured $60 million in funding, spearheaded by Accel, at a valuation of $500 million just two months following its stealth launch. The team at IronSource is developing AI agents designed to take the place of human ad buyers for direct-to-consumer brands.

The founders of IronSource have secured $60 million at a valuation of $500 million for Zyg, an AI-driven platform that automates advertising in the e-commerce sector.

Zyg secured $60 million in funding, spearheaded by Accel, at a valuation of $500 million just two months following its stealth launch. The team at IronSource is developing AI agents designed to take the place of human ad buyers for direct-to-consumer brands.

Workers at Google DeepMind have decided to unionize following a Pentagon AI agreement that goes against eight years of ethical commitments.

Staff at DeepMind UK voted 98% in favor of joining CWU and Unite following Google's agreement to a secret Pentagon contract for "any lawful purpose." They are calling for an end to military applications of AI and a return to ethical practices.