Google, Microsoft, and xAI have agreed to give the government pre-release access to their AI models for evaluation, as the Mythos crisis drives a push for greater oversight.

**TL;DR** Google, Microsoft, and xAI have joined OpenAI and Anthropic in granting the U.S. Commerce Department pre-release access to assess their AI models. The move places all five major AI labs under voluntary oversight by an office that lacks formal authority and has fewer than 200 employees. The expansion follows the Mythos crisis and discussions of an executive order that would formalize the review process.

The Mythos crisis forced the U.S. government to confront a question it had previously avoided: what happens when an AI model becomes powerful enough to endanger national security and the government has no formal mechanism to evaluate it before public release? On Tuesday, the Commerce Department disclosed that Google, Microsoft, and xAI will provide the U.S. government with pre-release access to their AI models for evaluation, joining OpenAI and Anthropic, which have been providing models for assessment since 2024. Collectively, these five companies dominate the global frontier AI landscape, and all have agreed to let a single government office evaluate their systems before deployment. The arrangement is voluntary and not backed by any statutory authority, so the government cannot block any release. It is the closest thing the U.S. has to an AI oversight system, built in under two years by a small office.

**The Office**

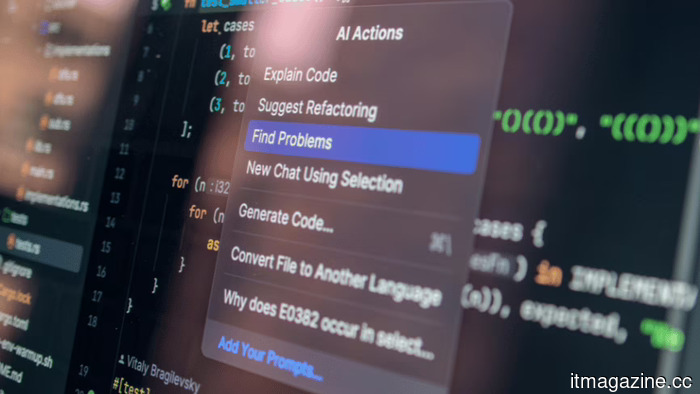

The Center for AI Standards and Innovation operates within the Commerce Department's National Institute of Standards and Technology. Established under President Biden in 2023 as the AI Safety Institute and restructured under Trump with an emphasis on standards and national security, the center has conducted more than 40 evaluations of AI models, including advanced systems that are not yet public. Developers often provide versions with reduced safety measures, allowing assessors to probe capabilities relevant to national security, such as biological weapon synthesis, cyberattack automation, and uncontrollable autonomous behavior at scale.

Chris Fall now oversees the center after the sudden exit of Collin Burns, a former AI researcher at Anthropic who was chosen for the role but removed by the White House after just four days. Burns had left his position and relocated for the job. His ouster, linked to his ties with a company the administration opposed, illustrates the political difficulty of building an oversight system in an industry where evaluators and those being evaluated come from the same talent pool. Trump's broader regulatory approach to AI has favored federal preemption of state regulations and a lenient stance toward the industry, yet the model evaluation program shows a tougher side: the government wants to understand these systems' capabilities before they are made available to the public.

**The Agreements**

The new collaborations with Google, Microsoft, and xAI broaden what was previously a two-company agreement to nearly comprehensive coverage of frontier AI. OpenAI and Anthropic have adjusted their agreements to conform with Trump's AI Action Plan, which tasks the center with leading national security-related assessments and positions it within a wider “evaluations ecosystem.” These agreements are not legally binding; they are voluntary commitments that companies can withdraw from at will. There is no legal requirement for pre-release evaluations, nor does any regulation authorize the center to delay or block deployment. The entire system hinges on the AI companies deciding that providing the government with early access aligns with their strategic interests.

From the companies' viewpoint, the alternative to voluntary cooperation could be legislation. Various proposed bills would grant the center permanent statutory authority, impose mandatory evaluation requirements, and enable it to set conditions on deployment. The Pentagon has shown readiness to blacklist AI companies that do not comply with government expectations, categorizing Anthropic as a supply-chain risk after it refused to allow its models to be used for autonomous weapons or mass domestic surveillance. The voluntary evaluation agreements serve partly as a way for the other companies to show their willingness to work with the government before compliance becomes obligatory.

**The Catalyst**

The expansion of the evaluation program comes amid the Mythos crisis. Mythos, the Anthropic model revealed in April, can autonomously identify and exploit zero-day vulnerabilities in major operating systems and web browsers, and has uncovered thousands of serious bugs, including long-standing vulnerabilities. The White House has opposed Anthropic’s plan to widen Mythos’s availability beyond its initial launch partners. The NSA is using it despite the Pentagon's sanctions against Anthropic. The EU is demanding access to Mythos for European cybersecurity efforts, arguing that such a crucial tool should not be controlled solely by an American company that the U.S. government has partially blacklisted.

Mythos shows why the evaluation program is needed: its capabilities pose immediate national security risks that cannot wait for assessment after deployment. The center's evaluations since 2024 have presumably uncovered capabilities in unreleased models that influenced policy decisions, although these assessments covered only two companies until now. Google's Gemini, Microsoft's models, and xAI's Grok were not previously subject to pre-release government evaluation. The new agreements close this gap, so that future models with similar levels of capability are assessed by evaluators before public release.

**The Limits**

The program's key vulnerability is

Other articles

Nscale has invested €695 million in Portugal, while the crypto-to-AI neocloud reaches a valuation of $14.6 billion within two years, collaborating with Microsoft.

Nscale is set to provide 66,000 Nvidia Rubin GPUs for Microsoft's 1.2 GW Sines campus. Once a crypto miner, it has now become Europe's most valuable AI infrastructure startup, valued at $14.6 billion.

Nscale has invested €695 million in Portugal, while the crypto-to-AI neocloud reaches a valuation of $14.6 billion within two years, collaborating with Microsoft.

Nscale is set to provide 66,000 Nvidia Rubin GPUs for Microsoft's 1.2 GW Sines campus. Once a crypto miner, it has now become Europe's most valuable AI infrastructure startup, valued at $14.6 billion.

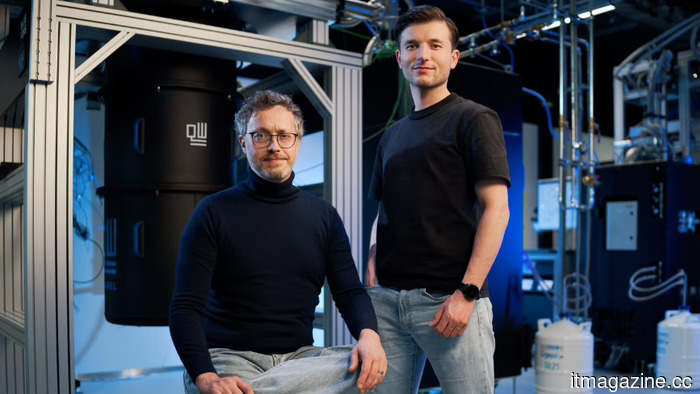

Inside QuantWare's €152 million funding round for the construction of KiloFab

QuantWare has successfully completed a €152 million Series B funding round, led by Intel Capital, In-Q-Tel, and ETF Partners, marking the largest deeptech financing round in the Netherlands to date.

Inside QuantWare's €152 million funding round for the construction of KiloFab

QuantWare has successfully completed a €152 million Series B funding round, led by Intel Capital, In-Q-Tel, and ETF Partners, marking the largest deeptech financing round in the Netherlands to date.

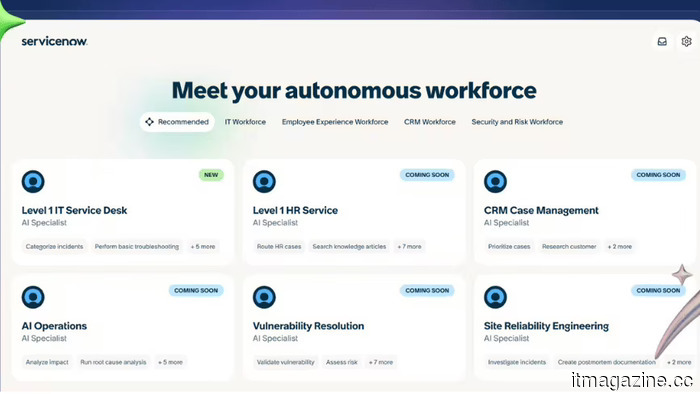

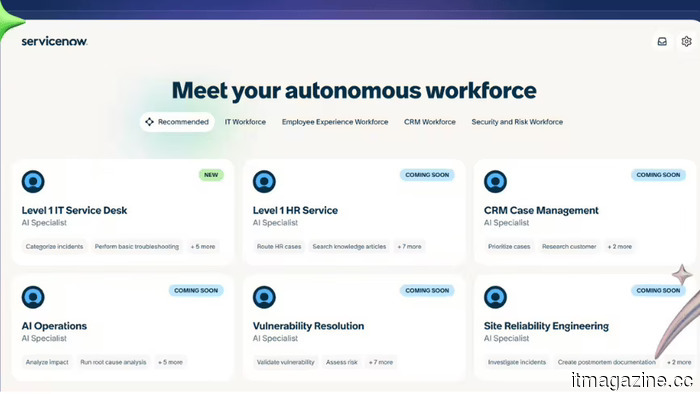

ServiceNow anticipates reaching $30 billion by 2030, with one-third of its annual contract value coming from AI.

ServiceNow anticipates achieving $30 billion in subscription revenue by 2030, with 30% of that annual contract value coming from Now Assist, its primary AI product. The presentation during investor day serves as a structural response to worries regarding AI-SaaS displacement.

ServiceNow anticipates reaching $30 billion by 2030, with one-third of its annual contract value coming from AI.

ServiceNow anticipates achieving $30 billion in subscription revenue by 2030, with 30% of that annual contract value coming from Now Assist, its primary AI product. The presentation during investor day serves as a structural response to worries regarding AI-SaaS displacement.

ServiceNow anticipates reaching $30 billion by 2030, with one-third of annual contract value (ACV) coming from AI.

ServiceNow forecasts $30 billion in subscription revenue for 2030, with 30% of that annual contract value coming from Now Assist, the company’s premier AI product. The presentation for investors addresses concerns about the potential displacement of AI in the SaaS sector.

ServiceNow anticipates reaching $30 billion by 2030, with one-third of annual contract value (ACV) coming from AI.

ServiceNow forecasts $30 billion in subscription revenue for 2030, with 30% of that annual contract value coming from Now Assist, the company’s premier AI product. The presentation for investors addresses concerns about the potential displacement of AI in the SaaS sector.

Asus Zenbook A16 Review: A stylish competitor to the MacBook from the Windows camp?

Equipped with a beautiful 3K OLED display, the Asus Zenbook A16 offers impressive battery life in a sleek design, positioning itself as a robust AI-ready performance machine for Windows enthusiasts.

Asus Zenbook A16 Review: A stylish competitor to the MacBook from the Windows camp?

Equipped with a beautiful 3K OLED display, the Asus Zenbook A16 offers impressive battery life in a sleek design, positioning itself as a robust AI-ready performance machine for Windows enthusiasts.

Metalenz's latest face scan technology is integrated beneath the phone display, eliminating the need for unsightly cutouts.

Metalenz has just demonstrated that payment-grade facial recognition can function with a fully lit display, a feat that Apple has been attempting to achieve for years without success.

Metalenz's latest face scan technology is integrated beneath the phone display, eliminating the need for unsightly cutouts.

Metalenz has just demonstrated that payment-grade facial recognition can function with a fully lit display, a feat that Apple has been attempting to achieve for years without success.

Google, Microsoft, and xAI have consented to provide evaluations of AI models to the government before their release, as the Mythos crisis necessitates an increase in oversight.

Five leading AI laboratories are now presenting their models for assessment by the US government. This voluntary program lacks legal authority but includes all major AI developers following the Mythos crisis.