Employees at Google DeepMind have decided to unionize following a Pentagon AI agreement that contradicts eight years of ethical commitments.

TL;DR: Employees at Google DeepMind's UK offices voted overwhelmingly (98%) to unionize after Google signed a classified AI contract with the Pentagon for "any lawful purpose." If the union is recognized, they will be the first workers at a frontier AI lab to organize collectively. The union's demands include ending military use of Google's AI, reinstating Google's withdrawn weapons pledge, and establishing an independent ethics body.

In 2018, four thousand Google employees protested Project Maven, a military contract that used the company's AI to analyze drone surveillance footage. Google declined to renew the contract, pledged not to develop AI for weapons or for surveillance that violates international norms, and created an AI ethics team. The episode showed that tech workers could shape their companies' ethical positions. Eight years later, Google has withdrawn that weapons pledge, dismissed the leaders of the ethics team set up in the wake of Project Maven, and signed a classified AI contract with the Pentagon. The researchers who build the models now available for military use have responded by voting to unionize.

The vote

In April, workers at Google DeepMind in the UK voted 98% in favor of joining the Communication Workers Union (CWU) and Unite the Union, and sent a letter to management requesting formal recognition of the unions as their representatives. If Google recognizes them, these workers will be the first at a frontier AI lab to unionize. The vote centered on the Pentagon contract, not on pay or working conditions. Before the deal was signed, more than 580 Google employees, including 20 directors and senior researchers, urged CEO Sundar Pichai to reject it, and more than 100 DeepMind employees signed an internal letter demanding that no DeepMind research be used for weapons development or autonomous targeting. Google signed the contract anyway.

The union has made four specific demands: that the US and Israeli militaries stop using Google AI, that the company restore its scrapped pledge not to develop AI weapons, that an independent ethics oversight body be created, and that individual researchers be allowed to opt out of projects they find morally objectionable. These are demands for governance, born of disillusionment: internal oversight structures such as the AI principles and ethics boards were dismantled as soon as they conflicted with revenue.

The deal

Google’s classified Pentagon agreement gives the military access to its AI models on networks where Google cannot monitor usage or outputs. Researchers objecting to the deal point out that Google “can’t veto usage” and relies on “aspirational language with no enforceable restrictions.” The contract includes advisory guidelines against mass surveillance and autonomous weapons, but it allows the government to change safety settings without independent oversight. According to reports, Google’s deal is more permissive than OpenAI’s, under which OpenAI retains control over its safety protocols. Anthropic refused to grant the Pentagon unrestricted access and was designated a supply-chain risk as a result, while Google and others signed agreements with fewer ethical constraints. The pattern is clear: companies that hold to ethical limits are penalized, and those that do not are rewarded.

The history

The 2018 Project Maven protests succeeded because Google did not depend on military contracts and could give up the projected Pentagon revenue without significant cost. In 2026, the classified AI market is projected to be worth billions, the Pentagon has shown it is willing to retaliate harshly against companies that refuse its terms, and Google’s competitors have already signed equivalent agreements. The conditions that gave employees leverage in 2018 have largely disappeared. In February 2025, Google also quietly removed from its AI principles its pledge not to develop AI for weapons, eliminating a key internal standard employees had used to oppose military projects.

The dismissals of Timnit Gebru and Margaret Mitchell, co-leads of Google’s Ethical AI team, and the firing of 28 employees who protested Project Nimbus show a clear intolerance for internal dissent on AI ethics. By 2026, the pattern is evident: Google will pursue military contracts despite internal opposition, and employees who dissent will be fired. The union vote is a collective response to this pattern, because individual protest has proven ineffective.

The context

Meta and Microsoft have laid off 23,000 employees while sharply increasing their investment in AI infrastructure. Across Big Tech, this restructuring is eliminating jobs in many functions while concentrating resources on the researchers and engineers who build AI models. DeepMind's workers are among the most valuable in the industry, and their decision to unionize suggests they recognize that their leverage may be temporary: as models advance, companies may need fewer researchers.

In China, courts have ruled against dismissals based solely on replacing workers with AI, setting limits even in an aggressive AI economy. Governments are starting to define how far AI may displace jobs, though approaches differ across jurisdictions, and the ethics of military AI applications still lack any legal framework. DeepMind’s union operates in the ambiguous space between labor law, which protects the right to organize, and defense procurement, where government discretion is vast.

The question

The effectiveness of the DeepMind union hinges on whether Google voluntarily recognizes it. UK law offers a statutory

Other articles

Google, Microsoft, and xAI have agreed to let the government evaluate their AI models before release, as the Mythos crisis drives demand for more oversight.

Five leading AI laboratories now submit their models to the US government for assessment. The voluntary program has no legal authority, but since the Mythos crisis it includes all the major AI developers.

The founders of IronSource have secured $60 million at a valuation of $500 million for Zyg, an AI-driven platform that automates advertising in the e-commerce sector.

Zyg raised $60 million in a round led by Accel, at a $500 million valuation, just two months after emerging from stealth. The IronSource founders are building AI agents designed to replace human ad buyers for direct-to-consumer brands.

Intel has appointed Qualcomm veteran Alex Katouzian to head a new Client Computing and Physical AI division.

Intel has brought on Alex Katouzian, who spent 25 years at Qualcomm, to head a newly formed Client Computing and Physical AI division. This marks the second high-profile recruitment from Qualcomm during CEO Lip-Bu Tan's leadership.

Intel has appointed Qualcomm veteran Alex Katouzian to head a new Client Computing and Physical AI division.

Intel has brought on Alex Katouzian, who spent 25 years at Qualcomm, to head a newly formed Client Computing and Physical AI division. This marks the second high-profile recruitment from Qualcomm during CEO Lip-Bu Tan's leadership.

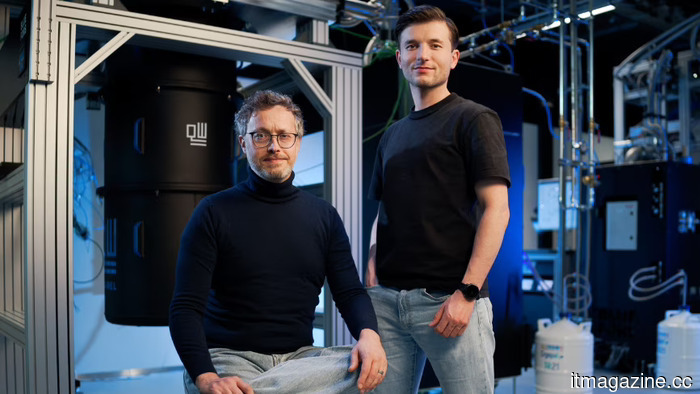

Inside QuantWare's €152 million funding round aimed at developing KiloFab.

QuantWare has completed a €152 million Series B funding round, spearheaded by Intel Capital, In-Q-Tel, and ETF Partners, marking the largest deep tech funding round in the Netherlands to date.

Coinbase reduces its workforce by 14% and reorganizes around AI-focused pods as cryptocurrency revenue declines by 26% and trading volumes reach an 18-month low.

Coinbase is reducing its workforce by 660 employees and reorganizing into AI-focused teams with a maximum of five layers of management. Revenue for Q1 is projected to decline by 26% due to a 48% decrease in cryptocurrency trading volumes.