Google has finalized a classified AI agreement with the Pentagon for

**TL;DR** Google entered into a classified AI agreement with the Pentagon for “any lawful government purpose” just one day after over 580 employees urged CEO Sundar Pichai to decline such an arrangement. The contract includes advisory restrictions (no mass surveillance and no autonomous weapons without human oversight), but allows the government to request changes to safety settings. On the same day, Bloomberg reported that Google quietly exited a $100 million contest for drone swarm technology in February after an internal ethics review. Google is attempting to differentiate between providing general-purpose AI and creating specific weapons, though this distinction may not hold up on classified networks.

Google has confirmed a deal that allows the Pentagon to use its Gemini AI models for classified military operations under terms that permit "any lawful government purpose." The announcement came a day after more than 580 Google employees signed a letter urging Pichai to reject such agreements. The contract grants the Department of Defense API access to Google's AI systems on classified networks, building on a relationship that already deploys Gemini technology to three million Pentagon personnel on unclassified systems. The agreement includes a clause stating that “the AI System is not intended for, and should not be used for, domestic mass surveillance or autonomous weapons (including target selection) without appropriate human oversight and control.” On the same day, Bloomberg reported that Google had quietly withdrawn from a $100 million Pentagon prize challenge to develop technology for voice-controlled autonomous drone swarms, pulling out in February after an internal ethics review despite having advanced in the competition. Google officially cited a lack of “resourcing.” The company is attempting to draw a line, but it is not the line its employees asked for.

The classified AI agreement functions as an extension of an existing Pentagon contract, providing API access instead of custom model development or specialized military applications. A representative from Google Public Sector confirmed this arrangement. The Pentagon will have direct access to Google’s software on classified networks that are separated from the public internet and used for mission planning, intelligence analysis, and weapons targeting. The language permitting “any lawful government purpose” aligns Google with OpenAI and Elon Musk’s xAI, both of which have formed their own classified AI agreements with the Pentagon. The government can request modifications to Google’s AI safety protocols and content filters, effectively allowing the Pentagon to alter the safeguards established by Google’s researchers.

The stated restrictions against mass surveillance and autonomous weapons without human oversight mirror the conditions OpenAI negotiated in its own Pentagon deal. However, the enforcement mechanism is the same one Google's employees called inadequate in their letter: on classified networks, Google cannot see the queries executed, the outputs generated, or the decisions made based on those outputs. The phrase "should not be used for" is advisory rather than a binding prohibition, and “appropriate human oversight and control” is left undefined. The employees wrote that “the only way to guarantee that Google does not become associated with such harms is to reject any classified workloads.” Google chose to accept those workloads, relying on language that employees had already argued was unenforceable. Pichai opened Cloud Next 2026 by announcing 750 million Gemini users and a $240 billion backlog. The same Gemini infrastructure that serves those users is now being made available on classified military networks, where its use cannot be monitored by anyone outside the Pentagon.

The exit from the drone swarm challenge is the other half of this story. Google had advanced in a $100 million Pentagon prize challenge to develop technology that would let commanders direct autonomous drone swarms by voice, converting spoken instructions like “left” into digital signals sent to the drones. The company formally notified the government of its decision to withdraw on February 11, 2026. While Google cited a lack of resources, Bloomberg's reporting indicates that the decision followed an internal ethics review. The withdrawal recalls Project Maven in 2018, when around 4,000 Google employees protested the use of AI to analyze drone video feeds, leading Google to let the contract expire. Palantir took over the contract, which was valued at millions of dollars, and Palantir’s stake in the Maven project subsequently grew to $13 billion.

The contrast is striking. On the same day that Google confirmed a deal granting the Pentagon classified access to Gemini for “any lawful government purpose,” it emerged that the company had left a program that would have applied its AI to controlling autonomous drone swarms. Google is prepared to place its most powerful AI models on classified networks where it has no visibility into their use, yet it balked at building voice-controlled drone swarms. The distinction is central to Google’s internal ethical guidelines: API access to general-purpose models is a step removed from weapons applications, even if the models will run on networks used for weapons targeting. By contrast, technology expressly designed for managing drone swarms is a direct weapons application that the ethics review could not sanction. The line Google seeks to draw is between supplying tools and creating weapons, between providing access and designing lethal technology. The question hinges on

Other articles

Musk states that the OpenAI case will establish a precedent for

Musk informed the jurors that his lawsuit against OpenAI is focused on safeguarding charitable contributions rather than seeking personal advantages. He has given up any financial benefit and is advocating for a return to the nonprofit status. OpenAI, however, claims that he was aiming for control.

Musk states that the OpenAI case will establish a precedent for

Musk informed the jurors that his lawsuit against OpenAI is focused on safeguarding charitable contributions rather than seeking personal advantages. He has given up any financial benefit and is advocating for a return to the nonprofit status. OpenAI, however, claims that he was aiming for control.

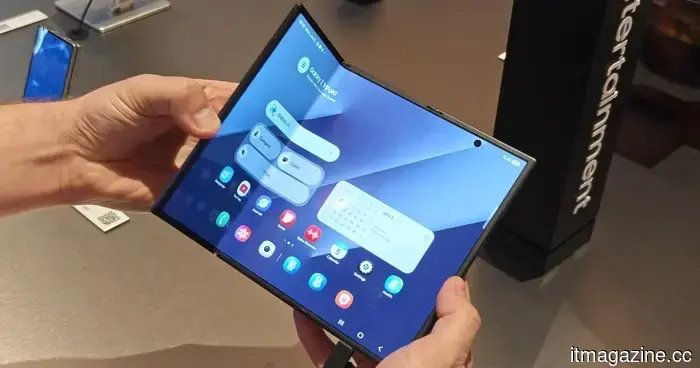

Samsung is facing a potential ban on its foldable phones in the US due to a patent dispute.

A recent lawsuit alleges that Samsung has violated patents related to foldable phones and is requesting a ban on sales in the US, although these allegations have yet to be substantiated.

Samsung is facing a potential ban on its foldable phones in the US due to a patent dispute.

A recent lawsuit alleges that Samsung has violated patents related to foldable phones and is requesting a ban on sales in the US, although these allegations have yet to be substantiated.

Scholly's founder, Christopher Gray, has filed a lawsuit against Sallie Mae, claiming wrongful termination and the unauthorized sale of student data that includes information about minors.

Christopher Gray created Scholly to assist students in finding scholarships. Following its acquisition by Sallie Mae, he claims he was terminated for raising concerns about the sale of users' personal data, including information about minors.

Scholly's founder, Christopher Gray, has filed a lawsuit against Sallie Mae, claiming wrongful termination and the unauthorized sale of student data that includes information about minors.

Christopher Gray created Scholly to assist students in finding scholarships. Following its acquisition by Sallie Mae, he claims he was terminated for raising concerns about the sale of users' personal data, including information about minors.

OpenAI states that it is

OpenAI rejected a Wall Street Journal report about missed targets, labeling it as clickbait. In reaction, investors sold off shares in Oracle, CoreWeave, SoftBank, and semiconductor companies. The main issue remains whether the $600 billion in commitments remains viable.

OpenAI states that it is

OpenAI rejected a Wall Street Journal report about missed targets, labeling it as clickbait. In reaction, investors sold off shares in Oracle, CoreWeave, SoftBank, and semiconductor companies. The main issue remains whether the $600 billion in commitments remains viable.

Nvidia discreetly announced a new version of the GeForce RTX 5070 GPU within a driver blog post.

Nvidia's latest 12GB GeForce RTX 5070 Laptop GPU employs a different GDDR7 memory format to tackle supply issues, offering laptop manufacturers a third configuration option in addition to the current 8GB variant.

Nvidia discreetly announced a new version of the GeForce RTX 5070 GPU within a driver blog post.

Nvidia's latest 12GB GeForce RTX 5070 Laptop GPU employs a different GDDR7 memory format to tackle supply issues, offering laptop manufacturers a third configuration option in addition to the current 8GB variant.

South Africa retreats from its national AI policy following the discovery that at least 6 out of 67 academic citations were identified as AI-generated fabrications.

South Africa withdrew its proposed AI policy after News24 discovered fabricated citations in actual journals. Minister Malatsi termed it an "unacceptable lapse." The integrity of the policy aimed at regulating AI was compromised by this issue.

South Africa retreats from its national AI policy following the discovery that at least 6 out of 67 academic citations were identified as AI-generated fabrications.

South Africa withdrew its proposed AI policy after News24 discovered fabricated citations in actual journals. Minister Malatsi termed it an "unacceptable lapse." The integrity of the policy aimed at regulating AI was compromised by this issue.

Google has finalized a classified AI agreement with the Pentagon for

Google provided the Pentagon with classified access to Gemini for "any lawful purpose" just one day after more than 580 employees voiced their objections. Additionally, the company withdrew from a $100 million drone swarm competition following an ethics assessment.