AI has significantly accelerated coding but has also created its own set of challenges.

AI has the capacity to generate code ten times faster, but companies are now overwhelmed by it.

AI coding tools were meant to make software development faster and simpler. They succeeded, perhaps too well. People are writing code faster than ever, and that is creating a new set of problems for businesses.

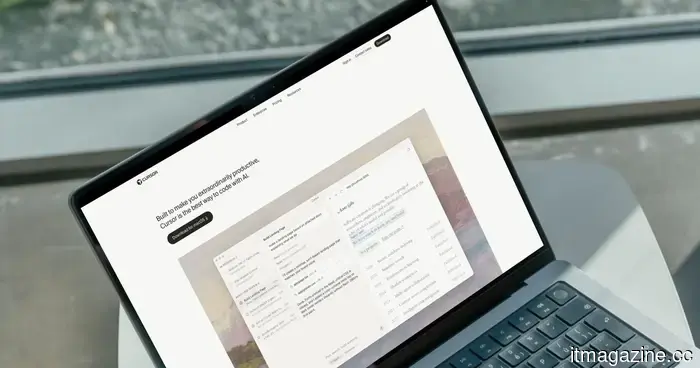

As reported by The New York Times, one financial services firm began using Cursor, an AI coding tool, and its code output rose from 25,000 to 250,000 lines per month. That sounds like a win, but it left the firm with a backlog of one million lines of unreviewed code.

“The volume of code being produced, alongside the rise in vulnerabilities, is something they can’t manage,” noted Joni Klippert, CEO of StackHawk, a security firm collaborating with the company.

The problem has spread across Silicon Valley. Businesses are generating more code than they have people to review, and security concerns are rising as a result.

So, what's the issue?

Application security engineers are tasked with identifying errors in AI-generated code, but there is a severe shortage of them. “There aren't enough application security engineers globally to meet the demands of just American firms,” stated Joe Sullivan, an advisor to Costanoa Ventures.

The problem extends beyond staffing. AI coding tools tend to work better on personal laptops than on secure corporate servers, so engineers end up downloading entire codebases onto their personal devices. If a laptop is lost, a large amount of sensitive data is put at risk with it.

Is more AI really the solution?

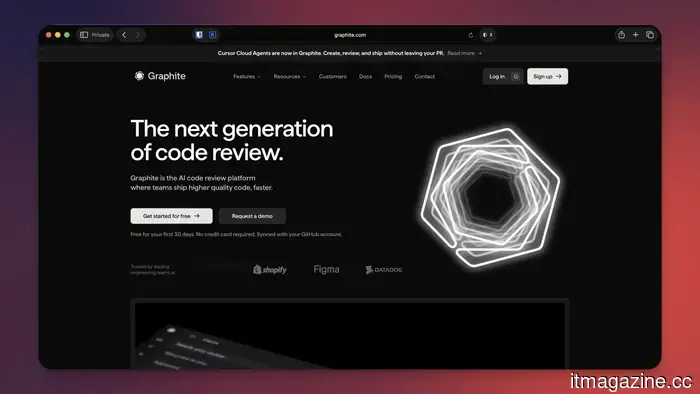

As expected, Silicon Valley believes so. Companies like Anthropic, OpenAI, and Cursor are already creating AI-driven review tools to detect errors in AI-generated code. Cursor has even acquired a code-review startup to integrate this functionality into its offering.

According to Cursor’s head of engineering, “The software development factory has effectively broken. We’re attempting to rearrange the components in some way.”

I am skeptical. While AI will eventually be able to identify code errors, human oversight is still essential before code reaches production. Recently, AI-generated code led to an Amazon outage that resulted in more than 100,000 lost orders and 1.6 million errors.

No company wants to face such an incident, and I am not convinced that AI code reviewers are the solution.

Rachit is a tech journalist with more than seven years of experience covering the consumer technology landscape.
