AI has significantly accelerated coding, but it has also created its own set of issues.

AI can generate code ten times faster, and companies are now overwhelmed by the volume.

AI coding tools were expected to streamline software development, and they certainly have. However, this rapid acceleration has led to new challenges for businesses.

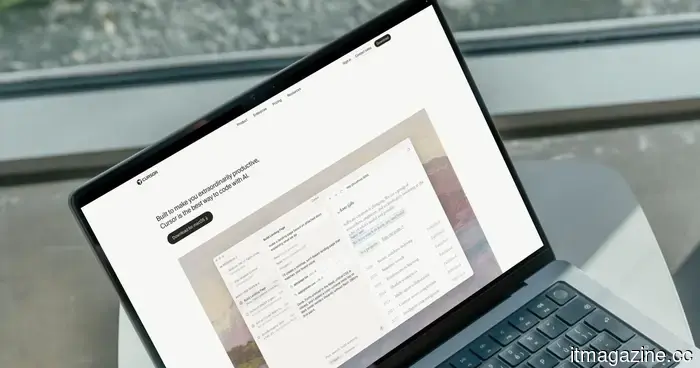

As reported by The New York Times, a financial services firm began using Cursor, an AI coding tool, and saw its output surge from 25,000 to 250,000 lines of code each month. The increase looks like a win, but it left the firm with a backlog of one million lines of unreviewed code.

"The enormous volume of code being produced, along with the rise in vulnerabilities, is something they're struggling to keep up with," stated Joni Klippert, CEO of StackHawk, a security startup collaborating with the company.

The problem has spread across Silicon Valley. Companies are generating more code than their staff can review, and security concerns are growing as a result.

What’s the issue?

The people responsible for finding flaws in AI-generated code are application security engineers, and there simply aren’t enough of them. "There aren't enough application security engineers globally to meet even the demands of American companies," remarked Joe Sullivan, an advisor to Costanoa Ventures.

This isn't merely a staffing shortage. AI coding tools perform better on personal computers than on secure company servers, so engineers are downloading entire codebases onto personal devices. A single lost laptop can then mean a significant loss of sensitive information.

Is additional AI truly the solution?

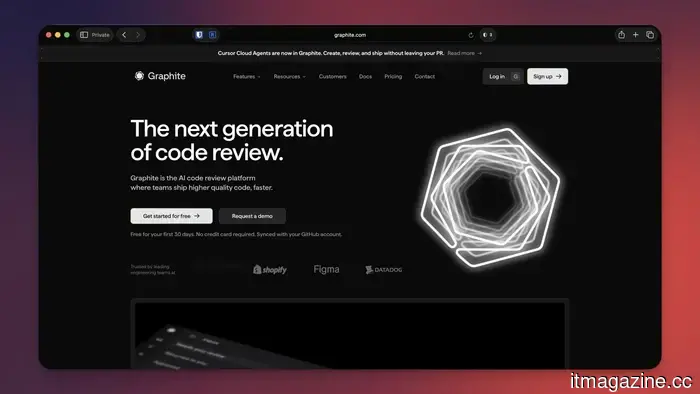

Predictably, Silicon Valley believes so. Companies like Anthropic, OpenAI, and Cursor are developing AI-powered review tools to catch mistakes in AI-generated code. Cursor has even acquired a code-reviewing startup to integrate this capability into its offerings.

As the head of engineering at Cursor stated, "The software development factory kind of broke. We’re working on reconfiguring the components in some way."

I remain skeptical. AI will eventually be able to identify errors in code, but human oversight will still be essential before final production releases. In one recent incident, AI-generated code caused an Amazon outage that led to over 100,000 lost orders and 1.6 million errors.

No company wants to face such outcomes, and I am uncertain whether AI code reviewers are the solution.

Rachit is a technology journalist with over seven years of experience covering the consumer technology field.
