Roblox now employs AI moderation to prevent harmful content from reaching you.

Roblox is enhancing its safety measures with a new AI moderation system

If you have ever used Roblox, you understand how chaotic and unpredictable the platform can be. Now, Roblox is leveraging that unpredictability to transform its content moderation approach.

The platform has introduced a real-time multimodal AI moderation system that analyzes not only individual items but also complete in-game scenes, identifying content that may have evaded previous checks.

How does Roblox’s new AI moderation function?

In contrast to older moderation tools that assess one element at a time, the new multimodal system evaluates an entire scene, including avatars, text, and 3D objects, to ascertain whether the overall combination violates Roblox’s Community Standards.

For instance, someone could use a free-drawing tool to create an offensive symbol, which would not be flagged during a single-object review but is clearly problematic in context.

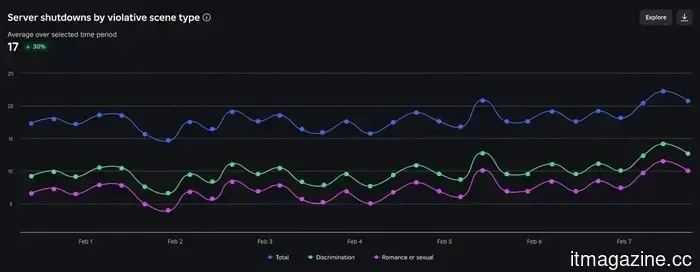

When the system detects repeated violations in a single game instance, it disables only that particular server instead of the entire game. Since launch, the system has taken down approximately 5,000 servers per day.
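The per-server shutdown behavior described above can be sketched in a few lines. This is only an illustration, not Roblox's implementation: the class name `ServerModerationTracker`, the threshold of three violations, and the ID strings are all assumptions, since the actual criteria have not been published.

```python
from collections import defaultdict

# Hypothetical threshold: the real value used by Roblox is not public.
VIOLATION_THRESHOLD = 3


class ServerModerationTracker:
    """Counts violations per server and shuts down only the offending server."""

    def __init__(self, threshold=VIOLATION_THRESHOLD):
        self.threshold = threshold
        self.violations = defaultdict(int)  # (game_id, server_id) -> count
        self.shut_down = set()

    def record_violation(self, game_id, server_id):
        """Record one violation; return True if this call shuts the server down."""
        key = (game_id, server_id)
        if key in self.shut_down:
            return False  # already offline, nothing more to do
        self.violations[key] += 1
        if self.violations[key] >= self.threshold:
            self.shut_down.add(key)
            return True
        return False

    def is_running(self, game_id, server_id):
        """Other servers of the same game stay up unless they hit the threshold."""
        return (game_id, server_id) not in self.shut_down
```

Keying the counter on the pair of game and server is what keeps the shutdown local: a second server running the same game keeps its own count and stays online.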

Roblox aims to monitor 100% of playtime with this system. In addition to server shutdowns, the platform is also creating tools to identify and remove individual offenders without negatively impacting others’ experiences.

What does this entail for Roblox creators?

Creators are kept informed as well. A new chart in the current Creator Dashboard displays the number of game servers that were shut down on any given day due to user misconduct.

Increases in this number can indicate a problem that needs attention, allowing creators the opportunity to review and modify aspects like custom emotes or in-game building tools before issues escalate.
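A creator who tracks these daily counts could flag unusual increases with a simple baseline comparison. The sketch below is a hypothetical helper, not a Creator Dashboard feature: the function name `find_spikes`, the seven-day window, and the 2x factor are assumptions chosen for illustration.

```python
from statistics import mean


def find_spikes(daily_shutdowns, window=7, factor=2.0):
    """Return indices of days whose shutdown count exceeds
    `factor` times the mean of the preceding `window` days."""
    spikes = []
    for i in range(window, len(daily_shutdowns)):
        baseline = mean(daily_shutdowns[i - window:i])
        if daily_shutdowns[i] > factor * baseline:
            spikes.append(i)
    return spikes


# Example: a quiet week followed by a sudden jump on day index 7.
counts = [2, 3, 2, 3, 2, 3, 2, 10, 3]
print(find_spikes(counts))  # [7]
```

A trailing-window mean is a deliberately simple choice; a game with strong weekday or weekend patterns might need a longer window or a same-weekday comparison.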

Introducing a new certification program for improved online gaming

Roblox is also addressing a broader industry challenge. In collaboration with Keywords Studios and Riot Games, it is co-developing the DLC Leadership Program aimed at online community managers and moderators.

Research psychologist Rachel Kowert, who is the Research Director at Games for Change, is overseeing the academic component. The objective is to create standardized, evidence-based training for individuals managing gaming communities, something that has previously been lacking in the industry.

Roblox has been strengthening its safety measures for some time, from implementing parental controls to age-based chat filters, and this recent update represents its most significant initiative to date.

Other articles

Chinese technology firms are shifting their focus to Hong Kong as restrictions from the US and EU become more stringent.

Mainland Chinese listings on the Hong Kong Stock Exchange increased by 153% in 2025. As Western markets contract, Hong Kong is emerging as China's technology launchpad.

Chinese technology firms are shifting their focus to Hong Kong as restrictions from the US and EU become more stringent.

Mainland Chinese listings on the Hong Kong Stock Exchange increased by 153% in 2025. As Western markets contract, Hong Kong is emerging as China's technology launchpad.

Vivo X300 Ultra is designed to take the place of your camera, rather than just serve as your smartphone.

Vivo has introduced the X300 Ultra, featuring a professional-grade camera system aimed at competing with standalone cameras.

Vivo X300 Ultra is designed to take the place of your camera, rather than just serve as your smartphone.

Vivo has introduced the X300 Ultra, featuring a professional-grade camera system aimed at competing with standalone cameras.

Midas secures $50 million in Series A funding.

Midas secures $50 million in Series A funding to expand its on-chain investment platform and introduce instant-redemption staked-liquidity infrastructure.

Midas secures $50 million in Series A funding.

Midas secures $50 million in Series A funding to expand its on-chain investment platform and introduce instant-redemption staked-liquidity infrastructure.

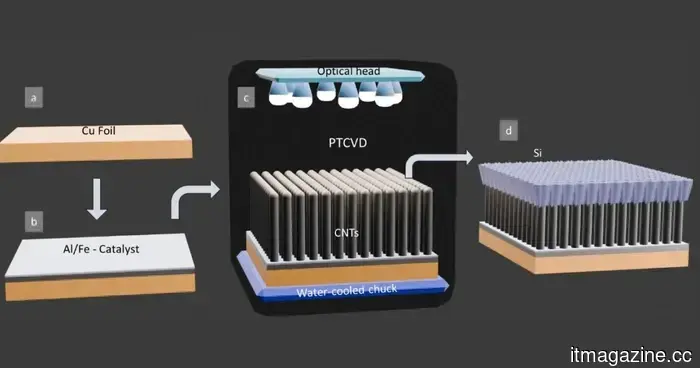

Battery technology that can hold more than nine times the energy is now available, making it ideal for your devices.

Researchers have created a novel silicon-carbon battery design that has the capability to store up to nine times more energy while maintaining stability over time.

Battery technology that can hold more than nine times the energy is now available, making it ideal for your devices.

Researchers have created a novel silicon-carbon battery design that has the capability to store up to nine times more energy while maintaining stability over time.

Rebellions has wrapped up a $400 million pre-IPO funding round, achieving a valuation of $2.34 billion.

Rebellions secures $400M in pre-IPO funding at a valuation of $2.34B, supported by South Korea's government growth fund, and unveils two new AI infrastructure products.

Rebellions has wrapped up a $400 million pre-IPO funding round, achieving a valuation of $2.34 billion.

Rebellions secures $400M in pre-IPO funding at a valuation of $2.34B, supported by South Korea's government growth fund, and unveils two new AI infrastructure products.

The judge has dismissed X's antitrust lawsuit against advertisers with prejudice.

A US judge determined that X did not present a valid antitrust claim against advertisers such as Mars, Unilever, and Nestlé, preventing the company from refiling.

The judge has dismissed X's antitrust lawsuit against advertisers with prejudice.

A US judge determined that X did not present a valid antitrust claim against advertisers such as Mars, Unilever, and Nestlé, preventing the company from refiling.

Roblox now employs AI moderation to prevent harmful content from reaching you.

Roblox's updated AI moderation system analyzes entire game scenes in real-time, detecting harmful content that many older systems would overlook, and is already closing down 5,000 servers each day.