Roblox now employs AI moderation to eliminate harmful content before it can reach users.

Roblox is enhancing its safety measures with a new AI moderation system.

If you’ve played Roblox, you know how chaotic and unpredictable the platform can be. That unpredictability is now driving an overhaul of how Roblox moderates content.

The platform has introduced a real-time multimodal AI moderation system that analyzes entire in-game scenes rather than individual items, so it can catch content that slipped past earlier checks.

How does Roblox’s new AI moderation work?

Unlike older moderation tools that assess one object at a time, the new multimodal system evaluates the whole scene, including avatars, text, and 3D objects, to determine whether the combination violates Roblox’s Community Standards.

For example, someone might use a free-drawing tool to create an offensive symbol, something that wouldn’t trigger a single-item check but is clearly problematic in context. The system is designed to catch cases like this.

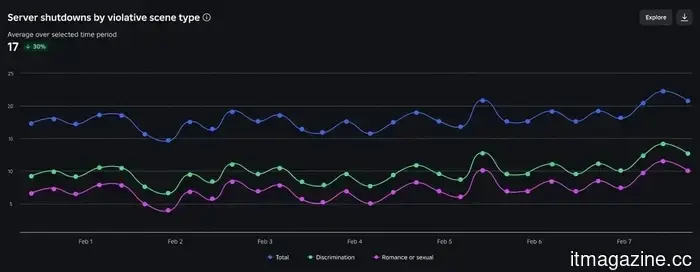

When the system identifies repeated violations in a single game instance, it shuts down only that server rather than the whole game. Since launch, it has been taking down about 5,000 servers a day.
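Roblox has not published how its shutdown logic works, so the following is only a minimal sketch of the idea described above: count violations per server and disable that one server once a limit is reached. The class name, method names, and the threshold of 3 are all hypothetical.

```python
from collections import defaultdict

# Hypothetical value: the article does not say how many violations count as "repeated".
VIOLATION_THRESHOLD = 3


class ServerModerator:
    """Tracks violations per server and disables only the offending server."""

    def __init__(self, threshold=VIOLATION_THRESHOLD):
        self.threshold = threshold
        self.violations = defaultdict(int)  # server_id -> violation count
        self.disabled = set()               # server_ids that have been shut down

    def report_violation(self, server_id):
        """Record one violation; return True if the server is now disabled."""
        if server_id in self.disabled:
            return True
        self.violations[server_id] += 1
        if self.violations[server_id] >= self.threshold:
            self.disabled.add(server_id)
        return server_id in self.disabled
```

Because the counts are keyed by server, other servers running the same game are unaffected when one of them is disabled.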

Roblox aims to monitor all playtime with this system. In addition to server suspensions, it is also working on tools to pinpoint and remove individual offenders without negatively affecting the experience for others.

What does this mean for Roblox creators?

Creators won’t be left in the dark. A new chart on the existing Creator Dashboard shows how many of a game’s servers were taken down each day for misconduct.

A sharp rise in this number can point to a problem worth investigating, giving creators a chance to review and adjust features such as custom emotes or in-game building tools before the situation escalates.
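For creators who want to check such numbers themselves, here is a minimal sketch of one way to flag an unusual day. It assumes the daily takedown counts have been copied into a plain list; the function name, the 7-day window, and the threshold are illustrative choices, not anything provided by Roblox or the Creator Dashboard.

```python
from statistics import mean, stdev


def find_spikes(daily_counts, window=7, z_threshold=3.0):
    """Return indexes of days whose count is far above the preceding window.

    A day is flagged when it exceeds the window's mean by more than
    z_threshold standard deviations. The deviation is floored at 1.0 so
    that a very flat baseline does not flag tiny changes.
    `window` must be at least 2, because stdev needs two data points.
    """
    spikes = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu = mean(baseline)
        sigma = max(stdev(baseline), 1.0)
        if daily_counts[i] > mu + z_threshold * sigma:
            spikes.append(i)
    return spikes


# Made-up example data: one week of normal days, then a jump on day index 7.
counts = [2, 3, 2, 3, 2, 3, 2, 20, 3]
print(find_spikes(counts))
```

A trailing window is used so that each day is compared only with the days before it, which matches how a creator would read the chart as new data arrives.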

A new certification initiative for improving online gaming

Roblox is also addressing a broader industry concern. In collaboration with Keywords Studios and Riot Games, it is co-developing the DLC Leadership Program for online community managers and moderators.

Research psychologist Rachel Kowert, who serves as Research Director at Games for Change, is overseeing the academic component. The aim is to establish standardized, evidence-based training for the people who manage gaming communities, something the industry has so far lacked.

Roblox has been strengthening its safety measures for some time, adding parental controls and age-based chat filters. This latest update is its most ambitious effort to date.
