Google's TurboQuant cuts AI memory usage sixfold, unsettling chip stocks.

On Tuesday, Google released a research blog post discussing a new compression algorithm for AI models. Shortly thereafter, memory stocks began to decline. Micron fell by 3 percent, Western Digital dropped 4.7 percent, and SanDisk decreased by 5.7 percent, as investors reassessed the actual memory requirements of the AI industry.

The algorithm, named TurboQuant, targets one of the most costly constraints in running large language models: the key-value cache, a high-speed data store that retains contextual information so the model does not have to recompute it for every new token it generates. As models handle longer inputs, the cache grows quickly, consuming GPU memory that could otherwise serve more users or support larger models. TurboQuant shrinks the cache to just 3 bits per value, down from the conventional 16 bits, reducing its memory footprint by at least a factor of six with, according to Google's benchmarks, no significant loss of accuracy.
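To make the scale of the cache concrete, here is a minimal back-of-the-envelope sketch. The model shape (32 layers, 8 key-value heads, head dimension 128, a 131,072-token context) is a hypothetical configuration chosen for illustration, not one taken from Google's paper, and the function `kv_cache_bytes` is likewise an illustrative helper.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value, batch_size=1):
    """Approximate key-value cache size in bytes (payload bits only)."""
    # Factor of 2: every layer stores one tensor of keys and one of values.
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value / 8

# Hypothetical model shape, chosen only for illustration.
config = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=131072)

GIB = 1024 ** 3
fp16_gib = kv_cache_bytes(bits_per_value=16, **config) / GIB
q3_gib = kv_cache_bytes(bits_per_value=3, **config) / GIB

print(f"16-bit cache: {fp16_gib:.1f} GiB")  # 16.0 GiB
print(f" 3-bit cache: {q3_gib:.1f} GiB")    # 3.0 GiB
```

Under these assumed dimensions, the cache for a single long sequence drops from 16 GiB to 3 GiB. The sketch counts payload bits only; it does not model any metadata or the accounting behind Google's reported figures.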

The research paper will be presented at ICLR 2026 and was written by Amir Zandieh, a research scientist at Google, and Vahab Mirrokni, a vice president and Google Fellow, alongside collaborators at Google DeepMind, KAIST, and New York University. It builds on two previous papers from the same group: QJL, published at AAAI 2025, and PolarQuant, which is set to appear at AISTATS 2026.

**How It Functions**

TurboQuant's key innovation is eliminating an overhead that makes most compression methods less efficient than their headline figures imply. Traditional quantization techniques shrink data vectors but must also store extra constants: normalization values needed to decompress the data accurately. These constants typically add an extra bit or two per number, partially negating the compression gains.
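The "extra bit or two" can be checked with simple arithmetic. The sketch below assumes a conventional block-wise scheme that stores two 16-bit constants (for example a scale and a zero point) per block of values; those parameters and the helper name `effective_bits_per_value` are assumptions for illustration, not details from the article.

```python
def effective_bits_per_value(value_bits, block_size,
                             constant_bits=16, constants_per_block=2):
    """Bits actually stored per value once per-block constants are amortized."""
    # Assumed layout: each block carries `constants_per_block` constants of
    # `constant_bits` bits each, shared by `block_size` quantized values.
    overhead = constant_bits * constants_per_block / block_size
    return value_bits + overhead

for block_size in (16, 32, 64):
    bits = effective_bits_per_value(3, block_size)
    print(f"block of {block_size}: {bits} bits per value")
# block of 16: 5.0 bits per value
# block of 32: 4.0 bits per value
# block of 64: 3.5 bits per value
```

Under these assumptions a nominal 3-bit format costs between 3.5 and 5 bits per value, which is the gap that a scheme with no stored normalization constants would close.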

TurboQuant sidesteps this issue with a two-stage approach. The first stage, named PolarQuant, transforms data vectors from standard Cartesian coordinates into polar coordinates, splitting each vector into magnitude and angular components. Because the angular distributions follow predictable patterns, the system can skip the costly per-block normalization step entirely. The second stage applies QJL, a method based on the Johnson-Lindenstrauss transform, which reduces the small residual error from the first stage to a single sign bit per dimension. The result is a representation that spends most of its bit budget on preserving the original data's meaning and only a minimal amount on error correction, with none spent on normalization constants.
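The two ideas can be illustrated with a toy sketch. This is not Google's implementation: the uniform angle codebook, the 2-D pairing, the function names, and the use of 20,000 random projections (far more than one per dimension, so that the estimate visibly converges) are all assumptions made for illustration. The sign-bit estimator relies on a standard identity for Gaussian projections, and it is applied here to a whole vector rather than to a first-stage residual.

```python
import math
import random

def to_polar(x, y):
    """Split a 2-D pair into magnitude and angle."""
    return math.hypot(x, y), math.atan2(y, x)

def quantize_angle(theta, bits):
    """Map an angle in [-pi, pi] to one of 2**bits uniform bins (toy codebook)."""
    levels = 2 ** bits
    step = 2 * math.pi / levels
    return int((theta + math.pi) // step) % levels

def dequantize_angle(code, bits):
    """Return the centre of the bin identified by `code`."""
    step = 2 * math.pi / (2 ** bits)
    return -math.pi + (code + 0.5) * step

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sign_sketch(vec, projections):
    """Keep only the sign of each random projection: one bit per projection."""
    return [1 if dot(row, vec) >= 0 else -1 for row in projections]

def estimate_dot(query, sketch, vec_norm, projections):
    """Estimate <query, vec> from sign bits, using the Gaussian identity
    E[sign(s.vec) * (s.query)] = sqrt(2/pi) * <query, vec> / |vec|."""
    total = sum(bit * dot(row, query) for bit, row in zip(sketch, projections))
    return math.sqrt(math.pi / 2) * vec_norm * total / len(projections)

# Stage 1 (toy): quantize only the angle; no per-block scale is stored.
radius, theta = to_polar(3.0, 4.0)
code = quantize_angle(theta, bits=3)
theta_hat = dequantize_angle(code, bits=3)
print(f"angle {theta:.3f} rad stored as code {code}, decoded as {theta_hat:.3f}")

# Stage 2 (toy): recover an inner product from sign bits alone.
rng = random.Random(0)
dim, num_projections = 8, 20000
key = [rng.gauss(0, 1) for _ in range(dim)]
query = [rng.gauss(0, 1) for _ in range(dim)]
projections = [[rng.gauss(0, 1) for _ in range(dim)]
               for _ in range(num_projections)]
sketch = sign_sketch(key, projections)
key_norm = math.sqrt(dot(key, key))
approx = estimate_dot(query, sketch, key_norm, projections)
exact = dot(key, query)
print(f"exact inner product {exact:.3f}, sign-bit estimate {approx:.3f}")
```

The angle round trip is off by at most half a bin width, and the sign-bit estimate approaches the exact inner product as the number of projections grows. Note that this toy estimator still needs the vector's norm, which is stored here as a single float.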

Google evaluated TurboQuant on five standard benchmarks for long-context language models, including LongBench, Needle in a Haystack, and ZeroSCROLLS, using open-source models from the Gemma, Mistral, and Llama families. At 3 bits, TurboQuant matched or exceeded KIVI, the standard baseline for key-value cache quantization, published at ICML 2024. In needle-in-a-haystack retrieval tasks, which test whether a model can find specific information within a lengthy passage, TurboQuant achieved perfect scores while compressing the cache by a factor of six. At 4-bit precision, the algorithm delivered up to an eightfold speedup in computing attention on Nvidia H100 GPUs compared with the uncompressed 32-bit baseline.

**Market Reaction**

The stock market responded quickly, and various analysts viewed the reaction as excessive. Wells Fargo analyst Andrew Rocha pointed out that TurboQuant directly challenges the cost curve for memory in AI systems. If widely adopted, it raises the question of how much memory capacity the industry actually requires. However, Rocha and others cautioned that the demand for AI memory remains robust and that compression algorithms have existed for years without fundamentally changing procurement volumes.

Still, the concerns are not unfounded. AI infrastructure investment is growing at unprecedented rates, with Meta recently committing up to $27 billion in a deal with Nebius for dedicated computing capacity, and Google, Microsoft, and Amazon collectively planning hundreds of billions in capital expenditures on data centers through 2026. A technology that cuts memory requirements sixfold won't lead directly to a similar reduction in spending, as memory is just one component of a data center's costs. However, it changes the ratio, and in an industry spending at this scale, even slight efficiency improvements add up quickly.

**The Efficiency Debate**

TurboQuant arrives at a time when the AI industry must confront the economics of inference. Training a model is a major one-time expense, but running it, which means serving millions of queries daily with acceptable speed and accuracy, is the ongoing cost that determines whether AI products are financially viable at scale. The key-value cache is central to this calculation: it is the bottleneck that limits how many concurrent users a single GPU can support and how long a context window a model can practically handle.
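A rough capacity estimate shows why the cache limits concurrency. The numbers below (an 80 GiB accelerator, 16 GiB of model weights, 65,536 cached values per token, a 32,768-token context) are hypothetical, and the sketch ignores activations, fragmentation, and any quantization metadata.

```python
def max_concurrent_sequences(gpu_bytes, weight_bytes, values_per_token,
                             context_len, bits_per_value):
    """How many full-length sequences fit in the memory left after the weights."""
    cache_per_sequence = values_per_token * context_len * bits_per_value / 8
    return int((gpu_bytes - weight_bytes) // cache_per_sequence)

GIB = 1024 ** 3
gpu_bytes = 80 * GIB              # hypothetical accelerator memory
weight_bytes = 16 * GIB           # hypothetical model weights
values_per_token = 2 * 32 * 8 * 128   # keys + values, assumed model shape
context_len = 32768

for bits in (16, 3):
    n = max_concurrent_sequences(gpu_bytes, weight_bytes,
                                 values_per_token, context_len, bits)
    print(f"{bits:>2}-bit cache: {n} concurrent sequences")
# 16-bit cache: 16 concurrent sequences
#  3-bit cache: 85 concurrent sequences
```

Under these assumptions the same GPU holds roughly five times as many full-length sequences, or alternatively the same number of sequences with much longer contexts.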

Compression methods like TurboQuant are part of a wider initiative to lower inference costs, alongside hardware advancements such as Nvidia’s Vera Rubin architecture and Google’s Ironwood TPUs. The crucial question is whether these efficiency gains will

Other articles

This might be our initial glimpse of Samsung's forthcoming Galaxy Z Fold 8 Wide.

Leaked CAD images of the Galaxy Z Fold 8 Wide show a foldable device that is wider and shorter, which Samsung is developing to compete directly with Apple's inaugural foldable iPhone.

This might be our initial glimpse of Samsung's forthcoming Galaxy Z Fold 8 Wide.

Leaked CAD images of the Galaxy Z Fold 8 Wide show a foldable device that is wider and shorter, which Samsung is developing to compete directly with Apple's inaugural foldable iPhone.

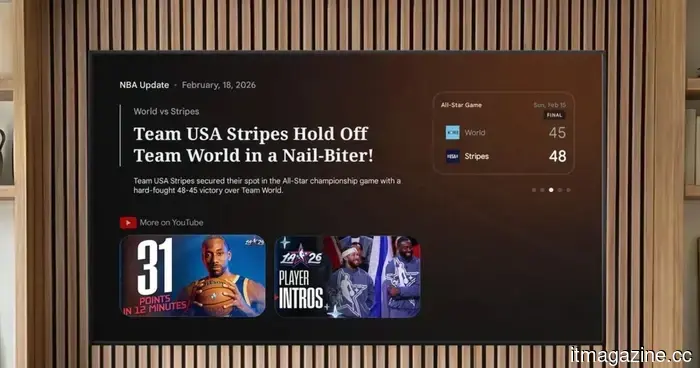

Gemini on Google TV can now provide answers to your questions, explain concepts, and offer sports updates.

Google is introducing Gemini functionalities to Google TV, allowing users to ask questions, delve into subjects, and keep up with sports updates, all in a single location.

Gemini on Google TV can now provide answers to your questions, explain concepts, and offer sports updates.

Google is introducing Gemini functionalities to Google TV, allowing users to ask questions, delve into subjects, and keep up with sports updates, all in a single location.

Enhance Your Home Gym with RITFIT’s Discounts This March

Strength training no longer requires a trip to the gym. RitFit's March sale on versatile workout equipment allows you to create your home gym. Whether for your personal use or as a gift for fellow fitness lovers, the M2 Smith Package is transforming workouts with its convenience.

Enhance Your Home Gym with RITFIT’s Discounts This March

Strength training no longer requires a trip to the gym. RitFit's March sale on versatile workout equipment allows you to create your home gym. Whether for your personal use or as a gift for fellow fitness lovers, the M2 Smith Package is transforming workouts with its convenience.

A new application aims to combat loneliness by encouraging individuals to disconnect from their phones and gather in the same physical space.

A startup named Friending has introduced a social platform focused on a concept that seems almost nostalgic in 2026: assisting individuals in forming friendships through face-to-face interactions. This app, located in Raleigh, North Carolina, links users through common interests.

A new application aims to combat loneliness by encouraging individuals to disconnect from their phones and gather in the same physical space.

A startup named Friending has introduced a social platform focused on a concept that seems almost nostalgic in 2026: assisting individuals in forming friendships through face-to-face interactions. This app, located in Raleigh, North Carolina, links users through common interests.

Meta and YouTube were held responsible in a groundbreaking trial concerning social media addiction.

A jury in Los Angeles determined that Meta and YouTube purposefully created addictive platforms that caused harm to a young user, marking the first verdict among 1,500 ongoing cases.

Meta and YouTube were held responsible in a groundbreaking trial concerning social media addiction.

A jury in Los Angeles determined that Meta and YouTube purposefully created addictive platforms that caused harm to a young user, marking the first verdict among 1,500 ongoing cases.

Google's TurboQuant reduces AI memory usage by six times, causing a stir in chip stock markets.

Google's TurboQuant algorithm reduces LLM key-value caches to 3 bits without any loss of accuracy. Following the announcement, memory stocks dropped within hours.

Google's TurboQuant reduces AI memory usage by six times, causing a stir in chip stock markets.

Google's TurboQuant algorithm reduces LLM key-value caches to 3 bits without any loss of accuracy. Following the announcement, memory stocks dropped within hours.

Google's TurboQuant reduces AI memory usage by six times, causing fluctuations in chip stocks.

Google's TurboQuant algorithm reduces LLM key-value caches to 3 bits without any loss in accuracy. Shortly after the announcement, memory stocks declined.