The upcoming Chrome update from Google is significant for Android users.

Google

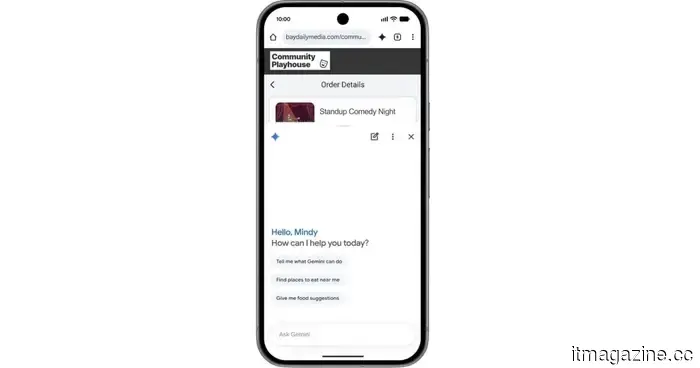

Gemini is becoming the focal point of Google's AI strategy, and that emphasis is now reaching deeper into Chrome on Android. Starting in June, Chrome will get a new set of Gemini-powered features with a straightforward goal: to turn your browser into a tool that helps you think, plan, and act, rather than one that merely displays web pages.

Chrome is about to get a lot more helpful, in the best way.

Central to this update is a more context-aware version of Gemini within Chrome. Google wants it to work like a genuine assistant that understands what you're viewing on a webpage. Rather than copying text into a different app or switching between tabs, you can tap a Gemini icon and ask questions about the page you're on. It will be able to break down lengthy articles, simplify complex subjects, and provide clearer explanations without requiring you to leave the page.

Google isn't stopping at summaries, though. Gemini is also coming to productivity features within Chrome. The idea is to connect it with Google's broader ecosystem so it can perform tasks for you. You will be able to add events to your calendar, save recipe ingredients to Keep, or pull specific information from Gmail, all without leaving the page you're browsing. The approach is less about searching and more about finishing small tasks in the context of what you're already doing, which makes it feel genuinely practical.

It aims to take care of the mundane aspects so you won't have to.

Nano Banana, Google's image generation and editing model, covers the more creative side. It lets users generate and customize visuals based on what they come across online. In an educational setting, it can convert complex text into visual summaries, which shows Google's intention for Gemini to adapt content to the way you prefer to consume it, rather than the other way around.

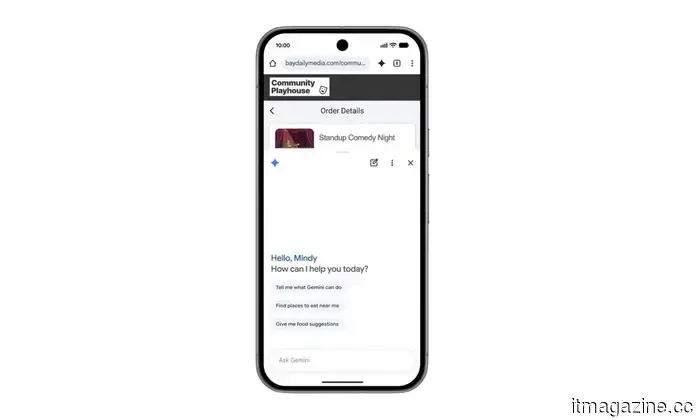

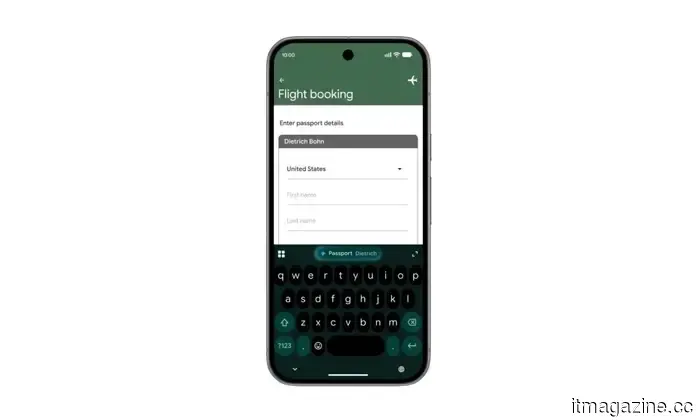

Chrome on Android will also get a feature called auto-browse, which is intended to handle repetitive or tedious tasks in the background. For instance, if you're planning to visit a location and need details such as parking information, you can simply share the event, and Chrome will gather the relevant details for you on its own. It's designed to make everyday browsing easier, even if it initially sounds somewhat futuristic.

Naturally, Google is placing a strong emphasis on security as well. These features are being built with safeguards against emerging threats such as prompt injection attacks, in which malicious content tries to trick an AI into taking actions the user never intended.

The rollout will begin in June on select devices running Android 12 or newer in the U.S. Auto-browse, meanwhile, will be limited to AI Pro and Ultra subscribers on compatible devices at launch. It's still early days, but Chrome is clearly evolving from a conventional browser into something that takes an active part in what you do online.

Shimul is a contributor at Digital Trends, with over five years of experience in the tech sector.

