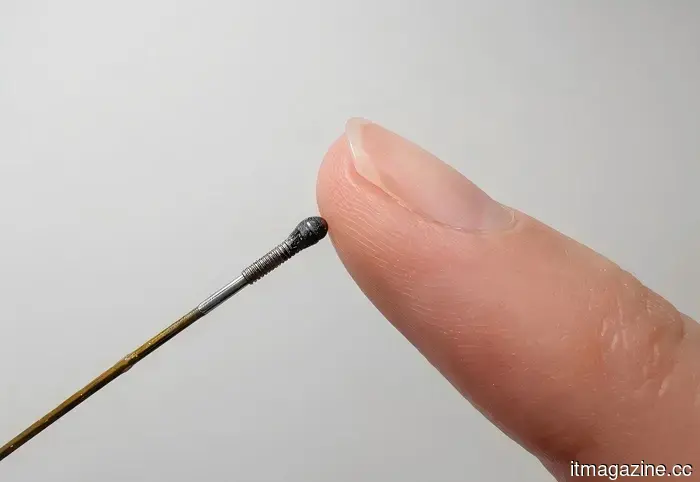

A sensor the size of a rice grain could provide robots with a gentle touch, helping them avoid breaking items.

Fauna Robotics

Robots are remarkably accurate, yet they often lack a gentle touch. A machine that can assemble a car with near-perfect precision may still exert too much force in sensitive settings where even the tiniest error is critical, such as inside a human eye or during intricate surgical procedures. To address this issue, researchers at Shanghai Jiao Tong University are creating a novel type of force sensor designed to help robots better "feel" what they are touching.

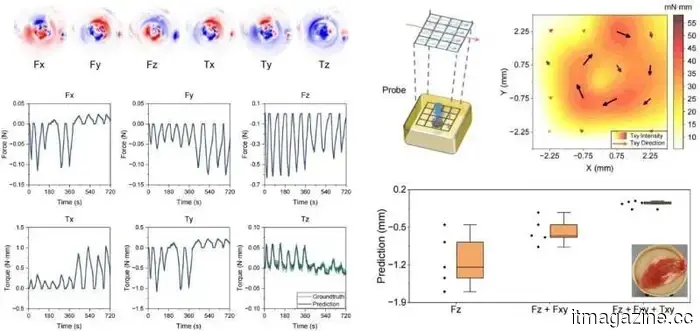

The sensor is tiny, measuring just 1.7 millimeters wide, about the size of a grain of rice, which allows it to be integrated into advanced surgical instruments. What sets it apart is that it does not depend on conventional electronics. Instead, it uses light to measure force in every direction, including pressing, sliding, and twisting.

Here's how it works: at the end of an optical fiber sits a soft material that changes shape slightly when it touches an object. This small deformation alters the way light passes through the sensor. The modified light pattern travels through optical fibers to a camera, which captures it as an image. Researchers then use a machine learning model to analyze these light patterns and convert them into accurate force measurements. In essence, the system learns to "interpret" touch through light alone, eliminating the need for numerous wires or multiple sensors crammed into such a compact space.
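To make the last step concrete, here is a minimal sketch of how a captured light pattern might be turned into a force estimate. The article does not say which machine learning model the researchers use, so this example substitutes a simple nearest-neighbor lookup as a stand-in. The function names, the 2x2 patterns, and the calibration values are all hypothetical and chosen only for illustration.

```python
import math

def pattern_distance(a, b):
    """Euclidean distance between two flattened intensity patterns."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def predict_force(pattern, calibration, k=1):
    """Average the force vectors of the k calibration patterns closest to `pattern`."""
    nearest = sorted(calibration, key=lambda pair: pattern_distance(pattern, pair[0]))[:k]
    n_axes = len(nearest[0][1])
    return tuple(sum(force[i] for _, force in nearest) / len(nearest) for i in range(n_axes))

# Hypothetical calibration set: (flattened 2x2 intensity pattern, (fx, fy, fz) in newtons).
# A real system would record many such pairs by pressing the sensor with known forces.
CALIBRATION = [
    ((0.5, 0.5, 0.5, 0.5), (0.0, 0.0, 0.0)),  # no contact
    ((0.8, 0.8, 0.8, 0.8), (0.0, 0.0, 0.2)),  # straight press
    ((0.9, 0.5, 0.9, 0.5), (0.1, 0.0, 0.1)),  # press combined with a sideways slide
]

# A new camera frame that looks most like the "straight press" example.
estimate = predict_force((0.78, 0.8, 0.82, 0.8), CALIBRATION, k=1)
print(estimate)
```

The point of the sketch is the shape of the pipeline: known forces are paired with the light patterns they produce, and a new pattern is read by comparing it with those examples. A practical model would work on full camera images and far more calibration data.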

Why robots require the ability to feel, not just see

Modern surgical imaging has advanced significantly, allowing surgeons to view the human body with remarkable clarity. What they still lack, particularly during minimally invasive surgery, is the ability to feel what their instruments are touching. A surgeon may see an area clearly on a screen, but telling healthy tissue from problematic areas often relies on experience and intuition rather than feedback from the tools themselves.

This new sensor aims to tackle that challenge. In experiments, researchers tested it on a soft gelatin block with a hard sphere concealed beneath the surface, simulating a tumor within human tissue. The sensor detected the concealed object by sensing variations in stiffness as it moved across the surface. In robotic surgeries, where physicians operate in extremely confined spaces and cannot always rely on direct touch, this kind of tactile feedback could improve safety and precision while reducing reliance on guesswork.
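The gelatin test can be illustrated with a rough sketch of a stiffness scan. It assumes the probe presses to the same depth at each position and treats stiffness as force divided by indentation depth, then flags positions that are much stiffer than the typical reading. The article does not describe the researchers' actual analysis, so the function name, the threshold ratio, and the readings below are all hypothetical.

```python
from statistics import median

def find_stiff_spots(positions_mm, forces_n, indentation_mm, ratio=1.5):
    """Return positions whose estimated stiffness exceeds `ratio` times the median."""
    # Stiffness estimate in newtons per millimeter at each probed position.
    stiffness = [f / indentation_mm for f in forces_n]
    baseline = median(stiffness)
    return [p for p, s in zip(positions_mm, stiffness) if s > ratio * baseline]

# Hypothetical scan: probe positions along the surface and the force read at each one.
positions = [0, 2, 4, 6, 8, 10]
forces = [0.10, 0.11, 0.10, 0.24, 0.26, 0.11]

stiff = find_stiff_spots(positions, forces, indentation_mm=0.5)
print(stiff)
```

Using the median as the baseline keeps a small hard region from skewing the reference value, which is why the two high readings stand out against the softer surroundings.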

There’s more work ahead before it can be used in operating rooms

Currently, these findings serve more as proof of concept than as a fully developed medical advancement. The researchers acknowledge that there is still much to be done. Manufacturing sensors this small with consistent quality at scale is considerably more complex than creating a single working prototype in a lab setting. Additionally, the setup process needs to be streamlined and made more reliable before it can be feasibly implemented in hospitals. Furthermore, the sensor has yet to undergo the rigorous long-term stress testing required for medical devices to earn doctors' trust during real procedures.

Nonetheless, the underlying concept of the technology is genuinely promising. Rather than depending on several complicated sensing components, the system employs a more straightforward design centered around a single optical channel and a camera. This simplified structure often allows for easier improvements and scaling over time as engineering progresses. The team is currently focused on integrating the sensor into actual robotic surgical tools and testing it in more realistic operating room environments. While a sensor the size of a grain of rice that can "feel" may appear to be a minor innovation, it could prove to be extremely valuable for surgeons maneuvering robotic instruments through spaces smaller than a fingernail.

Shimul is a contributor at Digital Trends, boasting over five years of experience in the tech industry.

