Hugging Face and ClawHub have been compromised with hundreds of malicious AI models and agent skills as supply chain attacks target AI infrastructure.

**Summary**: Hugging Face and ClawHub, the leading repositories for AI models and agent skills, have been compromised and now host hundreds of malicious entries that steal credentials, open backdoors, and hijack AI agents for cryptocurrency mining.

The two most prominent software supply chains in AI have both been systematically breached. Hugging Face, a repository housing millions of machine learning models used by nearly every AI company, contains numerous malicious models capable of executing arbitrary code on users' machines when the models are loaded. At the same time, ClawHub, the public registry for OpenClaw’s AI agent skills, has been targeted by a coordinated campaign that introduced 341 malicious skills aimed at credential theft, reverse shell access, and hijacking AI agents for cryptocurrency mining.

Although the methods of attack differ, the underlying logic remains the same: both exploit the inherent trust that developers place in shared repositories and utilize the infrastructure developed for rapid AI advancement as a vector for compromise.

**The Models**: Hugging Face has known about malicious models on its platform since at least 2024, following independent discoveries by JFrog and ReversingLabs. The problem has grown since then. Protect AI, working with Hugging Face to scan its model library, has analyzed over four million models and found about 352,000 unsafe or suspicious issues across 51,700 models. JFrog identified over 100 models capable of arbitrary code execution. One evasion technique, referred to as “nullifAI,” takes advantage of Python’s pickle serialization format, which is commonly used for packaging machine learning models: attackers embed harmful Python code within the pickle byte stream and compress the file with 7z instead of the default ZIP format, which evades detection by Hugging Face’s PickleScan tool.
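To make the pickle risk concrete, here is a minimal sketch of how a static scanner can inspect a pickle byte stream for imports of dangerous modules without ever loading it. It uses only the standard library's `pickletools`. The function name, the `SUSPICIOUS_MODULES` list, and the `BenignDemo` class are illustrative assumptions, not PickleScan's actual rules or code, and the string-tracking heuristic would miss more obfuscated streams.

```python
import os
import pickle
import pickletools

# Illustrative deny list (an assumption, not PickleScan's real list).
# "posix" and "nt" are the platform modules that back "os".
SUSPICIOUS_MODULES = {"os", "posix", "nt", "subprocess", "builtins", "socket", "sys"}


def find_suspicious_imports(data: bytes) -> list[str]:
    """Walk the opcodes of a pickle and report imports from deny-listed modules.

    The stream is only disassembled, never unpickled, so nothing in it runs.
    """
    findings = []
    recent_strings = []  # string operands seen so far, used for STACK_GLOBAL
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name == "GLOBAL":
            # Older protocols carry "module name" as a single operand.
            module, _, name = arg.partition(" ")
        elif opcode.name == "STACK_GLOBAL" and len(recent_strings) >= 2:
            # Protocol 4+ pushes module and name as two strings first.
            # Heuristic: assume they are the two most recent string operands.
            module, name = recent_strings[-2], recent_strings[-1]
        else:
            if isinstance(arg, str):
                recent_strings.append(arg)
            continue
        if module.split(".")[0] in SUSPICIOUS_MODULES:
            findings.append(f"{module}.{name}")
    return findings


class BenignDemo:
    """Stand-in for a payload: unpickling it would call os.getcwd(), which is harmless."""

    def __reduce__(self):
        return (os.getcwd, ())


if __name__ == "__main__":
    print(find_suspicious_imports(pickle.dumps({"weights": [1.0, 2.0]})))
    print(find_suspicious_imports(pickle.dumps(BenignDemo())))
```

A real payload would swap the harmless callable for something like a shell command, which is why a scanner flags the import itself rather than trying to judge intent.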

The malicious payloads are overt. Security analysts have documented models that open reverse shells to hard-coded IP addresses, giving attackers direct access to the machine of anyone who loads the model. Others steal credentials, exfiltrate environment variables, or download additional malware. A data scientist who downloads what seems like a legitimate model for research or production can unintentionally hand control of their machine to an attacker.
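On the loading side, one mitigation described in the Python documentation is to subclass `pickle.Unpickler` and override `find_class` so that only an explicit allow list of globals can be resolved. The sketch below follows that pattern. The allow list contents and the names `RestrictedUnpickler`, `restricted_loads`, and `BenignDemo` are assumptions for illustration, and a real model file would need a longer, carefully reviewed list.

```python
import collections
import io
import os
import pickle

# Illustrative allow list: only these (module, name) pairs may be imported.
ALLOWED_GLOBALS = {("collections", "OrderedDict")}


class RestrictedUnpickler(pickle.Unpickler):
    """Unpickler that refuses to resolve any global outside the allow list."""

    def find_class(self, module, name):
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"blocked global: {module}.{name}")


def restricted_loads(data: bytes):
    """Drop-in replacement for pickle.loads that applies the allow list."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


class BenignDemo:
    """Stand-in for a payload: unpickling it would call os.getcwd()."""

    def __reduce__(self):
        return (os.getcwd, ())


if __name__ == "__main__":
    # Plain containers and allow-listed classes load normally.
    print(restricted_loads(pickle.dumps(collections.OrderedDict(a=1))))
    try:
        restricted_loads(pickle.dumps(BenignDemo()))
    except pickle.UnpicklingError as exc:
        print("rejected:", exc)
```

The design choice here is to fail closed: anything not explicitly allowed is rejected before the callable is ever looked up, so the payload never gets a chance to run.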

Hugging Face is addressing the issue by collaborating with JFrog and Wiz to enhance scanning capabilities. JFrog’s integration has reduced false positives in detecting malicious models by 96%. Nonetheless, the platform’s open architecture, which is its main attraction to the AI community, also underlies its vulnerability, as anyone can upload models. The scanning process detects known patterns, while those behind the nullifAI technique created their method specifically to avoid detection.
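Because pattern-based scanning can be sidestepped by changing the container format, one defensive option is to treat any model archive that is not in the expected format as untrusted rather than as clean. The following is a rough sketch of that fail-closed check, assuming the expected container is ZIP. The function names are hypothetical, and the 7z signature bytes are the format's published magic number.

```python
import io
import zipfile

# Leading signature bytes of a 7z archive.
SEVEN_ZIP_MAGIC = b"7z\xbc\xaf\x27\x1c"


def classify_container(data: bytes) -> str:
    """Return "7z", "zip", or "unknown" for the given file contents."""
    if data.startswith(SEVEN_ZIP_MAGIC):
        return "7z"
    if zipfile.is_zipfile(io.BytesIO(data)):
        return "zip"
    return "unknown"


def should_quarantine(data: bytes) -> bool:
    """Fail closed: anything that is not the expected ZIP container is held back."""
    return classify_container(data) != "zip"


if __name__ == "__main__":
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("data.pkl", b"placeholder")
    print(classify_container(buf.getvalue()), should_quarantine(buf.getvalue()))
    print(classify_container(SEVEN_ZIP_MAGIC + b"\x00" * 32))
```

A check like this does not detect malicious content by itself; it only ensures that files the scanner cannot unpack are not silently passed through.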

**The Skills**: ClawHub, the registry for OpenClaw’s AI agent ecosystem, encounters a different yet related challenge. OpenClaw has expanded to 3.2 million users and secured collaborations with OpenAI, but its skill registry has caught the attention of attackers who recognize that a malicious skill executed by an AI agent can access various resources, including databases, APIs, internal networks, and cloud credentials.

Koi Security audited all 2,857 skills on ClawHub and identified 341 malicious entries. Of those, 335 were linked to a single coordinated operation termed “ClawHavoc.” Separately, Snyk’s ToxicSkills research assessed the broader ecosystem and reported that 36% of all AI agent skills have security vulnerabilities, with around 900 skills, nearly 20% of the total, deemed malicious. Thirty skills authored by a single individual were covertly commandeering AI agents to mine cryptocurrency.

The attacks on ClawHub are particularly dangerous because of how AI agents are built. The emergence of the Model Context Protocol (MCP) and similar standards in the agentic era has created a new category of software supply chain, one in which AI systems autonomously select and execute tools from external registries. A compromised skill does not require a user to install it; it only needs an AI agent to select the skill as part of a workflow, at which point the malicious code runs with the agent’s permissions.
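One way to narrow this exposure is to stop the agent from running any skill whose contents have not been reviewed and pinned in advance. The sketch below illustrates that idea with a SHA-256 allow list. The skill name, the `PINNED_SKILLS` mapping, and `is_skill_trusted` are hypothetical and do not reflect any ClawHub or OpenClaw API.

```python
import hashlib
import hmac

# Hypothetical pin list: skill name -> SHA-256 digest recorded when the skill
# was reviewed. The digest is computed inline here only to keep the example
# self-contained; in practice it would be stored alongside the review record.
REVIEWED_BUNDLE = b"def run(notes):\n    return notes[:200]\n"
PINNED_SKILLS = {
    "summarize-notes": hashlib.sha256(REVIEWED_BUNDLE).hexdigest(),
}


def is_skill_trusted(name: str, bundle: bytes) -> bool:
    """Return True only if the skill is pinned and its bytes match the pin."""
    expected = PINNED_SKILLS.get(name)
    if expected is None:
        return False  # unknown skills are rejected by default
    actual = hashlib.sha256(bundle).hexdigest()
    return hmac.compare_digest(actual, expected)


if __name__ == "__main__":
    print(is_skill_trusted("summarize-notes", REVIEWED_BUNDLE))
    print(is_skill_trusted("summarize-notes", REVIEWED_BUNDLE + b"# tampered"))
    print(is_skill_trusted("free-gpu-miner", b"anything"))
```

Pinning trades convenience for control: the agent can no longer pick up new or updated skills automatically, but a registry compromise cannot change what it executes.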

**The Pattern**: The compromises at Hugging Face and ClawHub reflect an AI-specific example of a supply chain attack pattern that has been escalating within the software industry. In March 2026, the LiteLLM package on PyPI was breached, potentially exposing 500,000 credentials, including API keys for Meta, OpenAI, and Anthropic. Meta halted its AI data work following the breach due to risks to training secrets. In April, a Bitwarden CLI package on npm was hijacked for 90 minutes with a payload designed to collect credentials from AI coding tools such as Claude Code, Cursor, Codex CLI, and Aider. A few days later, the PyTorch Lightning package was compromised for 42 minutes with a credential-stealing payload linked to the “Mini Shai-Hulud” campaign.

The European Commission was also breached after attackers tampered with Trivy, an open-source security scanning tool

Other articles

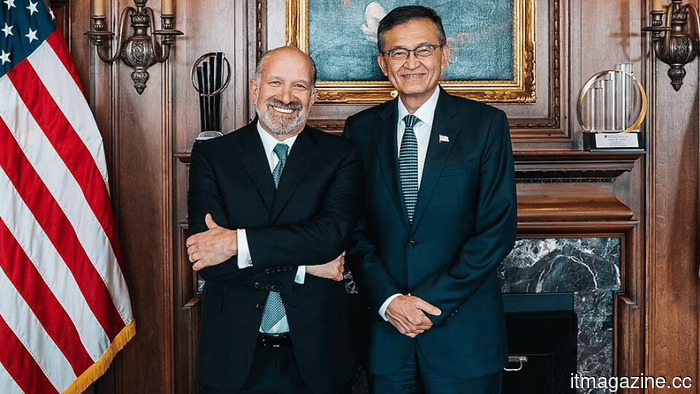

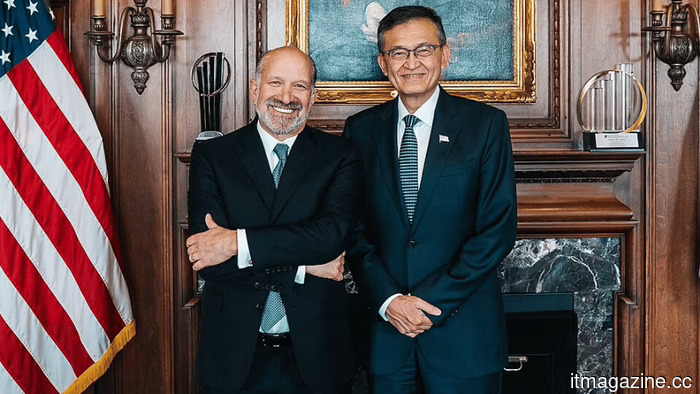

Intel's stock has tripled during Lip-Bu Tan's leadership, as relationships with Trump, Musk, and Apple surpass the company's manufacturing execution, which still requires improvement.

Under CEO Lip-Bu Tan, Intel's stock has increased threefold, gaining the approval of Trump, collaborating with Musk on Terafab, and drawing the interest of Apple. However, the factories still fall short compared to TSMC.

Intel's stock has tripled during Lip-Bu Tan's leadership, as relationships with Trump, Musk, and Apple surpass the company's manufacturing execution, which still requires improvement.

Under CEO Lip-Bu Tan, Intel's stock has increased threefold, gaining the approval of Trump, collaborating with Musk on Terafab, and drawing the interest of Apple. However, the factories still fall short compared to TSMC.

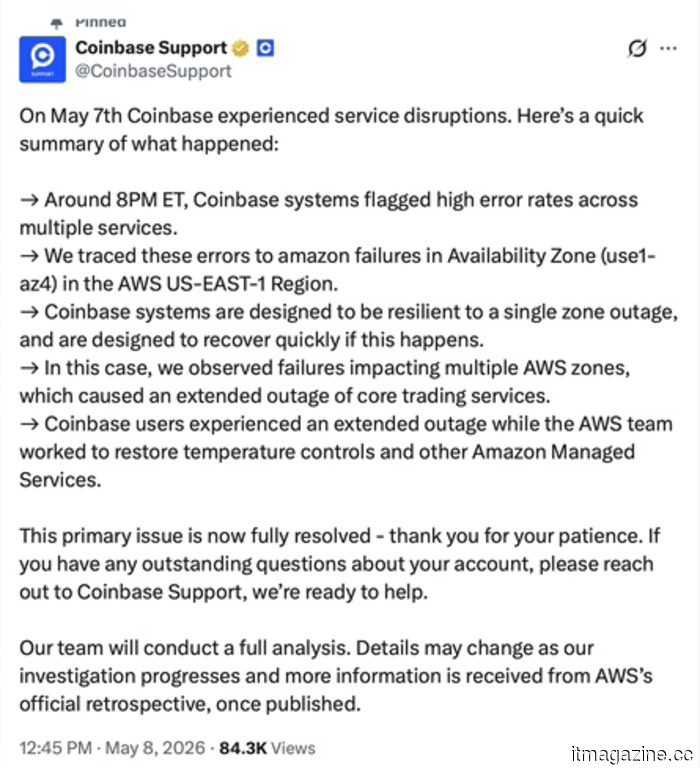

On Monday, Coinbase laid off 700 employees, reported a loss of $394 million on Thursday, and experienced a blackout on Friday due to an overheating data center.

Coinbase was unavailable for seven hours following an overheating incident at an AWS data center in Virginia. This disruption came at the end of a week that included 700 job cuts and a quarterly loss of $394 million.

On Monday, Coinbase laid off 700 employees, reported a loss of $394 million on Thursday, and experienced a blackout on Friday due to an overheating data center.

Coinbase was unavailable for seven hours following an overheating incident at an AWS data center in Virginia. This disruption came at the end of a week that included 700 job cuts and a quarterly loss of $394 million.

This excessively designed smartwatch features two cameras and transforms into an action camera.

The Huawei Watch Kids X1 Pro features front and rear cameras, a removable body akin to an action camera, location tracking, video calling capabilities, and is equipped with an 850mAh battery.

This excessively designed smartwatch features two cameras and transforms into an action camera.

The Huawei Watch Kids X1 Pro features front and rear cameras, a removable body akin to an action camera, location tracking, video calling capabilities, and is equipped with an 850mAh battery.

Hugging Face and ClawHub were breached, leading to the infiltration of hundreds of harmful AI models and agent capabilities as supply chain attacks focus on AI infrastructure.

Hugging Face is home to 352,000 issues related to unsafe models. ClawHub's repository includes 341 skills associated with malicious AI agents. The AI supply chain has become the most appealing target in the realm of software security.

Hugging Face and ClawHub were breached, leading to the infiltration of hundreds of harmful AI models and agent capabilities as supply chain attacks focus on AI infrastructure.

Hugging Face is home to 352,000 issues related to unsafe models. ClawHub's repository includes 341 skills associated with malicious AI agents. The AI supply chain has become the most appealing target in the realm of software security.

Under Lip-Bu Tan's leadership, Intel's stock has tripled, as relationships with Trump, Musk, and Apple surpass the company's ongoing need for improved manufacturing execution.

Under CEO Lip-Bu Tan, Intel's stock has increased threefold, with Tan winning the favor of Trump, collaborating with Musk on Terafab, and drawing in Apple. However, the factories still fall short compared to TSMC.

Under Lip-Bu Tan's leadership, Intel's stock has tripled, as relationships with Trump, Musk, and Apple surpass the company's ongoing need for improved manufacturing execution.

Under CEO Lip-Bu Tan, Intel's stock has increased threefold, with Tan winning the favor of Trump, collaborating with Musk on Terafab, and drawing in Apple. However, the factories still fall short compared to TSMC.

The MacBook Neo was such a tremendous success for Apple that it could soon lead to a price increase.

To increase production to 10 million units, new A18 Pro chips must be sourced from TSMC at full price instead of using binned rejects, while DRAM prices have risen by 57% and 3nm capacity has become constrained.

The MacBook Neo was such a tremendous success for Apple that it could soon lead to a price increase.

To increase production to 10 million units, new A18 Pro chips must be sourced from TSMC at full price instead of using binned rejects, while DRAM prices have risen by 57% and 3nm capacity has become constrained.

Hugging Face and ClawHub faced compromises involving hundreds of harmful AI models and agent skills as supply chain attacks aimed at AI infrastructure.

Hugging Face has 352,000 issues related to unsafe models. ClawHub's registry includes 341 skills of malicious AI agents. Currently, the AI supply chain is the most appealing target in the realm of software security.