The European Commission officially files charges against Meta.

The European Commission has released preliminary findings indicating that Meta has breached its obligations under the Digital Services Act (DSA) by failing to prevent minors from accessing its platforms. The findings, made public on Wednesday, mark the first time the Commission has applied this specific accusation, failure to prevent underage access, to a mainstream social media platform; until now it had been directed only at adult content services.

This distinction is significant. In March 2026, the Commission issued identical preliminary findings against four adult sites (Pornhub, Stripchat, XNXX, and XVideos) for permitting minors to access their content with a simple click to confirm they were over 18. The action against Meta applies the same legal standard to a platform that not only attracts child users but also allows them to create accounts. Although Meta requires users to be at least 13 years old, its age verification for Facebook and Instagram depends predominantly on self-declaration, a method that independent research has repeatedly found to be ineffective.

The finding is part of broader formal proceedings that the Commission initiated against Meta in May 2024 concerning child protection obligations under DSA Articles 28, 34, and 35. This specific finding focuses on Article 28(1), which requires platforms to implement appropriate and proportionate measures to ensure a high level of safety, privacy, and security for children and to prevent those under the national minimum age from accessing the service.

The timing of the finding is intentional. Just two weeks prior, on April 15, European Commission President Ursula von der Leyen introduced a privacy-focused EU age verification application that employs zero-knowledge proof technology, enabling users to confirm their age without disclosing personal data to platforms. Von der Leyen made it clear: “Online platforms can easily use our age verification app, leaving no room for excuses. We will have zero tolerance for companies that do not respect our children’s rights.”

By issuing the finding under Article 28(1) against Meta two weeks after the app’s launch, the Commission signals that the argument of technical infeasibility—that robust age verification is unattainable without compromising user privacy—is no longer valid. The EU has provided a solution, which Meta has failed to implement, resulting in the preliminary finding.

The app itself has had problems: security researchers showed it could be circumvented within two minutes of its launch. Even so, the Commission's enforcement approach seems unaffected by this setback. The relevant question, from a regulatory perspective, is not whether the app is flawless but whether Meta has adopted any similarly robust alternatives. Its ongoing reliance on self-declaration and AI-based age assessment does not appear to meet the necessary standards.

Research conducted by the Interface-EU think tank in 2025 tested the sign-up processes of major platforms used by children in the EU, including Instagram. The findings were clear: all platforms allowed a simulated 14-year-old to create an account simply by entering a false date of birth, with no document verification or external checks. Meta’s explanation of its methods, shared with TechCrunch when the formal proceedings began in 2024, was that it relies on self-reported age alongside AI assessments to detect users who may have lied, and that it allows suspected underage accounts to be reported.

The company stated that internal tests showed it had prevented 96% of teens from changing their Instagram birthdays from under 18 to over 18. However, the Commission’s preliminary finding suggests it considers these measures inadequate under the DSA standard of “appropriate and proportionate” measures.

The precedent established by the findings on adult platforms is revealing. The Commission specifically noted that these platforms allowed minors to access their services “by a simple click confirming they are over 18.” The enforced standard is not perfection but rather the replacement of easily evaded self-declaration with measures that are significantly more difficult to bypass. By this standard, Facebook and Instagram’s current practices seem to fall short in the same manner.

What are the next steps? Preliminary findings do not equate to a final decision on non-compliance. Meta now has the opportunity to review the Commission’s evidence and submit a response in writing. The company may also present proposed remedies, with the European Board for Digital Services being consulted concurrently.

If the Commission's views are ultimately upheld and a decision on non-compliance is made, Meta could face a penalty of up to 6% of its global annual revenue, which could amount to billions of dollars based on Meta’s 2025 earnings. The Commission may also impose ongoing penalty payments to ensure compliance.

There is no set timeframe for the completion of the proceedings. However, Wednesday’s action is part of a broader acceleration in enforcement that also included preliminary findings on addictive design and recommendation systems. For Meta, the overarching message from Brussels is clear: the era of negotiated goodwill is coming to an end. The Commission is now leveling formal charges on multiple fronts at once, and the potential fines are becoming a reality. As of the time of publication, Meta had not publicly commented.

Other articles

Amadeus has reached an agreement to purchase IDEMIA's public security division for $1.2 billion.

Amadeus has reached an agreement to acquire IDEMIA Public Security for $1.2 billion, thereby enhancing its airport technology portfolio with biometric border control and identity systems for law enforcement.

Amadeus has reached an agreement to purchase IDEMIA's public security division for $1.2 billion.

Amadeus has reached an agreement to acquire IDEMIA Public Security for $1.2 billion, thereby enhancing its airport technology portfolio with biometric border control and identity systems for law enforcement.

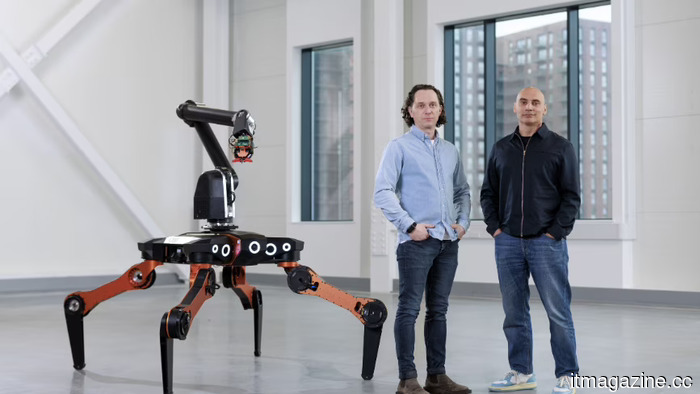

All3 secures $25 million to automate construction using legged robots.

All3 secures $25 million in seed funding, led by RTP Global, to implement legged robots and AI software at construction sites in Germany, aiming to address the housing crisis.

All3 secures $25 million to automate construction using legged robots.

All3 secures $25 million in seed funding, led by RTP Global, to implement legged robots and AI software at construction sites in Germany, aiming to address the housing crisis.

After 12 hours of discussions, the EU and Parliament could not reach an agreement on changes to the AI Act, delaying the deal until next month.

After 12 hours of discussions on April 29, EU member states and the European Parliament were unable to reach a consensus on amendments to the AI Act, with May remaining the final opportunity before the August 2026 deadline.

After 12 hours of discussions, the EU and Parliament could not reach an agreement on changes to the AI Act, delaying the deal until next month.

After 12 hours of discussions on April 29, EU member states and the European Parliament were unable to reach a consensus on amendments to the AI Act, with May remaining the final opportunity before the August 2026 deadline.

Scientists from Perm Polytechnic and China have created a neural network that predicts underground pressure with an accuracy of 99.5%.

Drilling wells has long ceased to be just "drilling a hole in the ground." Today, it is a confrontation between man and rock, where mistakes can have serious consequences in the literal sense.

Scientists from Perm Polytechnic and China have created a neural network that predicts underground pressure with an accuracy of 99.5%.

Drilling wells has long ceased to be just "drilling a hole in the ground." Today, it is a confrontation between man and rock, where mistakes can have serious consequences in the literal sense.

Scientists from Perm Polytechnic and China have created a neural network that predicts underground pressure with 99.5% accuracy.

Drilling wells has long ceased to be just "drilling a hole in the ground." Today, it is a confrontation between man and rock, where mistakes can have serious consequences in the literal sense.

Scientists from Perm Polytechnic and China have created a neural network that predicts underground pressure with 99.5% accuracy.

Drilling wells has long ceased to be just "drilling a hole in the ground." Today, it is a confrontation between man and rock, where mistakes can have serious consequences in the literal sense.

The European Commission officially accuses Meta.

The EU has released initial results indicating that Meta violated DSA regulations by not preventing underage children from accessing Facebook and Instagram, marking the first accusation of this kind against a major social media platform.

The European Commission officially accuses Meta.

The EU has released initial results indicating that Meta violated DSA regulations by not preventing underage children from accessing Facebook and Instagram, marking the first accusation of this kind against a major social media platform.

The European Commission officially files charges against Meta.

The EU has released initial findings indicating that Meta violated DSA regulations by not preventing underage users from accessing Facebook and Instagram, marking the first accusation of this kind against a major social media platform.