Sony's table tennis robot prompted me to consider the implications of AI having a physical form.

Initially, I wanted to write off Sony’s table tennis robot as just another costly lab demonstration. Sure, a machine that can rally with top players is impressive, but it looked like a showcase staged to impress executives, with the applause arranged in advance.

However, table tennis presents a tougher challenge than it appears. The ball is small, fast, spinning, and unpredictable, changing direction the instant it hits the table. Sony’s robot is confronted with something more challenging than pure calculation; it must see, predict, and react before the rally is lost.

Sony pitted Ace against five elite players and two professionals under official competition rules, and the robot secured several victories.

The key takeaway is what Ace had to contend with during these matches: quick, high-spin shots that altered their course post-bounce and penalized even the slightest delays. In simpler terms, Ace wasn't merely returning the ball; it was interpreting movement, forecasting actions, and responding before the rally slipped away.

AI is advancing beyond conventional spaces.

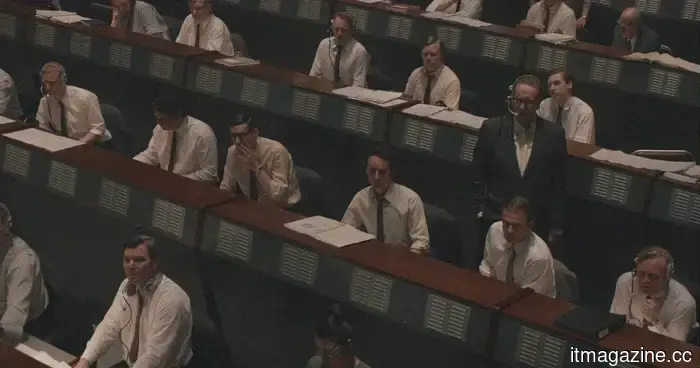

The typical “AI defeats human” narrative diminishes the actual capabilities Ace is demonstrating. We've seen this story play out in cleaner contexts before. In 1997, IBM’s Deep Blue defeated Garry Kasparov, and that landmark continues to loom over the ongoing narrative of human versus machine intellect.

Chess, despite its strategic complexity, is manageable for computers. The board remains stable, the pieces are stationary, and a knight won’t return at 60 miles per hour due to an awkward angle of impact.

Sony’s robot points to a different evolution. When AI must be physically active, intelligence turns into a matter of timing. The system needs to perceive its environment swiftly enough to respond effectively. That makes the technology more useful, but also much harder to keep neatly contained.

The physical aspect alters the problem.

This is where the table tennis demonstration gains deeper significance. A robot capable of tracking spin, predicting movement, and adjusting its reaction in real time doesn’t automatically qualify as a factory worker, warehouse picker, nursing assistant, farm worker, or disaster response unit. Such a leap would be excessively simplistic, and simplifications often lead to misunderstandings.

The wider robotics sector moved beyond mere demonstrations long ago. According to the International Federation of Robotics, 542,000 industrial robots were installed in 2024, more than double the number from ten years prior. Projections suggest installations will hit 575,000 in 2025 and surpass 700,000 by 2028. This does not make Ace a factory machine, but it does place the robot within a broader story of automation that is already visible on production floors.

Even in controlled industrial settings, robots must manage variability instead of endlessly repeating a single flawless motion. In logistics, they contend with crushed boxes, awkward angles, missing labels, and foot traffic that appears at inconvenient moments. Outdoors, mud, weather, uneven terrain, and irregularly shaped produce pay no attention to what the software expects.

The employment implications paint a less appealing picture. McKinsey estimates current technology could theoretically automate activities encompassing about 57% of today’s work hours in the US. That figure is not a forecast of job losses, and McKinsey is careful to emphasize that point.

The implications are subtler and likely more complicated: tasks get fragmented, roles are redefined, and some employees find that “efficiency” often arrives with a spreadsheet and a forced smile.

Certain environments heighten the consequences of mistakes. A chatbot making an error can waste an afternoon, but a robot misinterpreting a patient's balance, a wheelchair, or a hospital corridor can cause significant harm. As AI becomes increasingly embodied, the repercussions of its errors become less forgiving.

The costs are linked to its physical form.

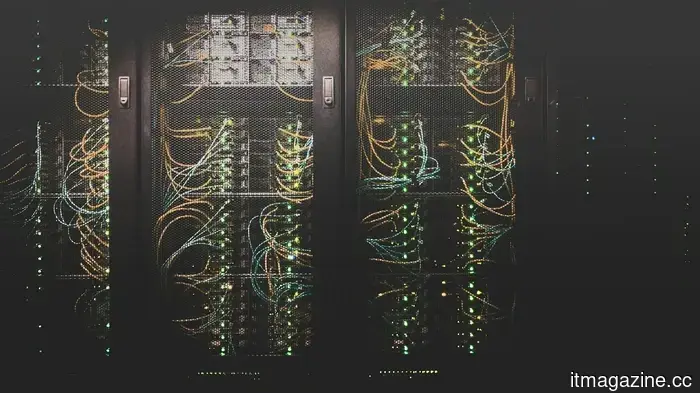

The infrastructure necessary doesn't vanish when AI takes on a physical presence, whether in the form of legs, wheels, or robotic arms. It still relies on chips, data centers, cooling systems, electric power, water, and a grid not designed for a sudden surge in computational need from multiple companies.

The International Energy Agency anticipates that global data center electricity consumption will double to around 945 TWh by 2030, accounting for nearly 3% of worldwide electricity use. That percentage may appear minor until a local grid, water system, or community near a new data center has to cope with this concentration.

However, there are positive aspects as well. More intelligent robots could minimize factory waste, assist in hazardous site inspections, enhance precision farming, and undertake labor-intensive tasks that strain human bodies. The benefits are tangible, but the costs are also significant.

Deep Blue made AI seem formidable within the confines of a board game. Ace, however, illustrates that the board has disappeared, and the pieces now include factories, hospitals, farms, grids, and workers trying to guess what comes next.

Asimov envisioned robots constrained by rules. What we are actually building may be governed first by economics.
