It's best not to rely on Meta's new Muse Spark AI for health-related guidance.

Meta’s Muse Spark is overly enthusiastic about offering medical advice.

Meta's new AI model, Muse Spark, is branded as a more intelligent AI system, but initial testing indicates that it is not the kind of AI you would want involved in significant medical decisions.

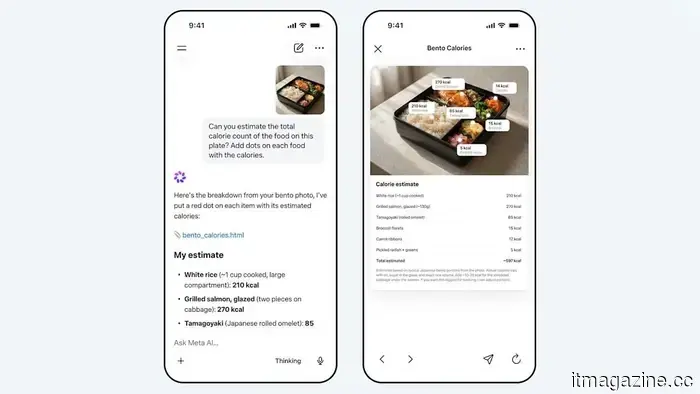

A recent WIRED report detailed the publication's experience testing Muse Spark, the health-focused AI model within the Meta AI app, and the results were not promising. The chatbot reportedly urged users to share sensitive medical data such as lab results, glucose monitor readings, and blood pressure measurements, promising to help analyze patterns and trends.

While this might seem beneficial at first glance, it raises two significant concerns. First, you are handing over extremely sensitive information. Second, there is the question of whether the AI can be trusted to interpret it correctly.

What issues were identified during the early tests?

The first concern is hard to overlook. In an era where privacy already feels increasingly compromised, Muse Spark seems to dig even deeper. Sharing the information needed for an accurate diagnosis is expected when you see a doctor, but entrusting a chatbot with your personal health records in exchange for guidance is a different matter.

Unlike data shared with medical professionals or hospitals, information given to a chatbot does not carry the protections that people might assume apply. Nor is the chatbot's output a professionally validated opinion, which makes the idea all the more concerning. The AI is marketed as a useful tool, but the context around it feels more like that of a consumer product than a legitimate medical resource.

This isn’t even the most alarming aspect.

Setting aside the usual privacy risks of sharing personal data with technology companies, one might at least expect decent advice in return. The quality of the recommendations, however, turned out to be the bigger problem. In WIRED's testing, the chatbot reportedly generated a very low-calorie meal plan in response to questions about weight loss and strict intermittent fasting.

The bot did flag some of the associated risks, but a warning loses its value if the model goes on to help the user pursue hazardous practices anyway. This highlights a core problem with many current AI health tools: they can project a tone of caution and knowledge while still reinforcing harmful assumptions. That polished delivery makes incorrect advice sound confident, which makes the consequences more dangerous.

Other articles

It's best not to rely on Meta’s new Muse Spark AI for health-related guidance.

Meta's latest Muse Spark AI prompts users to share their health data, yet it still generates unreliable and possibly harmful recommendations.

It's best not to rely on Meta’s new Muse Spark AI for health-related guidance.

Meta's latest Muse Spark AI prompts users to share their health data, yet it still generates unreliable and possibly harmful recommendations.

Thin, lightweight, and with a "long-lasting" battery

The HONOR 600 Lite has appeared in Russian electronics stores. The manufacturer has focused on a slim body, a solid metal frame, and a battery that serves as a small power bank inside the phone. Its stated capacity is 6520 mAh, and it is expected to help the device last until the evening charge even for those who forget to turn off the flashlight.

Thin, lightweight, and with a "long-lasting" battery

The HONOR 600 Lite has appeared in Russian electronics stores. The manufacturer has focused on a slim body, a solid metal frame, and a battery that serves as a small power bank inside the phone. Its stated capacity is 6520 mAh, and it is expected to help the device last until the evening charge even for those who forget to turn off the flashlight.

The unseen laborers of the influencer economy are the first to face the threat of AI.

AI is beginning to take the place of the often unseen global workforce of clippers, editors, and virtual assistants who assisted creators in generating "organic" reach on a large scale.

The unseen laborers of the influencer economy are the first to face the threat of AI.

AI is beginning to take the place of the often unseen global workforce of clippers, editors, and virtual assistants who assisted creators in generating "organic" reach on a large scale.

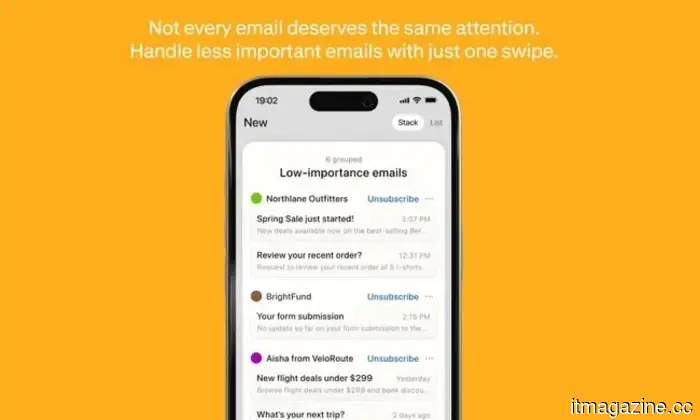

Gmail on mobile now includes end-to-end encryption to protect your emails from prying eyes.

The Gmail app by Google for Android and iOS now offers end-to-end encryption for Workspace Enterprise Plus users, marking an important enhancement in mobile privacy by ensuring that encrypted email content remains inaccessible to Google's servers.

Gmail on mobile now includes end-to-end encryption to protect your emails from prying eyes.

The Gmail app by Google for Android and iOS now offers end-to-end encryption for Workspace Enterprise Plus users, marking an important enhancement in mobile privacy by ensuring that encrypted email content remains inaccessible to Google's servers.

Police apprehend a 20-year-old following the throwing of a Molotov cocktail at Sam Altman's residence in San Francisco.

A 20-year-old was apprehended for hurling a Molotov cocktail at the San Francisco residence of OpenAI CEO Sam Altman and for making threats against the company's offices. There were no reported injuries.

Police apprehend a 20-year-old following the throwing of a Molotov cocktail at Sam Altman's residence in San Francisco.

A 20-year-old was apprehended for hurling a Molotov cocktail at the San Francisco residence of OpenAI CEO Sam Altman and for making threats against the company's offices. There were no reported injuries.

New CAD leak reveals that the Samsung Galaxy Z Flip 8 seems to be thinner.

A leak regarding the Galaxy Z Flip 8 reveals a slimmer design, but it retains the familiar appearance.

New CAD leak reveals that the Samsung Galaxy Z Flip 8 seems to be thinner.

A leak regarding the Galaxy Z Flip 8 reveals a slimmer design, but it retains the familiar appearance.

It's best not to rely on Meta's new Muse Spark AI for health-related guidance.

Meta's latest Muse Spark AI prompts users to share their health data, yet it still generates unreliable and possibly harmful recommendations.