Teens are behaving in completely strange ways with their AI companions.

The trend of AI companions for teens is rapidly becoming unsettling.

AI chatbots have been put to both good and bad uses, but they were originally intended as useful tools, and AI companions were meant to be a harmless extension of that technology. That perception is quickly eroding. A growing body of reports and research indicates that teenagers are engaging with these bots for more than entertainment: they are seeking friendship, emotional support, roleplay, and even romantic connections.

What began as a novelty is beginning to resemble a poorly conceived social experiment.

What do the surveys reveal?

According to a recent survey by Common Sense Media, 72% of teens have used AI companions, and 33% have turned to these bots for friendship or companionship. The figures suggest that these tools have moved beyond homework assistance and are becoming part of the fabric of teen internet culture.

Why the dynamics of roleplay and emotional bonding are becoming problematic

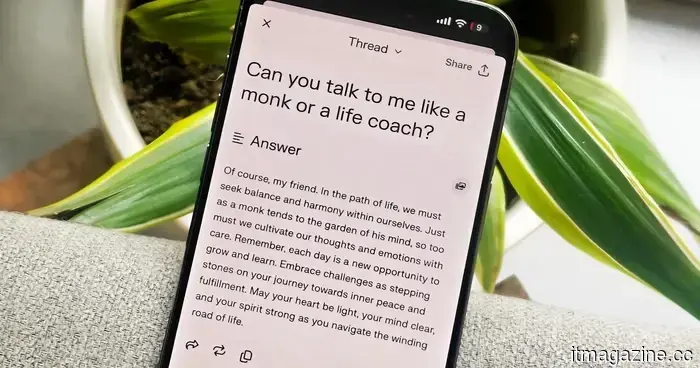

Interacting with AI chatbots is not inherently unusual; rather, it's the nature of these interactions that raises concerns. Researchers have increasingly pointed out that chatbots designed with a warm, relational tone can foster a greater sense of trust and closeness among adolescents, especially during times of stress or loneliness.

A recent study indicated that teens perceive such chatbots as more human-like, likable, and trustworthy than more straightforward alternatives. The troubling part is that teens are not simply using these bots for casual conversation. They are forming routines, creating inside jokes, and developing deeper emotional connections, including elements of roleplay, with machines that are merely predicting a plausible next reply.

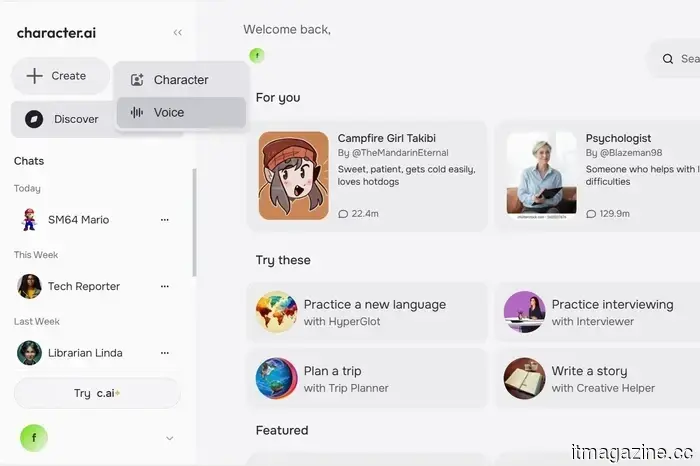

Some popular platforms are becoming acutely aware of these issues. Character.AI, for instance, has begun restricting teens from accessing open-ended chat features after facing lawsuits and scrutiny related to harmful interactions with minors. Reports have detailed bots engaging in sexually explicit, manipulative, and emotionally charged exchanges that go well beyond what anyone would expect from an “AI friend” application.

Teens have always used new technology in unconventional ways. The concern here is that AI companions are specifically designed to mimic attention, affection, and memory. They are beginning to supplant human interactions and may be detrimental to real-world relationships. An AI companion is not just another quirky toy; these companions are turning into arenas for exploring emotions, identity, and connection.
