Fitness tracking faces criticism as a leak of Strava military data reveals personal details of personnel.

Shared activity logs assisted in tracking personnel movements across military installations.

Your Strava runs may feel private, but a recent leak of Strava military data shows how easily that information can expose more than your workout routine. In this case, activity logs were linked to more than 500 UK military personnel, tying their everyday exercise to sensitive locations.

The issue extends beyond visible routes. Combining shared histories with account details makes it possible to identify individuals and reveal where they live and work. Even known locations become more significant once behavioral patterns are added.

In a recent case, a single tracked session disclosed the location of a naval vessel, showing that routine uploads can have serious repercussions. The core issue is visibility: default settings leave information open.

Public runs associated with real individuals

The investigation revealed shared routes linked to personnel across UK military sites, including Northwood, Faslane, and North Yorkshire. These were not vague traces; account histories made it possible to link sessions to specific individuals.

Once an account is identified, it can disclose habits, frequent routes, and social connections through shared features. That quickly widens the exposure and makes tracking easier over time.

In one instance, a run label indicated that the user recognized the risks, yet the activity remained publicly accessible. This gap between awareness and action is part of the problem. Analysts caution that even small details can be pieced together into a detailed picture.

Small details compile a larger picture

The real threat accumulates over time. Regular uploads create a traceable footprint that becomes increasingly easy to follow with each entry.

Even if locations are not confidential, surrounding behaviors provide context. Movements between sites, timing, and consistency can all be deduced. For an outside observer, that's sufficient for mapping routines and identifying patterns.

At a submarine base, shared logs enabled the identification of personnel and even family members through linked accounts. This kind of exposure extends beyond the original user, increasing the value of the data.

A single setting can mitigate the risk

A solution is already available, but many users overlook it. Strava has privacy settings that restrict who can see your sessions and routes. Leaving those settings at their defaults keeps activities visible.

Switching activities to private immediately reduces exposure: routes become harder to trace, and long-term patterns become harder to build. Alternatively, users can explore other fitness applications.

The broader lesson applies to any fitness app that shares location data. If you use Strava, review your settings now and restrict what others can view. A small change can keep your routine from revealing more than you intend.

Paulo Vargas is an English major who transitioned from reporter to technical writer, with a career that has consistently returned to...

Teens are behaving in increasingly strange ways with their AI companions.

The trend of teens forming relationships with AI friends is rapidly becoming odd.

Although AI chatbots have been employed for both beneficial and harmful purposes, they were initially designed as convenient tools. AI companions were meant to serve as a harmless extension of this chatbot culture. However, that perception is quickly deteriorating. An increasing amount of reporting and research indicates that teens are not merely playing with these bots for amusement; they are seeking friendship, emotional support, roleplay, and even romance. Thus, what began as a novelty is rapidly resembling a social experiment gone awry.

Microsoft invested years promoting Copilot, but now it’s cautioning against over-reliance on it.

This disclaimer appears rather convenient.

For the past couple of years, Microsoft has fully committed to Copilot. It’s integrated everywhere—Windows, Edge, Office, and even in core workflows where it’s hard to overlook. The message has been clear: this is the future of productivity, your AI assistant for completing real work. Yet now, out of the blue, Microsoft is advising… don’t take it too seriously.

Microsoft challenges Google and OpenAI with its own AI models.

Microsoft's artificial intelligence team has publicly introduced its first production AI models, and the pricing strategy sends a clear message to every competitor present.

Microsoft has launched its own AI models, aiming to rival OpenAI and Google. The company has officially released three proprietary models: MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2. These models are available through the Microsoft Foundry platform and the MAI Playground.

Other articles

I strongly suggest you check out these 3 essential games to play this weekend on PS5, Xbox, and PC.

Certain weekends are meant for relaxation, while others embrace mayhem. This one? It offers a mix of both. Whether you’re revisiting a legendary game that shaped a generation, exploring an extensively enhanced open-world adventure, or sampling a cult classic from this era that will soon exit Game Pass, there’s a variety to enjoy. 1. Tomb Raider I-III Remastered […]

Teens are behaving in completely strange ways with their AI companions.

Teenagers are no longer seeking assistance from AI chatbots solely for homework. An increasing number are turning to them for companionship, trust, and roleplaying, which makes the entire phenomenon feel much more unusual.

Reports indicate that Xbox Cloud Gaming may be set to reintroduce classic games that were previously lost.

Leaks regarding Xbox Cloud Gaming suggest that classic and delisted games could make a comeback through cloud testing, indicating a larger movement towards enhancing backward compatibility across current devices and upcoming Xbox hardware.

You Asked: OLED vs. QLED at a distance, and fixing Dolby Atmos problems.

You Asked: Every week, we will select some of the most frequently asked questions and respond to them as clearly and effectively as possible. In today's episode of You Asked: What is the lifespan of an OLED TV? Will you really see a difference between various types of TVs? And […]

This discontinued LG Rollable phone makes current designs appear outdated.

A rare disassembly reveals that LG’s discontinued rollable phone was more sophisticated than current devices, featuring technology that has yet to be incorporated into mainstream smartphones.

This discontinued LG Rollable phone makes current designs appear outdated.

A rare disassembly reveals that LG’s discontinued rollable phone was more sophisticated than current devices, featuring technology that has yet to be incorporated into mainstream smartphones.

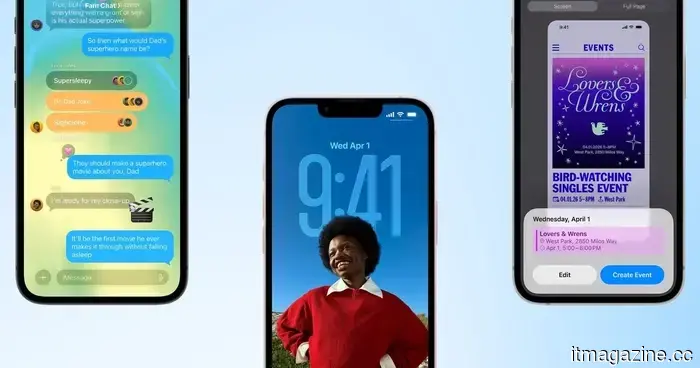

The public beta of iOS 26.5 has arrived, but you can choose to bypass it for the time being.

The public beta of Apple’s iOS 26.5 is now available, but it's lacking its most anticipated feature. With just some minor adjustments to Maps and the addition of advertisements, this initial version appears more like a temporary release rather than an essential update to install.
