This novel AI attack steals models without needing direct access to the system.

A side-channel attack can remotely reconstruct an AI model's architecture from leaked signals.

AI systems have long been treated as black boxes, particularly in fields such as facial recognition and self-driving technology. Recent findings indicate that they may not be as well protected as previously believed.

A research team from KAIST has demonstrated that AI systems can be reverse engineered from a distance by capturing the electromagnetic emissions they produce during normal operation. The approach requires no direct tampering with the target; it relies purely on eavesdropping.

Using a small antenna, the researchers detected faint electromagnetic emissions from GPUs and used them to reconstruct the design of the AI model being run. It may sound like a heist-movie trick, but the findings are credible, and the security ramifications are significant.

Understanding the side channel

The system, known as ModelSpy, captures the electromagnetic emissions produced while GPUs execute AI workloads. These emissions are faint, yet they exhibit patterns tied to the model's architecture.

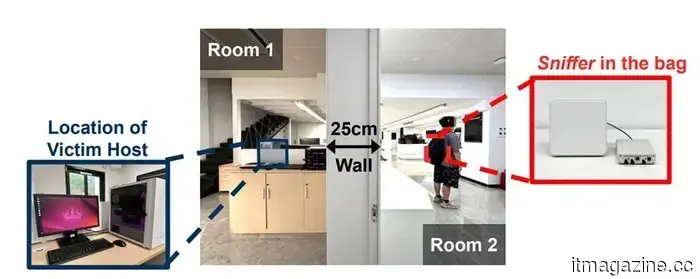

Emissions that reveal an AI model's architecture can be intercepted through walls with an antenna concealed in a bag, according to research presented by Jun Han et al. at the NDSS Symposium 2026.

By analyzing these patterns, the team was able to deduce essential details, such as layer configurations and parameter choices. Their experiments showed that core structures could be identified with an accuracy of up to 97.6 percent.
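To make the idea concrete, here is a minimal toy sketch of how emission patterns could map back to layer types. It is not the ModelSpy pipeline. It assumes, purely for illustration, that each layer type emits a tone at its own frequency (the `SIGNATURES` table is hypothetical) and that the attacker already knows those signatures. Real GPU emissions are far noisier and would need a trained classifier.

```python
import math
import random

# Hypothetical signatures: each layer type is ASSUMED to emit a tone at a
# distinct DFT bin. These values are illustrative, not measured.
SIGNATURES = {"conv": 5, "dense": 12, "pool": 20}
N = 128  # samples per captured trace window


def synth_trace(layer, noise, rng):
    """Simulate one trace window for a layer type (toy model)."""
    f = SIGNATURES[layer]
    return [math.sin(2 * math.pi * f * t / N) + rng.gauss(0.0, noise)
            for t in range(N)]


def bin_energy(trace, k):
    """Energy of DFT bin k, computed directly without an FFT library."""
    re = sum(x * math.cos(2 * math.pi * k * t / N) for t, x in enumerate(trace))
    im = sum(x * math.sin(2 * math.pi * k * t / N) for t, x in enumerate(trace))
    return re * re + im * im


def classify(trace):
    """Pick the layer type whose assumed signature bin carries the most energy."""
    return max(SIGNATURES, key=lambda name: bin_energy(trace, SIGNATURES[name]))


def recover_architecture(layers, noise=0.5, seed=0):
    """Classify one simulated trace per layer and return the guessed sequence."""
    rng = random.Random(seed)
    return [classify(synth_trace(layer, noise, rng)) for layer in layers]


victim = ["conv", "pool", "conv", "dense"]
recovered = recover_architecture(victim)
print(recovered)
```

In this toy setting the signal is much stronger than the noise, so the layer sequence is easy to recover. The reported 97.6 percent figure comes from the researchers' real measurements, not from anything this sketch can reproduce.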

The practicality of the setup is particularly alarming. The antenna fits in a bag, and the attacker needs no physical access to the target machine. It worked at distances of up to six meters, even through walls, and across various GPU types. Here, computation itself serves as the side channel, revealing the model's architecture without a conventional breach.

Repercussions for AI security

This finding pushes AI security into unfamiliar territory. Most protective measures focus on software vulnerabilities or network access. ModelSpy, by contrast, exploits the physical byproducts of computation.

Even systems that are isolated from networks may leak sensitive information if hardware emissions are not properly managed. For companies, a model's architecture is often crucial intellectual property, which makes this a direct business risk.

The work frames model theft as a cyber-physical problem: securing AI now requires attention to both digital defenses and the physical environment, which raises the bar for what counts as adequate protection.

Proposed defense mechanisms

The team has also proposed ways to mitigate the risk, including injecting electromagnetic noise and modifying how computations are carried out so that the resulting patterns are harder to decipher.
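The noise-injection idea can be illustrated with a small simulation. This is a sketch under loud assumptions, not the researchers' countermeasure: layer types are assumed to emit tones at hypothetical frequencies (`SIGNATURES`), the attacker uses a simple spectral-peak classifier, and the defender adds Gaussian masking noise of strength `mask_noise`. It covers only noise injection, not changes to the computation itself.

```python
import math
import random

# Hypothetical per-layer emission signatures (DFT bin index); toy values.
SIGNATURES = {"conv": 5, "dense": 12, "pool": 20}
N = 128  # samples per captured trace window


def synth_trace(layer, sensor_noise, mask_noise, rng):
    """Toy trace: layer tone + receiver noise + deliberately injected noise."""
    f = SIGNATURES[layer]
    return [math.sin(2 * math.pi * f * t / N)
            + rng.gauss(0.0, sensor_noise)
            + rng.gauss(0.0, mask_noise)
            for t in range(N)]


def bin_energy(trace, k):
    """Energy of DFT bin k, computed directly without an FFT library."""
    re = sum(x * math.cos(2 * math.pi * k * t / N) for t, x in enumerate(trace))
    im = sum(x * math.sin(2 * math.pi * k * t / N) for t, x in enumerate(trace))
    return re * re + im * im


def classify(trace):
    """Attacker's guess: the layer whose signature bin has the most energy."""
    return max(SIGNATURES, key=lambda name: bin_energy(trace, SIGNATURES[name]))


def attack_accuracy(mask_noise, trials=60, seed=0):
    """Fraction of randomly chosen layers the toy attacker identifies correctly."""
    rng = random.Random(seed)
    layers = list(SIGNATURES)
    hits = 0
    for _ in range(trials):
        layer = rng.choice(layers)
        trace = synth_trace(layer, 0.5, mask_noise, rng)
        hits += classify(trace) == layer
    return hits / trials


baseline = attack_accuracy(mask_noise=0.0)
defended = attack_accuracy(mask_noise=40.0)
print(f"no masking: {baseline:.2f}, with masking: {defended:.2f}")
```

In this toy model, strong masking noise drowns the signature tones and pushes the attacker toward guessing. A real deployment would have to weigh the cost of generating such noise against the protection gained, and a more capable attacker could average many traces to recover the signal.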

These mitigations point to a broader shift. Protecting AI may require hardware-level changes rather than software fixes alone, which complicates adoption for industries already committed to existing systems.

The research has been recognized at a prominent security conference, underscoring how seriously the threat is being taken. Future attacks may come not through direct breaches but from simply observing what systems inadvertently reveal.
