This novel AI attack extracts models without interacting with the system.

A side-channel attack can reconstruct AI model architectures from a distance by using leaked electromagnetic signals.

AI systems have traditionally been regarded as secure black boxes, particularly in fields such as facial recognition and autonomous driving. However, recent findings indicate that this presumed protection may be weaker than previously thought.

A research team from KAIST has demonstrated that AI systems can be reverse engineered from a distance by capturing emissions produced during normal operation. The method involves no direct intrusion; it relies on passive listening.

Using a small antenna, the researchers detected faint electromagnetic emissions from GPUs and were able to reconstruct the system's design. While it may sound like a method from a heist movie, the findings are credible, and the security ramifications are significant.

Understanding the side channel's operation

The system, termed ModelSpy, captures the electromagnetic output that GPUs generate while processing AI tasks. These emissions are faint, but they carry patterns tied to how the model's architecture is laid out.

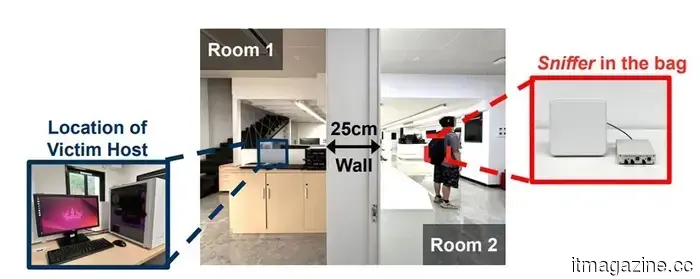

AI model configurations can be extracted from a distance using an antenna concealed in a bag, as noted in a paper presented by Jun Han et al. at the NDSS Symposium 2026.

By examining these patterns, the researchers deduced essential information, including the arrangement of layers and parameter selections. Their tests showed that fundamental structures could be recognized with an accuracy of up to 97.6 percent.
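To make the idea of pattern-based inference concrete, here is a toy Python sketch. It is not ModelSpy's method, which this article does not describe in detail. It assumes, purely for illustration, that each layer type emits a tone at its own frequency. The names `SIGNATURES`, `simulate_trace`, `tone_energy`, `classify`, and `recover_architecture`, along with every number in the code, are hypothetical.

```python
import math
import random

# Hypothetical signatures: each layer type is ASSUMED to emit a tone at a
# distinct frequency (in cycles per sample). Real emissions are far messier.
SIGNATURES = {"conv": 0.05, "dense": 0.12, "pool": 0.21}


def simulate_trace(layer, n=256, noise=0.5, rng=random):
    """Return a synthetic emission trace: one sinusoid plus Gaussian noise."""
    f = SIGNATURES[layer]
    return [math.sin(2 * math.pi * f * t) + rng.gauss(0.0, noise)
            for t in range(n)]


def tone_energy(trace, f):
    """Energy of the trace at frequency f (a single DFT bin, computed directly)."""
    re = sum(x * math.cos(2 * math.pi * f * t) for t, x in enumerate(trace))
    im = sum(x * math.sin(2 * math.pi * f * t) for t, x in enumerate(trace))
    return re * re + im * im


def classify(trace):
    """Pick the layer type whose assumed signature frequency carries the most energy."""
    return max(SIGNATURES, key=lambda name: tone_energy(trace, SIGNATURES[name]))


def recover_architecture(layers, noise=0.5, seed=0):
    """Simulate one trace per layer and return the classifier's guesses in order."""
    rng = random.Random(seed)
    return [classify(simulate_trace(layer, noise=noise, rng=rng))
            for layer in layers]
```

Calling `recover_architecture(["conv", "conv", "pool", "dense"])` passes one synthetic trace per layer through the classifier and returns the guessed layer sequence. A real attack would have to cope with overlapping signals, unknown signatures, and distance-dependent attenuation, none of which this sketch models.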

What makes this alarming is how modest the setup is. The antenna fits inside a bag and requires no physical access to the target. It worked from as far as six meters away, even through walls, and across various GPU types. The act of computation itself becomes a channel, revealing the system's design without any conventional breach.

Implications for AI security

This development pushes AI security into less familiar territory. Most protective measures have concentrated on software vulnerabilities or network access. ModelSpy, however, targets the physical byproducts of computation.

Even isolated systems could expose sensitive information if hardware emissions are not properly managed. For organizations, a model's architecture is often critical intellectual property, which makes this a direct business risk.

The work frames this as a challenging cyber-physical problem: protecting AI now requires both digital defenses and attention to the physical environment, which raises the bar for what counts as adequate protection.

Current defense strategies

The research team also proposed strategies for minimizing risks, such as introducing electromagnetic noise and altering computation methods to obscure patterns.
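The noise-based mitigation can be illustrated with a toy Python sketch. This is not the researchers' countermeasure. It only assumes that an attacker matches an observed trace against a known template, and it shows how added masking noise weakens that match. The names `emission`, `with_masking_noise`, and `template_match`, and all parameter values, are hypothetical.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def emission(n=512, f=0.08):
    """Hypothetical clean emission pattern: a single sinusoid."""
    return [math.sin(2 * math.pi * f * t) for t in range(n)]


def with_masking_noise(trace, sigma, rng):
    """Add Gaussian masking noise with standard deviation sigma to a trace."""
    return [x + rng.gauss(0.0, sigma) for x in trace]


def template_match(sigma, seed=0):
    """Correlation between the clean template and the noise-masked observation."""
    rng = random.Random(seed)
    clean = emission()
    return pearson(clean, with_masking_noise(clean, sigma, rng))
```

Comparing `template_match(0.0)` with `template_match(5.0)` shows the correlation falling as the masking noise grows. In this simplified model, stronger noise buys more protection at the cost of generating more deliberate emissions, and an attacker who can average many traces may recover part of the signal.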

These solutions point to a wider shift. Safeguarding AI may require changes at the hardware level rather than software updates alone, which complicates deployment for industries already committed to existing systems.

This research garnered recognition at a prominent security conference, reflecting the seriousness with which this threat is regarded. Future exposures may not stem from direct infiltration but could instead arise from passively observing what systems inadvertently disclose.
