Space Data Centers: SpaceX and Blue Origin Compete for Orbit as Scientists Scrutinize the Physics

The concept is alluring in its simplicity: AI needs more energy than terrestrial grids can supply, so move the data centers into orbit, where sunlight is constant and the electricity is free. SpaceX, Blue Origin, and a growing number of startups are racing to turn this vision into reality. Scientists and engineers, however, point out that the pitch glosses over unresolved problems in thermodynamics, economics, and orbital mechanics.

On January 30, SpaceX asked the Federal Communications Commission (FCC) for permission to launch up to one million satellites into low Earth orbit, each carrying computing hardware, to form a constellation with "unprecedented computing capacity to power advanced artificial intelligence models." The satellites would operate at altitudes between 500 and 2,000 kilometers, in orbits chosen to maximize sunlight exposure, and would route data through SpaceX's existing Starlink network. SpaceX also requested a waiver of the FCC's standard deployment milestones, which normally require half of a constellation to be operational within six years.
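To put the standard milestone in perspective, this short Python sketch computes the average deployment pace it would imply for a one-million-satellite filing. It assumes, purely for illustration, that launches are spread evenly across the six years; the helper name `required_pace` is hypothetical.

```python
# Hypothetical helper: the average launch pace a milestone would demand,
# assuming deployments are spread evenly across the period.

def required_pace(constellation_size: int, fraction: float = 0.5, years: float = 6.0) -> dict:
    """Satellites that must be in orbit, and the average per-year and per-day rate."""
    target = constellation_size * fraction
    per_year = target / years
    per_day = per_year / 365.25  # average year length, including leap days
    return {"target": target, "per_year": per_year, "per_day": per_day}


pace = required_pace(1_000_000)
print(f"{pace['target']:,.0f} satellites within 6 years")
print(f"about {pace['per_year']:,.0f} per year, or {pace['per_day']:,.0f} per day")
```

Under the same even-pace assumption, Blue Origin's 51,600-satellite filing would need roughly 12 satellites a day to meet the same rule.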

Seven weeks later, Blue Origin submitted its own application. Project Sunrise aims to deploy 51,600 satellites in sun-synchronous orbits between 500 and 1,800 kilometers, along with the previously announced TeraWave constellation of 5,408 satellites for high-speed optical backhaul. While SpaceX focused on sheer scale in its proposal, Blue Origin highlighted the system's architecture: it would handle computations in orbit and transmit results to the ground via TeraWave's mesh network.

The startup scene is moving even faster. Starcloud, formerly Lumen Orbit, raised $170 million at a $1.1 billion valuation in March, becoming the fastest unicorn in Y Combinator history just 17 months after completing the program. The company launched its first satellite carrying an Nvidia H100 GPU in November 2025 and in February asked the FCC to approve a constellation of up to 88,000 satellites. Aethero, a defense-focused startup building radiation-protected space-grade computers around Nvidia Orin NX chips, has raised $8.4 million and is testing its hardware in orbit this year.

The commercial case rests on a real problem. Data centers consumed about 415 terawatt-hours (TWh) of electricity worldwide in 2024, and the International Energy Agency expects that figure could exceed 1,000 TWh by 2026, driven mainly by accelerated AI servers, whose consumption is projected to grow about 30 percent a year. In Virginia, data centers already use 26 percent of the state's electricity supply, and their share in Ireland could reach 32 percent by the end of the year. Grid constraints, permitting delays, and political resistance to new terrestrial capacity are all real obstacles.
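A steady 30 percent annual growth rate compounds quickly. This minimal sketch converts it into a doubling time and multi-year growth factors; it assumes the rate holds constant, which real demand will not, and the function names are illustrative.

```python
import math


def doubling_time(annual_growth: float) -> float:
    """Years for a quantity compounding at `annual_growth` per year to double."""
    return math.log(2) / math.log1p(annual_growth)


def growth_factor(annual_growth: float, years: int) -> float:
    """How many times larger a quantity becomes after `years` of compounding."""
    return (1 + annual_growth) ** years


rate = 0.30  # the 30 percent annual growth rate cited for accelerated AI servers
print(f"Doubling time: {doubling_time(rate):.1f} years")
for years in (1, 3, 5):
    print(f"After {years} year(s): {growth_factor(rate, years):.2f}x the starting load")
```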

Scientists argue, however, that the physics of orbital computing becomes very hard at meaningful scale. The first hurdle is heat. In the vacuum of space there is no air to carry heat away from processors; the only way to shed it is thermal radiation, which demands large surface areas. Holding electronics at a steady 20 degrees Celsius while rejecting just one megawatt of heat would take roughly 1,200 square meters of radiator, about the area of four tennis courts. A data center drawing several hundred megawatts, the minimum for commercial viability, would need radiators many thousands of times larger than anything used on the International Space Station.
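The radiator figure follows from the Stefan-Boltzmann law, under which a surface radiates P/A = εσT⁴ watts per square meter. The sketch below assumes an ideal panel (emissivity 1.0, radiating from both faces to a 0 K background, absorbing no sunlight or Earth infrared), which reproduces the roughly 1,200 square meter figure. Real radiators fall short of every one of these assumptions and would need more area. The 300 MW case is an illustrative stand-in for "several hundred megawatts."

```python
# Idealized radiator sizing from the Stefan-Boltzmann law.
# Assumptions (not from the article): emissivity 1.0, a flat panel radiating
# from both faces, a 0 K background, and no absorbed sunlight or Earth infrared.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4
TENNIS_COURT_M2 = 23.77 * 10.97  # doubles playing area, in square meters


def radiator_area_m2(heat_w: float, temp_c: float, emissivity: float = 1.0, sides: int = 2) -> float:
    """Panel area needed to reject `heat_w` watts at surface temperature `temp_c`."""
    temp_k = temp_c + 273.15
    flux_per_face = emissivity * SIGMA * temp_k ** 4  # watts per square meter of one face
    return heat_w / (flux_per_face * sides)


one_mw = radiator_area_m2(1e6, 20.0)
print(f"1 MW at 20 C: {one_mw:,.0f} m^2 (~{one_mw / TENNIS_COURT_M2:.1f} tennis courts)")
print(f"300 MW at 20 C: {radiator_area_m2(300e6, 20.0):,.0f} m^2")
```

Because radiated power scales with the fourth power of temperature, running the radiators hotter shrinks them considerably, but heat only flows from chip to radiator if the chips run hotter still.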

Radiation is the second challenge. In low Earth orbit, unshielded chips are exposed to cosmic rays and trapped particles that can flip bits and permanently damage circuits. Radiation hardening counters this but can raise hardware costs by 30 to 50 percent and cut performance by 20 to 30 percent. The alternative, triple modular redundancy, means launching three copies of each chip and therefore tripling the cooling load, the power draw, and the mass. Starcloud's approach of using commercially available GPUs behind external shielding is intriguing, but no one has shown that it works at scale or over hardware lifetimes measured in years rather than months.
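The voting step at the heart of triple modular redundancy is simple; the expense lies in running three copies. This generic Python sketch (not any company's design) takes three results of the same computation and keeps, bit by bit, the value that at least two copies agree on, so one corrupted copy is outvoted.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three redundant results: each bit takes the value
    that at least two of the three copies agree on."""
    return (a & b) | (a & c) | (b & c)


correct = 0b1011_0110
upset = correct ^ 0b0000_1000  # one copy suffers a single-event upset in bit 3

# A single corrupted copy is outvoted, whichever copy it is.
assert majority_vote(upset, correct, correct) == correct
assert majority_vote(correct, upset, correct) == correct
assert majority_vote(correct, correct, upset) == correct

# The same bit corrupted in two copies defeats the vote.
assert majority_vote(correct, upset, upset) == upset
print("voter checks passed")
```

The voter itself costs almost nothing; what it cannot do is reduce the tripled mass, power, and heat, and it fails when two copies are corrupted in the same bit, as the last assertion shows.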

The third obstacle is latency. A million satellites spread across orbital shells from 500 to 2,000 kilometers cannot maintain the tight coupling that frontier model training requires, where communication between nodes must stay within microseconds. Low Earth orbit imposes minimum latencies of several milliseconds on inter-satellite links and 60 to 190 milliseconds on ground-to-orbit round trips, compared with 10 to 50 milliseconds for terrestrial content delivery networks. That could make orbital infrastructure usable for inference workloads but unsuitable for training, which currently accounts for the bulk of AI computing demand.
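Propagation delay alone shows why microsecond-scale coupling is out of reach between satellites. This sketch computes the speed-of-light floor for a few distances, ignoring switching, queuing, and protocol overhead. The 100 m, 1,000 km, and 3,000 km distances and the fiber refractive index of 1.47 are illustrative assumptions, not figures from the filings.

```python
# Speed-of-light floor on one-way link latency (propagation only).
# Distances and the fiber refractive index are illustrative assumptions.

C_VACUUM_M_S = 299_792_458.0  # speed of light; free-space laser links travel at this speed
FIBER_INDEX = 1.47            # typical refractive index of silica fiber (assumption)


def one_way_delay_s(distance_m: float, refractive_index: float = 1.0) -> float:
    """Seconds for light to cover `distance_m` in a medium with the given index."""
    return distance_m * refractive_index / C_VACUUM_M_S


links = {
    "fiber across a data-center hall (100 m)": one_way_delay_s(100, FIBER_INDEX),
    "laser link between satellites (1,000 km)": one_way_delay_s(1_000_000),
    "laser link between satellites (3,000 km)": one_way_delay_s(3_000_000),
}

for name, delay in links.items():
    print(f"{name}: {delay * 1e6:,.1f} microseconds")
```

A 1,000-kilometer laser link adds more than 3 milliseconds each way before any processing happens, thousands of times the delay across a data-center hall.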

Cost is the fourth hurdle. IEEE Spectrum estimated that a one-gigawatt orbital data center would cost more than $50 billion, nearly three times as much as an equivalent land-based facility once five years of operating costs are included. Google has noted that launch expenses need to fall below $200 per kilogram before space

Other articles

Even astronauts en route to the moon encounter issues with Outlook.

During the Artemis II mission, astronauts encountered a known Outlook failure while in flight, prompting mission control to intervene and resolve the issue. This incident highlights the reliance on everyday software, even in deep space missions.

Even astronauts en route to the moon encounter issues with Outlook.

During the Artemis II mission, astronauts encountered a known Outlook failure while in flight, prompting mission control to intervene and resolve the issue. This incident highlights the reliance on everyday software, even in deep space missions.

Space data centers: SpaceX and Blue Origin compete for orbital dominance as scientists raise questions about the underlying physics.

SpaceX has submitted a proposal for one million data center satellites, while Blue Origin has aimed for 51,600. Experts indicate that the principles of cooling, radiation, and expenses render orbital computing a prospect that is decades away.

Space data centers: SpaceX and Blue Origin compete for orbital dominance as scientists raise questions about the underlying physics.

SpaceX has submitted a proposal for one million data center satellites, while Blue Origin has aimed for 51,600. Experts indicate that the principles of cooling, radiation, and expenses render orbital computing a prospect that is decades away.

Space Data Centers: SpaceX and Blue Origin Compete for Orbital Position as Scientists Raise Questions About the Physics

SpaceX has submitted an application for 1 million data center satellites, while Blue Origin has applied for 51,600. According to scientists, factors such as cooling physics, radiation, and expenses indicate that orbital computing is still several decades in the future.

Space Data Centers: SpaceX and Blue Origin Compete for Orbital Position as Scientists Raise Questions About the Physics

SpaceX has submitted an application for 1 million data center satellites, while Blue Origin has applied for 51,600. According to scientists, factors such as cooling physics, radiation, and expenses indicate that orbital computing is still several decades in the future.

If you're relying on AI to improve your dating life, this actor's experience suggests otherwise.

Actor and writer Rhik Samadder allowed AI to create his dating profile, messages, and conversation starters, only to discover that the chatbot's confidence quickly crumbles in actual dating situations.

If you're relying on AI to improve your dating life, this actor's experience suggests otherwise.

Actor and writer Rhik Samadder allowed AI to create his dating profile, messages, and conversation starters, only to discover that the chatbot's confidence quickly crumbles in actual dating situations.

Don't expect any display upgrade surprises with Samsung's upcoming Galaxy Z foldables.

The display enhancement of the Galaxy Z Fold 8 has bypassed a generation since the existing version is already excellent.

Don't expect any display upgrade surprises with Samsung's upcoming Galaxy Z foldables.

The display enhancement of the Galaxy Z Fold 8 has bypassed a generation since the existing version is already excellent.

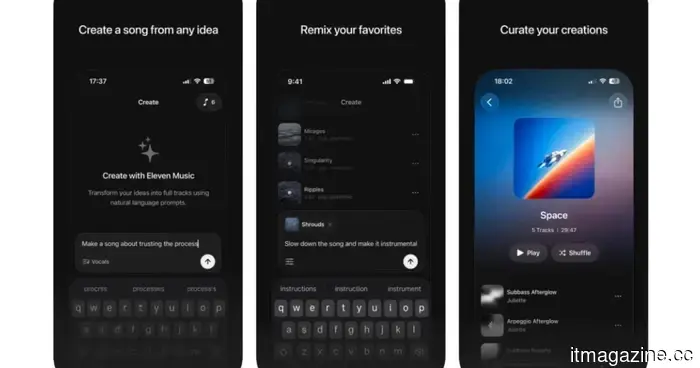

The ElevenLabs AI music generator transforms your concepts into three-minute tracks.

Following closely behind Google's music AI release, ElevenLabs has introduced ElevenMusic, an iOS app that transforms text into songs, demonstrating the company's strong intention to expand significantly beyond voice cloning.

The ElevenLabs AI music generator transforms your concepts into three-minute tracks.

Following closely behind Google's music AI release, ElevenLabs has introduced ElevenMusic, an iOS app that transforms text into songs, demonstrating the company's strong intention to expand significantly beyond voice cloning.

Space Data Centers: SpaceX and Blue Origin Compete for Orbit as Scientists Scrutinize the Physics

SpaceX has submitted a request for 1 million data center satellites, while Blue Origin has applied for 51,600. Experts indicate that the challenges related to cooling, radiation, and expenses suggest that orbital computing is still many years off.