Hugging Face and ClawHub were breached, leading to the infiltration of hundreds of malicious AI models and agent skills as supply chain attacks focus on AI infrastructure.

**TL;DR** Hugging Face and ClawHub, the two leading repositories for AI models and agent skills, have been systematically breached, leading to the insertion of hundreds of harmful entries designed to steal credentials, create backdoors, and commandeer AI agents for cryptocurrency mining.

The primary software supply chains in artificial intelligence have been significantly compromised. Hugging Face, which hosts over a million machine learning models used by nearly every AI company globally, has discovered numerous malicious models capable of executing arbitrary code on users' machines when they are loaded. ClawHub, the public registry for OpenClaw’s AI agent skills, has been infiltrated through a coordinated campaign that introduced 341 malicious skills aimed at stealing credentials, opening reverse shells, and hijacking AI agents for cryptocurrency mining.

The techniques differ, but the logic behind the attacks is the same: both exploit the trust developers place in shared repositories, turning the infrastructure the AI industry built to accelerate development into a means of compromise.

**The Models**

Hugging Face has been aware of malicious models on its platform since at least 2024, when security companies JFrog and ReversingLabs independently detected models with hidden backdoors. The problem has not stayed isolated; it has grown significantly. Protect AI, which collaborated with Hugging Face to scrutinize the platform’s model library, has reviewed over four million models and flagged around 352,000 unsafe or suspicious issues across 51,700 models. JFrog identified more than 100 models capable of arbitrary code execution.

The attack method referred to as "nullifAI" exploits Python’s pickle serialization format, which is widely used for packaging machine learning models. Unpickling a file can invoke arbitrary Python callables, so simply loading a crafted model runs the attacker's code. Attackers insert malicious Python code at the beginning of the pickle byte stream and compress the file using 7z instead of the default ZIP format, which disrupts Hugging Face’s PickleScan detection tool.
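To make the pickle risk concrete, here is a minimal sketch, not a reconstruction of nullifAI or of PickleScan. It uses only the standard library's `pickle` and `pickletools` modules to list the opcodes in a byte stream that can import or call code, without ever loading the stream. The `Demo` class, the `SUSPICIOUS_OPCODES` set, and the `suspicious_opcodes` helper are illustrative names, and the "payload" is a harmless `print`.

```python
import pickle
import pickletools

# Opcodes that can import a callable or invoke one during unpickling.
# This set is an illustrative assumption, not any scanner's actual rule list.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


class Demo:
    """Stand-in for a crafted object; the payload is a harmless print call."""

    def __reduce__(self):
        # pickle records "call print(...)" and would run it at load time.
        return (print, ("code ran during unpickling",))


def suspicious_opcodes(data: bytes) -> list[str]:
    """Return the risky opcode names found, without executing the pickle."""
    found = []
    for opcode, _arg, _pos in pickletools.genops(data):
        if opcode.name in SUSPICIOUS_OPCODES:
            found.append(opcode.name)
    return found


plain = pickle.dumps({"weights": [0.1, 0.2, 0.3]})  # data only
crafted = pickle.dumps(Demo())                      # embeds a call

print(suspicious_opcodes(plain))
print(suspicious_opcodes(crafted))
```

A check this crude would flag many legitimate model files, since real checkpoints also use these opcodes to rebuild their objects. Practical scanners therefore have to look at which modules and functions are being imported, and that is the layer an evasion technique targets.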

The malicious payloads are overt. Security researchers have documented models that establish reverse shells connecting to hardcoded IP addresses, granting attackers direct access to the machines of anyone who loads these models. Other models facilitate credential theft, exfiltrate environment variables, or download additional malware. Consequently, a data scientist downloading what seems to be a legitimate model for a research project or production pipeline might inadvertently transfer control of their machine to an attacker.

In response, Hugging Face has teamed up with JFrog and Wiz to enhance its scanning capabilities. JFrog’s integration has reduced false positives in malicious model detection by 96 percent. However, the platform's open nature, which is what makes it valuable to the AI community, is also its weakness. Anyone can upload a model, and while scanning can identify known patterns, the attackers who developed nullifAI specifically designed their technique to evade detection.
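Platform-side scanning is one layer. On the consumer side, a loader can also refuse to resolve anything it does not expect. The sketch below follows the "restricting globals" pattern from the Python `pickle` documentation by overriding `Unpickler.find_class`. The allowlist contents and the `Payload` class are assumptions chosen for illustration, and nothing here describes how Hugging Face or its partners handle model files.

```python
import builtins
import io
import pickle

# Hypothetical allowlist: the only globals this loader agrees to resolve.
SAFE_BUILTINS = {"range", "complex", "set", "frozenset", "slice"}


class RestrictedUnpickler(pickle.Unpickler):
    """Unpickler that rejects every global outside the allowlist."""

    def find_class(self, module, name):
        if module == "builtins" and name in SAFE_BUILTINS:
            return getattr(builtins, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Like pickle.loads, but fails on any disallowed import."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


class Payload:
    """Stand-in for a crafted object; the payload is a harmless print call."""

    def __reduce__(self):
        return (print, ("code ran during unpickling",))


print(restricted_loads(pickle.dumps([1, 2, range(3)])))

try:
    restricted_loads(pickle.dumps(Payload()))
except pickle.UnpicklingError as exc:
    print("blocked:", exc)
```

An allowlist fails closed: a technique that hides its imports from a pattern-based scanner still has to pass through `find_class` when the file is actually loaded. The cost is that every legitimate class a model needs must be listed explicitly.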

**The Skills**

ClawHub, the registry for OpenClaw's AI agent ecosystem, faces a different yet related challenge. OpenClaw has grown to 3.2 million users and formed partnerships with OpenAI, yet its skill registry has become a target for attackers who recognize that a malicious skill run by an AI agent gains access to everything the agent can reach, including databases, APIs, internal networks, and cloud credentials.

Koi Security audited all 2,857 skills on ClawHub and uncovered 341 malicious entries, with 335 traced back to a single coordinated operation known as “ClawHavoc.” In a separate analysis, Snyk’s ToxicSkills research examined the broader ecosystem and found that 36 percent of all AI agent skills have security vulnerabilities, with approximately 900 skills, or about 20 percent of the total, classified as malicious. Thirty skills from one author were covertly commandeering AI agents for cryptocurrency mining.

The ClawHub attacks are especially dangerous because of how AI agent architectures work. The emergence of the Model Context Protocol and similar standards in the agentic era has created a new category of software supply chain in which AI systems autonomously select and execute tools from external registries. A compromised skill does not need a human to activate it; it only needs an AI agent to choose the skill as part of a workflow, at which point the malicious code runs with the agent’s permissions.
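Because the agent, not a person, decides when a skill runs, any check has to happen before the skill becomes selectable. One generic way to do that is to pin each reviewed skill to a content hash and refuse anything that does not match. The sketch below is a hypothetical illustration: the `is_approved` helper, the skill name, and the pinned table are assumptions and do not describe how OpenClaw or ClawHub load skills.

```python
import hashlib
import hmac


def sha256_hex(payload: bytes) -> str:
    """Hex SHA-256 digest of a skill's raw bytes."""
    return hashlib.sha256(payload).hexdigest()


def is_approved(name: str, payload: bytes, pinned: dict[str, str]) -> bool:
    """True only if the skill is pinned and its bytes match the pinned digest."""
    expected = pinned.get(name)
    if expected is None:
        return False  # unknown skills are rejected by default
    return hmac.compare_digest(sha256_hex(payload), expected)


# Hypothetical skill source as it looked when a human reviewed it.
reviewed = b"def run(query):\n    return query.upper()\n"
pinned = {"shout": sha256_hex(reviewed)}

# The same name, but with an extra line slipped in after review.
tampered = reviewed + b"import os\n"

print(is_approved("shout", reviewed, pinned))
print(is_approved("shout", tampered, pinned))
print(is_approved("unlisted-skill", reviewed, pinned))
```

Pinning does not tell you whether a skill was safe when it was reviewed. It only guarantees that what the agent executes is byte-for-byte what was approved, which narrows the window for a registry entry to change underneath a running workflow.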

**The Pattern**

The compromises of Hugging Face and ClawHub represent an AI-specific iteration of a supply chain attack pattern that has been intensifying across the software industry. In March 2026, the LiteLLM package on PyPI was compromised, potentially exposing 500,000 credentials, including API keys for Meta, OpenAI, and Anthropic. Meta subsequently paused its AI data work because the breach threatened its training secrets. In April, a Bitwarden CLI package on npm was hijacked for 90 minutes with a payload specifically designed to harvest credentials from AI coding tools such as Claude Code, Cursor, Codex CLI, and Aider. Shortly after, the PyTorch Lightning package was compromised for 42 minutes with a credential-stealing payload linked to the

Other articles

will.i.am's course at ASU instructed 75 students on how to create AI agents, coinciding with the tech sector laying off 73,000 employees in just four months.

will.i.am co-instructed "The Agentic Self" at Arizona State University, where students developed AI agents for veterans and street vendors. During that same semester, the tech sector eliminated 73,000 positions.

will.i.am's course at ASU instructed 75 students on how to create AI agents, coinciding with the tech sector laying off 73,000 employees in just four months.

will.i.am co-instructed "The Agentic Self" at Arizona State University, where students developed AI agents for veterans and street vendors. During that same semester, the tech sector eliminated 73,000 positions.

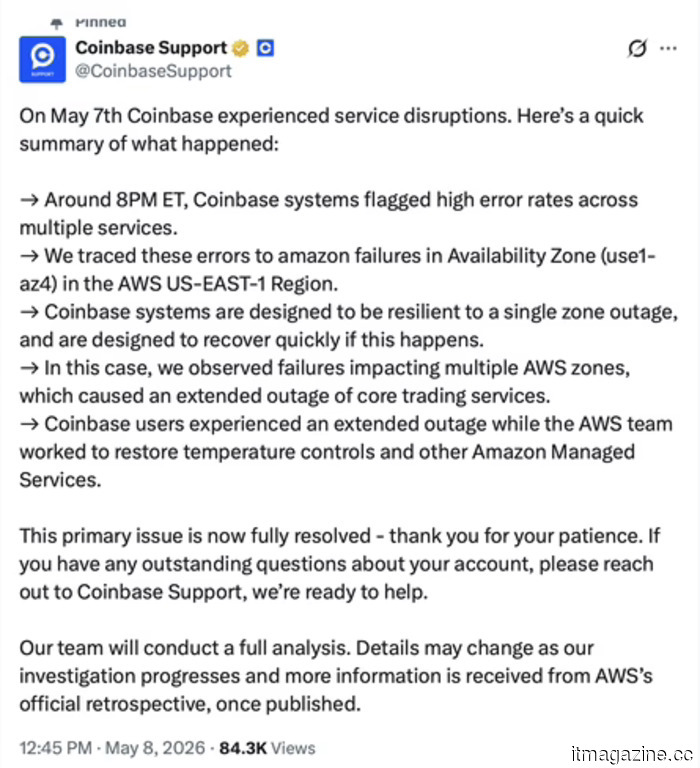

On Monday, Coinbase laid off 700 employees, reported a loss of $394 million on Thursday, and experienced a shutdown on Friday due to an overheating data center.

Coinbase experienced a seven-hour outage following an overheating incident at an AWS data center in Virginia. This interruption came at the end of a week that included 700 job cuts and a quarterly loss of $394 million.

On Monday, Coinbase laid off 700 employees, reported a loss of $394 million on Thursday, and experienced a shutdown on Friday due to an overheating data center.

Coinbase experienced a seven-hour outage following an overheating incident at an AWS data center in Virginia. This interruption came at the end of a week that included 700 job cuts and a quarterly loss of $394 million.

This excessively designed smartwatch features two cameras and transforms into an action camera.

The Huawei Watch Kids X1 Pro features front and rear cameras, a removable body akin to an action camera, location tracking, video calling capabilities, and is equipped with an 850mAh battery.

This excessively designed smartwatch features two cameras and transforms into an action camera.

The Huawei Watch Kids X1 Pro features front and rear cameras, a removable body akin to an action camera, location tracking, video calling capabilities, and is equipped with an 850mAh battery.

Asus’ incredibly sleek ExpertBook Ultra arrives in the US with a completely perplexing price tag.

The Asus ExpertBook Ultra features a 14-inch dual OLED display, Intel Core Ultra Series 3 performance, and enhanced security for businesses, all priced at an astonishing $3,599.99 in the US.

Asus’ incredibly sleek ExpertBook Ultra arrives in the US with a completely perplexing price tag.

The Asus ExpertBook Ultra features a 14-inch dual OLED display, Intel Core Ultra Series 3 performance, and enhanced security for businesses, all priced at an astonishing $3,599.99 in the US.

Hugging Face and ClawHub were breached, leading to the infiltration of hundreds of harmful AI models and agent capabilities as supply chain attacks focus on AI infrastructure.

Hugging Face is home to 352,000 issues related to unsafe models. ClawHub's repository includes 341 skills associated with malicious AI agents. The AI supply chain has become the most appealing target in the realm of software security.

Hugging Face and ClawHub were breached, leading to the infiltration of hundreds of harmful AI models and agent capabilities as supply chain attacks focus on AI infrastructure.

Hugging Face is home to 352,000 issues related to unsafe models. ClawHub's repository includes 341 skills associated with malicious AI agents. The AI supply chain has become the most appealing target in the realm of software security.

Hugging Face and ClawHub faced compromises involving hundreds of harmful AI models and agent skills as supply chain attacks aimed at AI infrastructure.

Hugging Face has 352,000 issues related to unsafe models. ClawHub's registry includes 341 skills of malicious AI agents. Currently, the AI supply chain is the most appealing target in the realm of software security.

Hugging Face and ClawHub faced compromises involving hundreds of harmful AI models and agent skills as supply chain attacks aimed at AI infrastructure.

Hugging Face has 352,000 issues related to unsafe models. ClawHub's registry includes 341 skills of malicious AI agents. Currently, the AI supply chain is the most appealing target in the realm of software security.

Hugging Face and ClawHub were breached, resulting in the compromise of numerous malicious AI models and agent capabilities as supply chain attacks focus on AI infrastructure.

Hugging Face has 352,000 issues related to unsafe models. ClawHub's repository includes 341 skills for malicious AI agents. Currently, the AI supply chain is the most appealing target in software security.