Google has entered into a classified AI agreement with the Pentagon for

**TL;DR** Google finalized a classified AI agreement with the Pentagon for “any lawful government purpose” just a day after over 580 employees urged CEO Sundar Pichai to reject such arrangements. The contract includes stipulations against mass surveillance and autonomous weapons without human oversight, but the government retains the ability to alter safety protocols. Simultaneously, Bloomberg uncovered that Google discreetly withdrew from a $100 million drone swarm competition in February after an internal ethical assessment. While Google aims to differentiate between selling general-purpose AI and developing specific weaponry, this distinction may lose relevance in classified environments.

Google has entered into a deal that allows the Pentagon to use its Gemini AI models for classified military work, under terms permitting “any lawful government purpose.” The confirmation came a day after more than 580 employees signed a letter urging CEO Sundar Pichai to decline such agreements. The contract grants the Department of Defense API access to Google’s AI systems on classified networks, expanding an existing relationship in which Gemini is already used by three million Pentagon staff on unclassified systems. The agreement contains clauses stating that the AI system should not be used for domestic mass surveillance or for autonomous weapons without appropriate human oversight. On the same day, Bloomberg reported that Google had quietly exited a $100 million Pentagon prize challenge to develop technology for voice-controlled drone swarms. Google withdrew in February after an internal ethics review, despite having progressed in the competition; the official reason it gave was insufficient resources. Google is attempting to draw a boundary, but it is not the boundary its employees requested.

**The Deal**

This classified AI agreement extends Google’s current contract with the Pentagon, offering API access rather than custom model creation or tailored military applications, according to a Google Public Sector representative. The Pentagon can connect directly to Google's software on classified networks, which are isolated systems not connected to the public internet and used for mission planning, intelligence analysis, and weapons targeting. The “any lawful government purpose” language aligns Google with OpenAI and Elon Musk's xAI, both of which have their own classified AI agreements with the Pentagon. The government has the ability to request changes to Google’s AI safety features and content filters, effectively allowing the Pentagon to alter the safety measures previously established by Google's researchers.

The nominal restrictions against mass surveillance and autonomous weaponry without human supervision mirror the limits OpenAI set in its earlier Pentagon agreement. However, the enforcement mechanism, the same one employees criticized in their letter, has a significant flaw: on air-gapped classified networks, Google cannot monitor the queries made, the outputs generated, or the decisions derived from those outputs. The language stating that the AI “should not be used for” these purposes is advisory rather than legally binding, and the term “appropriate human oversight and control” remains undefined. In their letter, employees argued that the only way to ensure Google does not become linked with such harmful uses is to reject classified work outright. Google accepted the work anyway, relying on language its employees had already deemed unenforceable. At Cloud Next 2026, Pichai highlighted 750 million Gemini users and a backlog of $240 billion. The same Gemini infrastructure serving those users is now being extended to classified military networks, where there is no external oversight.

**The Withdrawal**

The drone swarm withdrawal reveals another facet of the situation. Google was progressing in a $100 million Pentagon prize initiative to develop technology that lets commanders control autonomous drone swarms by voice, turning spoken instructions into digital commands for the drones. On February 11, 2026, Google informed the government that it would cease further participation, citing a lack of resources. According to Bloomberg’s investigation, the decision followed an internal ethics review. The withdrawal parallels Project Maven in 2018, when around 4,000 employees petitioned against AI analysis of drone video feeds. Google let that contract expire, and Palantir subsequently took it over. The Maven contract was then worth several million dollars; it has since ballooned into a $13 billion investment by Palantir.

The timing of these events is telling. On the same day that Google confirmed a deal granting the Pentagon classified access to Gemini for “any lawful government purpose,” its exit from a project that aimed to use its AI to manage drone swarms became public. Google is willing to place its advanced AI models on classified networks where it has no oversight, yet it is reluctant to develop technology specifically intended to command drone swarms. The distinction matters within Google’s internal ethics framework: providing API access to general-purpose models is one step removed from weaponization, even when that AI runs on networks involved in weapons targeting. Building technology specifically to manage drone swarms, by contrast, is a direct weapons application that the ethics review could not sanction. The line Google draws is between supplying the tools and constructing the weaponry, between selling access and engineering lethality. The pressing inquiry, illuminated by

Other articles

OpenAI states that it is

OpenAI rejected a Wall Street Journal report about missed targets, labeling it as clickbait. In reaction, investors sold off shares in Oracle, CoreWeave, SoftBank, and semiconductor companies. The main issue remains whether the $600 billion in commitments remains viable.

OpenAI states that it is

OpenAI rejected a Wall Street Journal report about missed targets, labeling it as clickbait. In reaction, investors sold off shares in Oracle, CoreWeave, SoftBank, and semiconductor companies. The main issue remains whether the $600 billion in commitments remains viable.

Scholly's founder, Christopher Gray, has filed a lawsuit against Sallie Mae, claiming wrongful termination and the sale of student data, which includes information about minors.

Christopher Gray created Scholly to assist students in locating scholarships. Following its acquisition by Sallie Mae, he claims he was dismissed for opposing the sale of users' personal data, which included information about minors.

Scholly's founder, Christopher Gray, has filed a lawsuit against Sallie Mae, claiming wrongful termination and the sale of student data, which includes information about minors.

Christopher Gray created Scholly to assist students in locating scholarships. Following its acquisition by Sallie Mae, he claims he was dismissed for opposing the sale of users' personal data, which included information about minors.

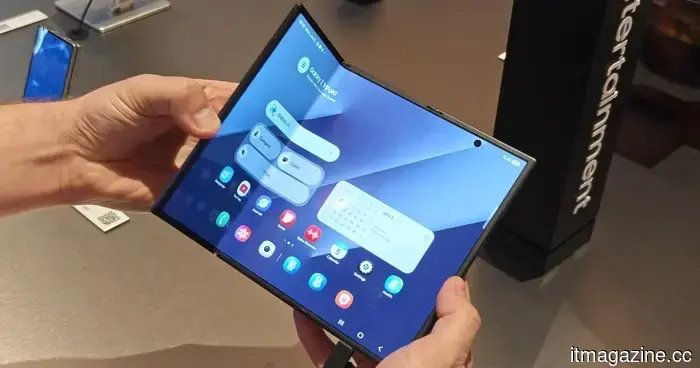

The Google Play Store will improve the process of locating apps that have an appealing appearance on tablets and foldable devices.

The forthcoming v51.2 update of the Google Play Store introduces a large-screen optimization badge to app listings, clearly indicating which apps are designed for tablets and foldable devices.

The Google Play Store will improve the process of locating apps that have an appealing appearance on tablets and foldable devices.

The forthcoming v51.2 update of the Google Play Store introduces a large-screen optimization badge to app listings, clearly indicating which apps are designed for tablets and foldable devices.

Samsung is facing a potential ban on its foldable phones in the US due to a patent dispute.

A recent lawsuit alleges that Samsung has violated patents related to foldable phones and is requesting a ban on sales in the US, although these allegations have yet to be substantiated.

Samsung is facing a potential ban on its foldable phones in the US due to a patent dispute.

A recent lawsuit alleges that Samsung has violated patents related to foldable phones and is requesting a ban on sales in the US, although these allegations have yet to be substantiated.

Google has finalized a classified AI agreement with the Pentagon for

Google provided the Pentagon with classified access to Gemini for "any lawful purpose" just one day after more than 580 employees voiced their objections. Additionally, the company withdrew from a $100 million drone swarm competition following an ethics assessment.

Google has finalized a classified AI agreement with the Pentagon for

Google provided the Pentagon with classified access to Gemini for "any lawful purpose" just one day after more than 580 employees voiced their objections. Additionally, the company withdrew from a $100 million drone swarm competition following an ethics assessment.

The LibrePods app, designed to enhance compatibility between AirPods and Android devices, has finally resolved its most significant problem.

LibrePods, the application that enables AirPods functionalities on Android devices, is now more user-friendly thanks to its availability on the Play Store and a decreased dependence on potentially risky root access.

The LibrePods app, designed to enhance compatibility between AirPods and Android devices, has finally resolved its most significant problem.

LibrePods, the application that enables AirPods functionalities on Android devices, is now more user-friendly thanks to its availability on the Play Store and a decreased dependence on potentially risky root access.

Google has entered into a classified AI agreement with the Pentagon for

Google granted the Pentagon classified access to Gemini for "any lawful purpose" just one day after more than 580 employees staged a protest. Additionally, the company withdrew from a $100 million drone swarm competition following an ethics review.