How web intelligence is driving the next generation of AI infrastructure.

For many years, the web intelligence sector has served as a dependable support system for data-driven advances across industries. As big data expanded, maintaining a consistent data flow placed growing demands on infrastructure. More recently, artificial intelligence has made remarkable progress. The story of how the web intelligence sector adapted to growing scale and complexity is intertwined with the latest advances in AI and in technology as a whole.

Infrastructure for Handling Comprehensive Data All At Once

As AI companies approached 2025, they raced to develop multimodal tools that could handle both audio and video data. This ambitious goal placed immediate strain on data infrastructure. Video datasets are significantly "heavier" than text: they are harder to process and take far more resources to collect at the scale needed to train sophisticated models.

We foresaw early on that managing multimodal data would soon become a key frontier in AI. Even with preparation, powering multimodal AI presented many challenges.

For instance, creator consent remains a contentious issue in AI training, particularly for complex content such as scripted, high-quality videos. Yet even once consent is granted, transforming licensed videos into ethically sourced, AI-ready datasets demands considerable effort and infrastructure.

We introduced the Video Data API to manage the entire process: from locating relevant videos and channels to extracting public data and metadata, all while eliminating the need for teams to create and manage their own scrapers. Such solutions act as freeway tunnels, enabling public and licensed data to transfer swiftly from the internet to AI laboratories.

However, the transfer of large video files on a massive scale poses a throughput challenge. High-Bandwidth Proxies address this issue by offering over 200 Gbps of dedicated bandwidth and long-lasting connections optimized for video downloads. Traditional infrastructure was not designed to handle such substantial data transfers simultaneously.
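A rough back-of-the-envelope calculation shows why bandwidth dominates at this scale. The sketch below is illustrative only: the 1 PB corpus size and the 1 Gbps comparison link are assumptions, the `transfer_hours` helper is hypothetical, and the result ignores protocol overhead, retries, and rate limits.

```python
def transfer_hours(dataset_terabytes: float, bandwidth_gbps: float) -> float:
    """Ideal time in hours to move a dataset over a link, ignoring overhead."""
    bits = dataset_terabytes * 1e12 * 8        # decimal terabytes -> bits
    seconds = bits / (bandwidth_gbps * 1e9)    # gigabits per second -> bits per second
    return seconds / 3600


# Assumed 1 PB (1,000 TB) video corpus. 200 Gbps is the figure quoted above;
# 1 Gbps is an assumed comparison link.
for gbps in (1, 200):
    print(f"{gbps:>3} Gbps: {transfer_hours(1000, gbps):,.1f} hours")
```

Under these assumptions the corpus moves in roughly 11 hours at 200 Gbps, versus about 2,200 hours (around three months) at 1 Gbps.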

Ongoing Data Access with Headless Browsers

The conversation around AI agents evolved rapidly throughout 2024, as industry experts realized that the pressing question was not what agents could automate but whether they had reliable web access at scale.

It became clear that the answer was often no. As websites grew more complex, stable automated access became harder to maintain, particularly on JavaScript-heavy sites. Systems that perform user-directed online actions are incomplete without a crucial component.

That component is the headless browser, which can adapt to dynamic website layouts and carry out actions such as clicking and scrolling: straightforward for people, but complex for the machines intended to assist us.
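To see why a plain HTTP fetch falls short on JavaScript-heavy sites, consider the minimal sketch below. It uses only Python's standard `html.parser`. The page markup and the `/api/products` endpoint are hypothetical, chosen to mimic a page whose content is injected by a script after load.

```python
from html.parser import HTMLParser

# Hypothetical page: the product list is empty in the raw HTML and is only
# filled in by JavaScript once the page runs in a browser.
RAW_HTML = """
<html><body>
  <h1>Products</h1>
  <ul id="products"></ul>
  <script>
    fetch("/api/products").then(r => r.json()).then(items => {
      for (const item of items) {
        const li = document.createElement("li");
        li.textContent = item.name;
        document.getElementById("products").appendChild(li);
      }
    });
  </script>
</body></html>
"""


class ListItemCounter(HTMLParser):
    """Counts <li> elements present in the markup as delivered."""

    def __init__(self):
        super().__init__()
        self.items = 0

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            self.items += 1


counter = ListItemCounter()
counter.feed(RAW_HTML)
print(f"List items visible without running JavaScript: {counter.items}")
```

The static parse finds no list items, because they exist only after the script runs. A headless browser executes that script, so the populated list becomes available to whatever automation sits on top of it.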

Adjusting to AI-Powered Online Search Engines

From mid-2024, traditional search result pages began to include LLM-generated answers, AI summaries, and interactive conversational interfaces. Consequently, organizations now need to monitor their brand presence within these AI-generated responses, a challenge distinct enough to have created a new category: Generative Engine Optimization (GEO).

Targeted web scraper APIs for platforms like ChatGPT, Perplexity, and other AI search tools acknowledge that the concept of "online search" now encompasses more than it did merely a few years ago. Specifically, these tools extract rich, geo-targeted LLM insights as they appear to real users, enabling organizations to assess their brand perception, observe competitors in AI responses, and gauge their visibility within this new layer of search results.
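As a simple illustration of what "gauging visibility" can mean in practice, the sketch below computes the share of collected AI responses that mention each brand. It is not any vendor's method: the `mention_share` helper, the sample responses, and the brand names are all hypothetical, and real monitoring would also need to handle aliases, sentiment, and ranking within an answer.

```python
import re
from collections import Counter


def mention_share(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Fraction of responses that mention each brand at least once."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match on the brand name.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


# Hypothetical AI-generated answers collected for one query.
responses = [
    "For trail running, Acme and Borealis are both popular choices.",
    "Many reviewers recommend Acme for its durability.",
    "Budget options include Cascade.",
    "Acme leads on comfort, while Cascade is cheaper.",
]
share = mention_share(responses, ["Acme", "Borealis", "Cascade"])
for brand, fraction in share.items():
    print(f"{brand}: {fraction:.0%}")
```

Tracking this fraction over time, per query and per region, gives a basic view of how often a brand and its competitors surface in AI answers.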

For AI companies, these scrapers offer additional data sources for prompt engineering and model development. The ability to capture structured data from AI search interfaces at scale reflects how online information discovery is being redefined in real time.

Turnkey Datasets Over Extraction Tools

While the industry's focus has recently been captivated by the rapid growth of AI, web data remains vital for sectors that relied heavily on data long before LLMs emerged. E-commerce, in particular, has always depended on access to high-quality competitive intelligence: pricing information, inventory status, customer reviews, product catalogs, and more. Although this reality has not changed, expectations regarding how such data should be delivered have evolved significantly.

The E-Commerce Web Data Platform reflects a broader trend where buyers increasingly prefer finished datasets over extraction tools. In essence, organizations expect clean, structured datasets ready for immediate use, with the extraction process already completed. For providers, this shift presents new opportunities to enhance value and improve profits.
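The gap between raw extraction output and a "finished" dataset can be sketched as a normalization step. The example below is a minimal illustration under stated assumptions: the field names, the `Product` record, the `normalize` helper, and the sample rows are hypothetical, and prices are assumed to use a leading currency symbol with a dot as the decimal separator.

```python
import re
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Product:
    sku: str
    price: Decimal
    currency: str
    in_stock: bool


# Assumed mapping; a real pipeline would cover more currencies and formats.
CURRENCY_SYMBOLS = {"$": "USD", "€": "EUR", "£": "GBP"}


def normalize(raw: dict) -> Product:
    """Turn one raw scraped row into a typed, consistent record."""
    price_text = raw["price"].strip()
    currency = CURRENCY_SYMBOLS[price_text[0]]
    # Drop the symbol and thousands separators, keep digits and the dot.
    amount = Decimal(re.sub(r"[^\d.]", "", price_text))
    in_stock = raw["availability"].strip().lower() == "in stock"
    return Product(raw["sku"].strip().upper(), amount, currency, in_stock)


# Hypothetical rows as they might come out of an extraction tool.
raw_rows = [
    {"sku": " ab-100 ", "price": "$1,299.00", "availability": "In Stock"},
    {"sku": "cd-200", "price": "€49.90", "availability": "out of stock"},
]
dataset = [normalize(row) for row in raw_rows]
for product in dataset:
    print(product)
```

Buyers of turnkey datasets expect this kind of cleanup, along with deduplication and validation, to have been done before delivery.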

Lower Technical Barriers

In theory, public web data is a shared resource accessible to all. In practice, extracting it at scale requires technical expertise, substantial funding, and the capacity to sustain ongoing maintenance as websites continually change. Platforms that hold data often intentionally make the public data they control hard to access, which means only companies with significant budgets can carry out the kind of data collection that competitive decision-making requires.

AI offers a chance to change this dynamic. Oxylabs AI Studio comprises five tools that operate via natural language prompts: AI-Crawler, AI-Scraper, Browser Agent, AI-Search, and AI-Map. Users specify the data they need instead of writing

Other articles

James Zou from Stanford aims for a $1 billion valuation for his AI physiology startup, which is supported by research published in Nature and an FDA-approved cardiac AI.

James Zou from Stanford aims for a $1 billion valuation for his AI physiology startup, which is supported by research published in Nature and an FDA-approved cardiac AI.

James Zou from Stanford aims for a $1 billion valuation for his AI physiology startup, which is supported by research published in Nature and an FDA-approved cardiac AI.

James Zou from Stanford aims for a $1 billion valuation for his AI physiology startup, which is supported by research published in Nature and an FDA-approved cardiac AI.

Meta has finalized a multibillion-dollar agreement for Amazon's Graviton5 chips as the demand for AI computing exceeds its $135 billion capital expenditure budget.

Meta plans to implement tens of millions of Amazon Graviton5 CPU cores in AWS data centers for agentic AI. This agreement is part of a procurement initiative exceeding $200 billion that includes Nvidia, AMD, CoreWeave, Broadcom, and now a direct competitor.

Meta has finalized a multibillion-dollar agreement for Amazon's Graviton5 chips as the demand for AI computing exceeds its $135 billion capital expenditure budget.

Meta plans to implement tens of millions of Amazon Graviton5 CPU cores in AWS data centers for agentic AI. This agreement is part of a procurement initiative exceeding $200 billion that includes Nvidia, AMD, CoreWeave, Broadcom, and now a direct competitor.

Meta has entered into a multibillion-dollar agreement for Amazon's Graviton5 chips as the demand for AI computing exceeds its $135 billion capital expenditure budget.

Meta plans to use tens of millions of Amazon Graviton5 CPU cores in AWS data centers for agentic AI. This agreement is part of a procurement initiative exceeding $200 billion, which includes Nvidia, AMD, CoreWeave, Broadcom, and now a direct rival.

Meta has entered into a multibillion-dollar agreement for Amazon's Graviton5 chips as the demand for AI computing exceeds its $135 billion capital expenditure budget.

Meta plans to use tens of millions of Amazon Graviton5 CPU cores in AWS data centers for agentic AI. This agreement is part of a procurement initiative exceeding $200 billion, which includes Nvidia, AMD, CoreWeave, Broadcom, and now a direct rival.

Ensuring the future of AI: How Tresor Lisungu Oteko is connecting cloud systems with post-quantum security.

As AI adoption grows, security risks are increasing. Tresor Lisungu Oteko is focusing on the convergence of cloud, AI, and cryptography to develop more secure enterprise systems.

Ensuring the future of AI: How Tresor Lisungu Oteko is connecting cloud systems with post-quantum security.

As AI adoption grows, security risks are increasing. Tresor Lisungu Oteko is focusing on the convergence of cloud, AI, and cryptography to develop more secure enterprise systems.

The Porsche Cayenne Coupe Electric makes its debut, boasting 1,139 horsepower and a range of 669 km, as the company shifts away from its all-electric strategy.

The Cayenne Coupe Electric delivers 1,139 horsepower, can be charged in 16 minutes, and has a starting price of $113,800. Porsche is introducing it alongside its internal combustion engine variants following a 93% drop in profits and a strategic pullback from electrification.

The Porsche Cayenne Coupe Electric makes its debut, boasting 1,139 horsepower and a range of 669 km, as the company shifts away from its all-electric strategy.

The Cayenne Coupe Electric delivers 1,139 horsepower, can be charged in 16 minutes, and has a starting price of $113,800. Porsche is introducing it alongside its internal combustion engine variants following a 93% drop in profits and a strategic pullback from electrification.

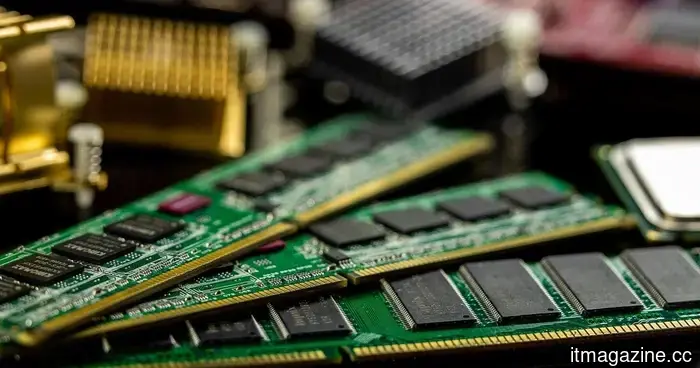

Why RAM Prices Are High in 2026 — And What PC Buyers Need to Consider

RAM prices have increased significantly due to the demand from AI and supply limitations. Here’s an overview of the reasons for the rise, potential duration, and advice for PC builders moving forward.

Why RAM Prices Are High in 2026 — And What PC Buyers Need to Consider

RAM prices have increased significantly due to the demand from AI and supply limitations. Here’s an overview of the reasons for the rise, potential duration, and advice for PC builders moving forward.

How web intelligence is driving the next generation of AI infrastructure.

Web intelligence is advancing to accommodate the scale and intricacy of contemporary AI systems, transitioning from multimodal AI to LLM search and data pipelines.