Unauthorized individuals accessed Anthropic's restricted Mythos AI model.

A small group of unauthorized individuals accessed Claude Mythos Preview, Anthropic’s highly restricted cybersecurity AI model, on the same day the company publicly introduced it, according to a Bloomberg News report from April 21. The group reportedly guessed the model's URL based on its understanding of Anthropic’s URL formatting for other models.

The group, which communicates through a private Discord channel focused on gathering information about unreleased AI models, has used Mythos regularly since gaining access. It provided Bloomberg with evidence, including screenshots and a live demonstration.

Anthropic confirmed it is looking into the claims: “We’re investigating a report stating unauthorized access to Claude Mythos Preview through one of our third-party vendor environments.” The company also noted that there is currently no indication that the unauthorized access has affected Anthropic’s core systems or expanded beyond the specific vendor environment.

An employee from a third-party contractor collaborating with Anthropic seems to have played a role in enabling the group's access, according to the report.

The gravity of the breach is linked to the nature of the model itself. Anthropic unveiled Mythos Preview and the associated Project Glasswing initiative on April 7, 2026. The company had intentionally withheld the model from general release because of its offensive cyber capabilities. During testing, Mythos autonomously detected thousands of zero-day vulnerabilities across all major operating systems and web browsers and created working exploits, including one that chained four vulnerabilities in a browser to escape both the renderer and operating system sandboxes, an accomplishment that typically requires extensive expert effort.

Anthropic engineers with no formal security training tasked the model with finding remote code execution vulnerabilities overnight and found complete, functioning exploits by morning. The company expressed concern that the same capabilities that make the model valuable for defense could be catastrophic if misused.

Project Glasswing was intended to address these concerns. Instead of a public launch, Anthropic provided Mythos access for defensive security tasks to 12 named launch partners: Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks, along with Anthropic itself. Around 40 additional organizations were also granted access. The initiative included $100 million in usage credits and $4 million in direct grants to open-source security organizations. This limited rollout was Anthropic’s deliberate attempt to give defenders an advantage over attackers before a model with such capabilities became widely available.

The unauthorized access undermines this strategy without completely nullifying it: although the group reportedly claimed their motivations were based on curiosity, intent is not a reliable safeguard when the tool can autonomously generate weaponizable exploits.

The breach also has political implications, occurring just after President Trump mentioned on CNBC that a deal between the Pentagon and Anthropic was “possible” and that the company was “shaping up.” At the same time, Anthropic is suing the Department of Defense over the department's classification of the company as a supply chain risk, with the lawsuit focusing specifically on the safety and control of its AI.

An incident of unauthorized access, even when seemingly originating from a third-party vendor environment instead of Anthropic’s own infrastructure, provides support to critics within the administration who argue that Anthropic is unable to effectively manage access to its tools. It also complicates the company’s legal case, which partially relies on its assertion that it enforces strict safety and access controls for its most advanced models.

The means of access—an educated guess about the model's URL, facilitated by knowledge of Anthropic’s conventions for other model endpoints—points to a specific type of failure that differs from a typical data breach or intrusion. The group didn't bypass Anthropic’s security measures but rather exploited the gap between Anthropic’s controls on its systems and those of a third-party vendor with access credentials. This distinction is important for the investigation and for how the incident may be interpreted within the broader AI industry: it reflects a failure of vendor security as much as a failure of model governance. Nonetheless, the outcome remains the same.

Other articles

Bond introduces a post-feed social network that utilizes AI memories to combat doomscrolling, although its data model has sparked some concerns.

Bond does not feature a feed or infinite scrolling. Its AI analyzes your photos and videos to suggest real-life activities. The business model aims to monetize this data through licensing.

Bond introduces a post-feed social network that utilizes AI memories to combat doomscrolling, although its data model has sparked some concerns.

Bond does not feature a feed or infinite scrolling. Its AI analyzes your photos and videos to suggest real-life activities. The business model aims to monetize this data through licensing.

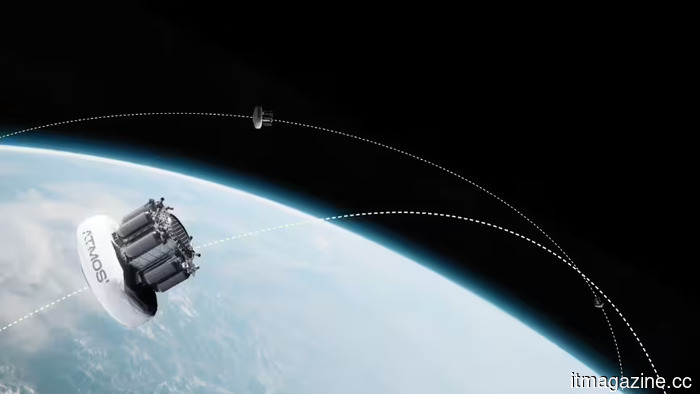

ATMOS secures €25.7 million to enable regular orbital returns.

ATMOS Space Cargo has completed a €25.7M Series A funding round to finance three PHOENIX 2 orbital return missions, establish a new defense venture called ATMOS WORKS, and develop PHOENIX 3.

Meta is implementing tracking software on the computers of its employees in the US.

Meta is implementing software on the computers of its US employees to track mouse movements, record keystrokes, and capture screenshots for the purpose of training its AI agents.

NeoCognition's investment of $40 million in self-learning AI agents

NeoCognition secures $40 million funding from Cambium Capital, Walden Catalyst, Vista, Intel CEO Lip-Bu Tan, and Databricks to develop self-learning AI agents for businesses.

ATMOS secures €25.7 million to enable regular orbital returns.

ATMOS Space Cargo has completed a €25.7M Series A funding round to finance three PHOENIX 2 orbital return missions, establish a new defense venture called ATMOS WORKS, and develop PHOENIX 3.

Meta is implementing tracking software on the computers of its employees in the US.

Meta is implementing software on the computers of its US employees to track mouse movements, record keystrokes, and capture screenshots for the purpose of training its AI agents.

NeoCognition's investment of $40 million in self-learning AI agents

NeoCognition secures $40 million funding from Cambium Capital, Walden Catalyst, Vista, Intel CEO Lip-Bu Tan, and Databricks to develop self-learning AI agents for businesses.

Japanet increases its VC fund to $200 million after securing remarkable returns from its early investments in Anthropic and xAI.

Japanet, a leading player in Japanese TV shopping, increases its venture fund from $50 million to $200 million following initial investments in Anthropic, xAI, and OpenAI via Pegasus Tech Ventures.

Japanet increases its VC fund to $200 million after securing remarkable returns from its early investments in Anthropic and xAI.

Japanet, a leading player in Japanese TV shopping, increases its venture fund from $50 million to $200 million following initial investments in Anthropic, xAI, and OpenAI via Pegasus Tech Ventures.

OpenAI transitions ChatGPT advertising to a cost-per-click model as the $60 CPM declines over ten weeks and ad revenue goals reach $2.5 billion.

OpenAI has shifted ChatGPT advertising from a CPM model to a CPC model, with bids ranging from $3 to $5, following a decline in launch pricing. The firm anticipates generating $2.5 billion in ad revenue this year, despite facing $14 billion in losses.

OpenAI transitions ChatGPT advertising to a cost-per-click model as the $60 CPM declines over ten weeks and ad revenue goals reach $2.5 billion.

OpenAI has shifted ChatGPT advertising from a CPM model to a CPC model, with bids ranging from $3 to $5, following a decline in launch pricing. The firm anticipates generating $2.5 billion in ad revenue this year, despite facing $14 billion in losses.

Unauthorized individuals accessed Anthropic's restricted Mythos AI model.

On the day of its launch, a Discord group was able to access Anthropic’s Mythos AI model by deducing its URL through a third-party vendor environment.