Character.AI transforms books into roleplay bots while addressing ongoing safety issues.

The AI chatbot platform Character.AI has launched a new "Books" feature that lets users immerse themselves in classic literature and engage with its characters through roleplay. While the feature advances the platform's creative ambitions, it arrives amid increasing scrutiny of the real-world dangers associated with AI chatbots.

From Reading to Roleplay

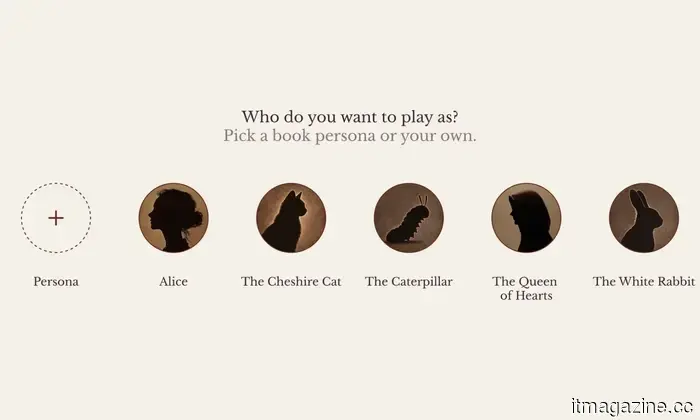

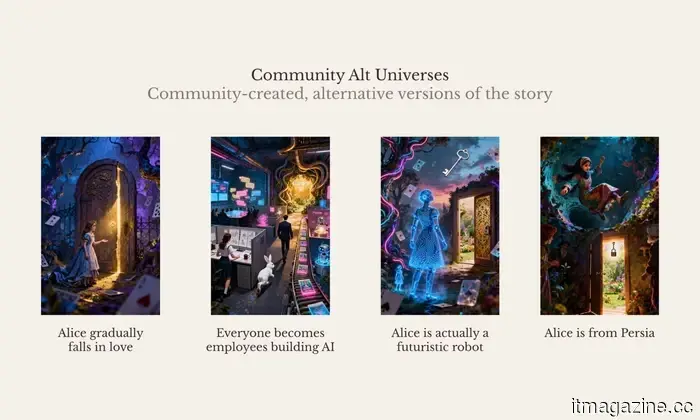

This new feature turns public domain literature into interactive experiences, allowing users to participate actively in stories like Alice in Wonderland or Pride and Prejudice instead of being mere readers. Users have the option to adhere to the original plot or explore alternative narratives, transforming literature into a dynamic, AI-powered roleplaying experience.

This innovation builds on Character.AI’s core concept, in which users create and interact with bots based on fictional or real individuals, blending storytelling with simulated connections. Researchers have observed that such exchanges can resemble engaging with fictional characters in books or games, but with significantly greater emotional engagement due to real-time dialogue.

A Platform Under Pressure

The rollout occurs during a delicate period for the company. Character.AI has been subject to lawsuits and criticism linking its chatbots to mental health issues among young users. Some families have claimed that extended interactions with AI characters have led to emotional dependency, isolation, and even suicide.

One widely publicized case involved a teenager who formed an intense emotional bond with a chatbot; the legal allegations in that case state that the AI did not respond adequately to indications of self-harm.

More generally, experts caution that chatbots may inadvertently reinforce unhealthy thought patterns or fail to provide effective intervention during mental health crises, especially when users view them as substitutes for genuine human support.

Why This Matters Now

Character.AI's Books feature signals a broader change in media consumption. Users are no longer just reading stories; they are entering them and forming interactive, potentially emotional bonds with AI-generated characters.

While this opens avenues for creative expression, it also raises worries about the extent to which users—particularly younger ones—might immerse themselves in AI-generated environments. The combination of narrative involvement and conversational AI can heighten emotional connections, making it more challenging to differentiate between fiction and reality.

What Comes Next

In light of the growing criticism, Character.AI has already started implementing safety protocols, including limiting certain features for minors and exploring more structured experiences like the Books mode.

Moving forward, the challenge will be to find a balance between innovation and responsibility. Regulators, researchers, and technology firms are increasingly concentrating on establishing safety standards for AI interactions, particularly in emotionally delicate situations.

As AI develops from a mere tool to a companion-like presence, features like Books may symbolize the future of entertainment—while also serving as a critical test for how that future can be safely realized.
