Stanford's yearly AI report reveals a divide between those immersed in AI and the general public.

The 2026 AI Index from Stanford’s Institute for Human-Centered AI highlights a growing divide between expert optimism and public unease regarding AI. Anger towards AI is rising among Gen Z, and a decline in employment for younger workers in AI-related fields is already evident. The US also has the lowest level of trust in its government’s ability to regulate AI of any country surveyed.

Stanford University's Institute for Human-Centered Artificial Intelligence (HAI) published its annual AI Index Report on Monday. Its most compelling finding concerns neither model performance nor investment amounts, but the widening chasm between those developing AI technologies and those experiencing their effects.

Throughout the 423-page report, expert insights and public perspectives frequently diverge. The report concludes that "AI experts and the US public have disagreements on nearly all aspects of AI’s future," with the key exception being that both groups agree AI will negatively impact elections and personal relationships.

The statistics underscore the stark contrast. A Pew Research study referenced in the report found that only 10% of Americans expressed more excitement than concern regarding the increased integration of AI into everyday life. Conversely, among AI experts surveyed, 56% believed AI would positively impact the US over the next two decades.

The widest gaps appear in perceptions of AI's effects on the economy and the job market: 69% of experts deemed AI beneficial for the economy, while only 21% of the general public agreed. Asked whether AI would have a positive influence on how people do their jobs, 73% of experts said it would, against just 23% of the public. Furthermore, while 84% of experts believed AI would predominantly enhance medical care within the next 20 years, only 44% of the American public concurred. Meanwhile, approximately 64% of Americans anticipate that AI will lead to job reductions in the next 20 years.

The report indicates that job opportunities for younger workers in AI-related fields have already begun to wane, suggesting that public concerns might not be just theoretical.

The attitude of Gen Z towards AI is particularly noteworthy. A Gallup poll conducted for the Walton Family Foundation and GSV Ventures in February and March 2026, which surveyed 1,572 individuals aged 14 to 29, found that the share of Gen Z respondents who described themselves as excited about AI fell from 36% in 2025 to 22% in 2026. Similarly, the share feeling hopeful declined from 27% to 18%, while the share expressing anger rose from 22% to 31%. This shift occurs even though nearly half of Gen Z use AI daily or weekly.

Zach Hrynowski, a senior education researcher at Gallup, linked the rising frustration to deteriorating prospects for entry-level job seekers, noting that the oldest members of Gen Z, who are most exposed to the workforce, exhibit the most anger.

Regarding regulation, the geographic discrepancy is particularly striking. The US demonstrated the lowest trust in its government’s capacity to regulate AI among the countries surveyed, at just 31%. In contrast, Singapore rated the highest, at 81%.

Among Americans, 41% felt that federal AI regulation would be insufficient, while only 27% thought it would be excessive. A separate Pew survey of 25 countries found that the EU is perceived as more trustworthy than the US or China in effectively regulating AI.

The report also outlines the disconnect between AI's technological advancements and its societal pitfalls. AI reached 53% of the population more rapidly than personal computers or the internet did, and 88% of organizations now report using AI. Over the same period, documented incidents involving AI systems, classified as harms or near-harms, rose from 233 in 2024 to 362 in 2025.

This growth comes with a rising environmental cost: training xAI’s Grok 4 reportedly produced over 72,000 tonnes of CO₂, and the water needed for GPT-4o inference tasks is estimated to be sufficient for 12 million people.

The report also points out, somewhat ironically, that despite the rapid development of AI, leading models read analog clocks correctly only about 50% of the time, compared with roughly 90% accuracy for humans without specialized training.

Stanford's report acknowledges its own constraints: it is backed financially by Google, OpenAI, and others, and was created with assistance from ChatGPT and Claude. Its assertion that “Responsible AI is not progressing at the same pace as AI capability, with safety benchmarks falling behind and incidents rising sharply” serves as an implicit critique of the very organizations that provided funding for its release.

Other articles

Amazon has finalized an agreement to purchase Globalstar in a deal worth $11.6 billion.

Amazon has reached an agreement to purchase Globalstar for $11.6 billion and will handle the powering of Emergency SOS features on iPhone. The Amazon Leo D2D service is set to launch in 2028.

Amazon has finalized an agreement to purchase Globalstar in a deal worth $11.6 billion.

Amazon has reached an agreement to purchase Globalstar for $11.6 billion and will handle the powering of Emergency SOS features on iPhone. The Amazon Leo D2D service is set to launch in 2028.

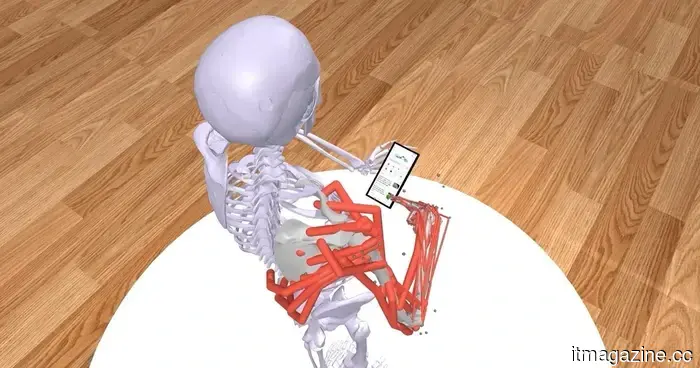

We use our phones constantly throughout the day, and researchers have evaluated which ones are the most exhausting.

Scientists developed an AI model that mimics the amount of physical effort exerted by your finger when using a smartphone, and the findings could be quite surprising.

We use our phones constantly throughout the day, and researchers have evaluated which ones are the most exhausting.

Scientists developed an AI model that mimics the amount of physical effort exerted by your finger when using a smartphone, and the findings could be quite surprising.

Why contemporary work environments are reconsidering hydration and its impact on employee performance.

Learn why ensuring proper hydration is increasingly important in today's work environments and how enhanced access to water can boost employee productivity, concentration, and overall effectiveness.

Why contemporary work environments are reconsidering hydration and its impact on employee performance.

Learn why ensuring proper hydration is increasingly important in today's work environments and how enhanced access to water can boost employee productivity, concentration, and overall effectiveness.

Synera secures $40 million to integrate agentic AI into engineering processes.

Synera has secured $40 million in a Series B funding round to expand its agentic AI platform for engineering, which is already utilized by NASA, BMW, Airbus, Volvo Trucks, and Hyundai.

Synera secures $40 million to integrate agentic AI into engineering processes.

Synera has secured $40 million in a Series B funding round to expand its agentic AI platform for engineering, which is already utilized by NASA, BMW, Airbus, Volvo Trucks, and Hyundai.

Helical secures $10 million in seed funding to transform biological foundation models into systems.

Helical has secured $10 million to develop the application layer that enables the reproducibility of bio foundation models in pharmaceutical research and development. It is already in use with Pfizer.

Helical secures $10 million in seed funding to transform biological foundation models into systems.

Helical has secured $10 million to develop the application layer that enables the reproducibility of bio foundation models in pharmaceutical research and development. It is already in use with Pfizer.

OpenAI's valuation of $852 billion is being questioned by its investors.

Certain investors in OpenAI are raising concerns about its valuation of $852 billion as the company updates its strategic plan. A leaked memo claims that Anthropic has exaggerated its run rate of $30 billion by $8 billion.

OpenAI's valuation of $852 billion is being questioned by its investors.

Certain investors in OpenAI are raising concerns about its valuation of $852 billion as the company updates its strategic plan. A leaked memo claims that Anthropic has exaggerated its run rate of $30 billion by $8 billion.

Stanford's yearly AI report reveals a divide between those immersed in AI and the general public.

The 2026 AI Index from Stanford reveals a significant disagreement between AI specialists and the general public regarding almost all aspects of AI's future. Just 10% of Americans feel more enthusiasm than apprehension about it.