The Emergence of AI in Penetration Testing: Analyzing the Future of Cybersecurity

Artificial intelligence has evolved from being merely a lab experiment to becoming an integral part of everyday software. It is seamlessly integrated into various applications, aiding developers in coding, supporting analysts with research, and enhancing tools utilized by banks, hospitals, and technology firms. In recent years, large language models (LLMs) have transitioned from a point of curiosity to a fundamental component of many digital products.

However, amidst the rush to develop advanced systems, one critical area remains underdeveloped: security. The behavior of AI systems significantly differs from that of traditional software, prompting the cybersecurity sector to reconsider how protective measures function. Consequently, a new field known as AI penetration testing, often called AI pentesting, is emerging within the security landscape.

### The New Security Risks Associated with AI Systems

Most software operates in predictable manners. With a given input, the code follows a series of predefined rules to produce an output. Traditionally, security testing has relied on this consistent structure.

Conversely, large language models operate quite differently. They process language, infer intent, and generate responses based on probabilities rather than rigid logic. This capability can lead to impressive outcomes but can also create vulnerabilities that security teams may not anticipate.

Some of the risks currently being examined by security professionals include:

- Prompt injection attacks, where harmful input alters the model’s behavior

- Data leakage, where concealed training data surfaces in responses

- Model manipulation, where attackers sway AI decisions through crafted prompts

- Unsafe API actions, where an AI assistant inadvertently triggers unintended commands

These threats become increasingly critical when AI systems are linked to databases, APIs, or automated processes.

### The Increased Stakes When AI Interfaces with Real Systems

Many contemporary AI applications do not function independently. They frequently serve as the front end for intricate systems operating behind the scenes. Consider a typical AI-powered tool today; it may involve reading corporate documents, accessing customer databases, launching backend services, or requesting data from an external API. Security experts highlight that risks often arise not from the model itself, but from its interactions with other systems. Even a seemingly innocent prompt can lead an AI Assistant to access sensitive information or execute unintended actions.

### The Expanding Domain of AI Pentesting

To assess these risks, security specialists are adapting conventional penetration testing methods to accommodate AI environments.

AI pentesting focuses on how language models perform when exposed to hostile inputs, unexpected prompts, or altered data sources. Instead of probing network ports or software flaws, testers investigate how AI systems comprehend language and the impact this understanding has on downstream systems.

Among the engineers delving into this area is Nayan Goel, a Principal Application Security Engineer whose work centers on the interplay between AI systems and modern application security.

Recent research explores the implications of transitioning large language models from controlled environments to real-world software systems. As AI interacts with APIs, data pipelines, and automated workflows, the potential failure points expand rapidly.

### Research is Aligning with Emerging Needs

For an extended period, most AI security research has remained largely within academic circles. Scholars analyzed theoretical attacks or scrutinized how machine-learning systems might be exploited.

Goel has made valuable contributions through research on topics like federated learning for secure AI models, reinforcing AI systems in challenging environments, and protecting autonomous systems. His work has been showcased at international conferences such as IEEE and Springer, indicating a growing acknowledgment of these challenges in both academic and industry realms.

### Establishing Security Standards for AI Applications

With the increasing deployment of AI tools, the necessity for standardized security guidelines is becoming clear. Organizations like OWASP have begun to issue guidance specifically geared toward generative AI systems and large language models (LLMs).

Goel has also played a role in community initiatives aimed at defining security practices for AI-enhanced systems, including contributions linked to OWASP’s agentic security projects.

These guidelines mark an early effort to bring structure to a rapidly evolving field. The intent of these initiatives is to assist developers in embedding security controls into AI applications before vulnerabilities become widespread.

### Transitioning Research into Practical Security Tools

In addition to research frameworks, security teams require practical methods for testing AI systems.

To address this need, Goel's recent efforts have included developing and evaluating techniques designed to uncover vulnerabilities such as prompt injection across various AI models, an area gaining attention as generative systems become more prevalent. A notable feature of this tool is its multi-agent testing approach, where different analyzer agents assess each other's behavior during testing. This setup simulates coordinated attack strategies that might occur in real-world contexts.

A version of this framework was presented at events like BSides Chicago, where researchers and practitioners discuss strategies for assessing the resilience of AI systems in real-world environments.

### AI's Role in Defense

While AI brings new security challenges, it also has the potential to address some of them. Security researchers are exploring machine-learning systems that monitor behavior patterns, identify suspicious activities, and automate threat detection.

### Educating Future Security Engineers

An essential aspect of the AI security landscape is education. Universities

Other articles

Space data centers: SpaceX and Blue Origin compete for orbital dominance as scientists raise questions about the underlying physics.

SpaceX has submitted a proposal for one million data center satellites, while Blue Origin has aimed for 51,600. Experts indicate that the principles of cooling, radiation, and expenses render orbital computing a prospect that is decades away.

Space data centers: SpaceX and Blue Origin compete for orbital dominance as scientists raise questions about the underlying physics.

SpaceX has submitted a proposal for one million data center satellites, while Blue Origin has aimed for 51,600. Experts indicate that the principles of cooling, radiation, and expenses render orbital computing a prospect that is decades away.

Thinking AI can improve your dating life? This actor's experience suggests otherwise.

Actor and writer Rhik Samadder asked AI to generate his dating profile, messages, and conversation starters, but soon discovered that the chatbot's confidence quickly crumbles in actual dating situations.

Thinking AI can improve your dating life? This actor's experience suggests otherwise.

Actor and writer Rhik Samadder asked AI to generate his dating profile, messages, and conversation starters, but soon discovered that the chatbot's confidence quickly crumbles in actual dating situations.

Even Realities introduces Even Hub to transform G2 smart glasses into a comprehensive app ecosystem.

Even Realities has officially introduced Even Hub, a new app store and developer platform tailored for its G2 smart glasses, representing a major advancement in the functionality of wearable technology. The platform is currently active and available to all G2 users via the Even Realities app, enabling them to explore and install third-party applications […]

Even Realities introduces Even Hub to transform G2 smart glasses into a comprehensive app ecosystem.

Even Realities has officially introduced Even Hub, a new app store and developer platform tailored for its G2 smart glasses, representing a major advancement in the functionality of wearable technology. The platform is currently active and available to all G2 users via the Even Realities app, enabling them to explore and install third-party applications […]

Microsoft introduces three proprietary AI models as a direct competition to OpenAI.

Microsoft launched MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 through Foundry, developed by the superintelligence team led by Mustafa Suleyman. These models are in direct competition with OpenAI.

Microsoft introduces three proprietary AI models as a direct competition to OpenAI.

Microsoft launched MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 through Foundry, developed by the superintelligence team led by Mustafa Suleyman. These models are in direct competition with OpenAI.

NordVPN has introduced a new complimentary tool that reveals the extent of your location data exposure on the internet.

NordVPN has introduced My Location, a complimentary tool that displays your actual physical location alongside your IP-based virtual location, assisting you in understanding what information websites have regarding your whereabouts.

NordVPN has introduced a new complimentary tool that reveals the extent of your location data exposure on the internet.

NordVPN has introduced My Location, a complimentary tool that displays your actual physical location alongside your IP-based virtual location, assisting you in understanding what information websites have regarding your whereabouts.

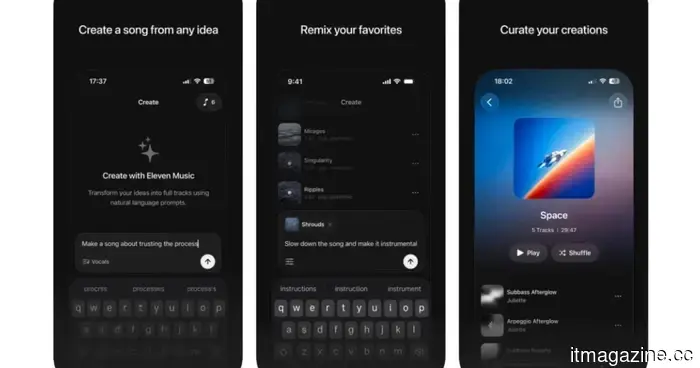

The ElevenLabs AI music generator transforms your concepts into three-minute tracks.

Following closely behind Google's music AI release, ElevenLabs has introduced ElevenMusic, an iOS app that transforms text into songs, demonstrating the company's strong intention to expand significantly beyond voice cloning.

The ElevenLabs AI music generator transforms your concepts into three-minute tracks.

Following closely behind Google's music AI release, ElevenLabs has introduced ElevenMusic, an iOS app that transforms text into songs, demonstrating the company's strong intention to expand significantly beyond voice cloning.

The Emergence of AI in Penetration Testing: Analyzing the Future of Cybersecurity

Artificial intelligence has transitioned from being merely a laboratory experiment to increasingly integrating into everyday software. It is subtly assisting developers in writing code, supporting analysts with research, and driving tools within banks, hospitals, and technology firms. In recent years, large language models (LLMs) have evolved from a point of interest to essential components of numerous digital products. However, as companies have hurried to create [...]