AI analytics agents require boundaries instead of an increase in model size.

Imagine a Vice President of Finance at a large retail company. She poses a straightforward question to the company's new AI analytics system: “What was our revenue for the last quarter?” The response arrives in mere seconds.

Confident.

Clear.

Incorrect.

This exact situation occurs more often than many organizations would like to acknowledge. AtScale, a company that specializes in governed analytics environments and semantic consistency, has found that simply increasing a model's parameter count cannot solve the AI governance and context challenges that enterprises face.

When AI systems process inconsistent or ungoverned data, adding complexity to the model exacerbates the issue rather than resolving it. Companies across sectors have rapidly built agentic AI systems that analyze data, produce insights, and initiate automated workflows. Over the same period, AI models have grown through expanded parameters, greater computing power, and added features. The underlying belief has been that a sufficiently large model will eventually yield reliable results.

However, there are signs that this belief might be flawed. Recent research by TDWI revealed that nearly half of the participants view their AI governance initiatives as either underdeveloped or very underdeveloped. This may have more to do with data lineage and the business definitions that underpin these models than with the capabilities of the models themselves.

Why larger models fail to address governance issues

The AI industry often operates under an unexamined premise regarding what enhances performance: that as we create more sophisticated models, they will implicitly correct their performance flaws. In the realm of enterprise analytics, this assumption can quickly unravel.

While increased scale might enhance the reasoning capacity of a model, it does not enforce adherence to the agreed-upon definition of concepts like gross margin. It does not eliminate metric inconsistencies that have persisted across separate dashboards for years. Moreover, it does not inherently provide traceable data lineage.

Governance issues do not resolve merely by scaling. Business rules embedded within individual tools, inconsistent definitions among teams, and outputs lacking an audit trail are structural challenges. A larger model does not rectify these structural flaws; it simply generates unreliable answers more adeptly.

At AtScale, we observe a consistent theme among our clients: when organizations carry inconsistent data definitions into their AI systems, issues tend to escalate from there. These problems typically spread more rapidly and with less transparency than they did in earlier layers of the analytics stack.

Performance and accountability are distinct responsibilities. A model processes information, while a governance layer specifies what the model analyzes, limits its application of business logic, and ensures outputs can be traced back to a record source. One cannot replace the other.

The real danger: Unchecked agents in enterprise environments

The main concern with AI agents rarely lies in the model itself. Instead, it pertains to the data the model utilizes and the visibility of its actions.

Without a unified context, AI agents may interpret the same data differently across systems. In large enterprises, even slight differences in definitions can lead to divergent outcomes. Structural risks generally arise from four primary factors:

- Ambiguous data definitions: Agents retrieve data from sources where the same metric means different things to different teams.

- Misaligned metrics: When departmental metrics do not align, two agents may give two different answers, with no clear way to tell which is accurate.

- Unclear reasoning: Outputs arrive without a clear lineage showing how a decision was made.

- Audit gaps: Outputs cannot be traced back to a governed record, which hinders the ability to identify mistakes, assign accountability, or make corrections.

These issues are not indicators of AI failure; they highlight that the surrounding infrastructure for AI has not kept pace.
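To make the "two agents, two answers" risk concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than anything taken from AtScale's product: the `Order` fields, the sample figures, and the two team-specific revenue functions are all hypothetical. It simply shows how two reasonable-looking definitions of revenue diverge on the same data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    gross: float     # amount charged at checkout (hypothetical field)
    refunded: float  # amount later refunded (hypothetical field)
    tax: float       # sales tax included in gross (hypothetical field)


# Made-up sample data, used only for illustration.
ORDERS = [
    Order(gross=120.0, refunded=0.0, tax=20.0),
    Order(gross=60.0, refunded=0.0, tax=10.0),
    Order(gross=240.0, refunded=40.0, tax=40.0),
]


def revenue_sales_view(orders):
    """Hypothetical sales-team definition: everything charged."""
    return sum(o.gross for o in orders)


def revenue_finance_view(orders):
    """Hypothetical finance-team definition: net of refunds and tax."""
    return sum(o.gross - o.refunded - o.tax for o in orders)


sales_answer = revenue_sales_view(ORDERS)
finance_answer = revenue_finance_view(ORDERS)

# Same data, same question, two different numbers.
print(f"sales view:   {sales_answer}")    # 420.0
print(f"finance view: {finance_answer}")  # 310.0
```

Neither function is buggy. The disagreement comes entirely from the definition, which is why a more capable model cannot resolve it on its own.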

What guardrails really mean in AI analytics

Guardrails are often perceived as constraints. However, they can also create the very conditions that enable AI agents to function more reliably.

Guardrails help ensure that AI-generated outputs align with established business logic. They provide a structure within which autonomous agents can operate effectively, so that reliability keeps pace as autonomy increases. In analytics, guardrails typically take several specific forms:

- Shared data definitions: A unified definition for terms like revenue, churn, or margin that is consistent across all systems.

- Business logic constraints: Rules dictating how calculations should be executed, regardless of the tools or agents involved.

- Lineage visibility: The ability to trace the origin of any output.

- Access controls: Defined permissions that determine what data an agent can access.

- Standardization of metrics: Uniform definitions applicable across various departments and platforms.

The goal is not to hinder AI performance but to provide a foundational base upon which it can operate.
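The guardrails listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any real product: the `Metric` class, the `REGISTRY` contents, the role names, and the `resolve` function are all hypothetical. The sketch combines a shared definition, a business logic constraint, an access control check, and a lineage record in one lookup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    formula: str              # the single agreed-upon calculation
    source: str               # governed table the metric is computed from
    allowed_roles: frozenset  # roles permitted to query the metric


# Hypothetical registry: one definition per metric, shared by every tool and agent.
REGISTRY = {
    "net_revenue": Metric(
        name="net_revenue",
        formula="SUM(gross_amount - refunds - tax)",
        source="finance.orders",
        allowed_roles=frozenset({"finance_agent", "exec_agent"}),
    ),
}


def resolve(metric_name: str, role: str) -> dict:
    """Return the governed definition plus lineage, or refuse the request."""
    metric = REGISTRY.get(metric_name)
    if metric is None:
        # Business logic constraint: agents may not improvise undefined metrics.
        raise KeyError(f"'{metric_name}' is not a governed metric")
    if role not in metric.allowed_roles:
        # Access control: the role must be explicitly permitted.
        raise PermissionError(f"role '{role}' may not query '{metric_name}'")
    return {
        "metric": metric.name,
        "formula": metric.formula,
        # Lineage: record where the number comes from and who asked for it.
        "lineage": {"source": metric.source, "requested_by": role},
    }


result = resolve("net_revenue", "finance_agent")
print(result["formula"], result["lineage"])
```

In this sketch an agent that asks for an undefined metric, or asks without permission, gets an explicit error instead of a plausible-looking number.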

The semantic layer as a framework for constraints

The semantic layer exists between the data and the applications or AI agents utilizing it, defining business concepts, enforcing logical processes, and offering a shared terminology for all applications and AI agents.
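As a rough illustration of that positioning, the Python sketch below has an agent request a metric by its business name while a small translation step produces the SQL. The `SEMANTIC_MODEL` dictionary, the `compile_query` helper, and the `orders` table are hypothetical names chosen for this example. SQLite stands in for a real warehouse only so the sketch can run on its own.

```python
import sqlite3

# Hypothetical semantic model: business names mapped to governed SQL expressions.
SEMANTIC_MODEL = {
    "table": "orders",
    "metrics": {"net_revenue": "SUM(gross - refunded)"},
    "dimensions": {"quarter": "quarter"},
}


def compile_query(metric: str, dimension: str) -> str:
    """Translate a business-level request into SQL using only governed names."""
    expr = SEMANTIC_MODEL["metrics"][metric]          # KeyError if not governed
    column = SEMANTIC_MODEL["dimensions"][dimension]  # KeyError if not governed
    table = SEMANTIC_MODEL["table"]
    return (
        f"SELECT {column}, {expr} AS {metric} "
        f"FROM {table} GROUP BY {column} ORDER BY {column}"
    )


# In-memory stand-in for the underlying data, with made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (quarter TEXT, gross REAL, refunded REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [("Q1", 100.0, 0.0), ("Q1", 50.0, 10.0), ("Q2", 200.0, 25.0)],
)

# The agent asks for "net_revenue by quarter" and never writes raw SQL itself.
rows = conn.execute(compile_query("net_revenue", "quarter")).fetchall()
print(rows)  # [('Q1', 140.0), ('Q2', 175.0)]
```

Because the calculation lives in the model rather than in the agent's prompt or generated SQL, every agent that asks for `net_revenue` gets the same logic applied.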

A semantic layer does not manipulate or replicate data; instead, it clarifies what the data signifies. By querying a governed semantic layer rather than the base data, AI agents can yield outputs based on business-defined logic instead of mere

Other articles

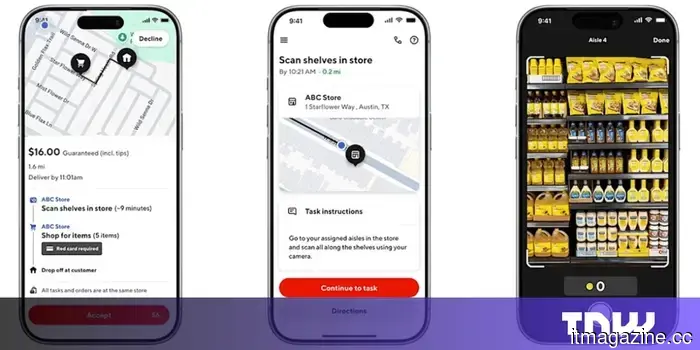

DoorDash introduces Tasks.

DoorDash has introduced Tasks, an independent app that compensates Dashers for capturing videos of household tasks and recording audio to help train AI models.

DoorDash introduces Tasks.

DoorDash has introduced Tasks, an independent app that compensates Dashers for capturing videos of household tasks and recording audio to help train AI models.

Microsoft's MAI-Image-2 ranks among the top three AI image generators.

Microsoft's MAI-Image-2 launches at #3 on Arena.ai's text-to-image leaderboard, trailing Google and OpenAI, and starts to be implemented on Copilot.

Microsoft's MAI-Image-2 ranks among the top three AI image generators.

Microsoft's MAI-Image-2 launches at #3 on Arena.ai's text-to-image leaderboard, trailing Google and OpenAI, and starts to be implemented on Copilot.

Uber and Rivian have finalized a $1.25 billion agreement for robotaxi services.

Uber plans to invest as much as $1.25 billion in Rivian by 2031, aiming for a fleet of as many as 50,000 autonomous R2 robotaxis in 25 cities.

Uber and Rivian have finalized a $1.25 billion agreement for robotaxi services.

Uber plans to invest as much as $1.25 billion in Rivian by 2031, aiming for a fleet of as many as 50,000 autonomous R2 robotaxis in 25 cities.

The German agritech company eternal.ag has secured €8 million in funding.

eternal.ag has secured €8 million to implement autonomous tomato harvesting robots that have been trained in virtual greenhouses prior to their actual deployment in the field.

The German agritech company eternal.ag has secured €8 million in funding.

eternal.ag has secured €8 million to implement autonomous tomato harvesting robots that have been trained in virtual greenhouses prior to their actual deployment in the field.

AI analytics agents require limitations rather than increased model size.

AI analytics agents require safeguards rather than larger models. Discover why governed data, common definitions, and semantic layers are more crucial than the size of the model.

AI analytics agents require limitations rather than increased model size.

AI analytics agents require safeguards rather than larger models. Discover why governed data, common definitions, and semantic layers are more crucial than the size of the model.

Your inbox serves as a revenue stream for others. It doesn’t have to be that way.

Free email could end up being more costly than you realize. We examine why investing in a paid email service might be the most prudent privacy choice you make this year.

Your inbox serves as a revenue stream for others. It doesn’t have to be that way.

Free email could end up being more costly than you realize. We examine why investing in a paid email service might be the most prudent privacy choice you make this year.

AI analytics agents require boundaries instead of an increase in model size.

AI analytics agents require boundaries rather than larger models. Discover why managed data, common definitions, and semantic layers are more important than the size of the model.