Is the standard iPad too widely used to receive Apple Intelligence?

**Apple**

At a time when Apple is heavily promoting Apple Intelligence, it seems odd that the AI suite is missing from the entry-level iPad (2025). The reason may now be clearer.

The obvious explanation is a hardware limitation: the base iPad lacks the power to support AI capabilities. However, Apple would have known this when it specified the hardware, so withholding AI features from this model was likely a conscious decision.

According to 9to5Mac, citing a sales chart from CIRP, sales of the base model iPad have grown year over year. That has prompted the suggestion that Apple may be intentionally making the base iPad less attractive.

The implication is that by holding back sales of the base iPad, Apple keeps it from eating into sales of its higher-end models. That could ultimately mean more sales for the iPad Pro and iPad Air, and greater overall profit.

This assumption is based on the idea that customers opting for the base model do so by choice rather than necessity.

**Apple is providing accessible options**

Another perspective is that Apple is simply maintaining a basic iPad model to ensure more people can afford a version of this popular tablet. As noted by Digital Trends’ Nadeem Sarwar: “The $349 price simply doesn’t justify hardware capable of supporting generative AI.”

Thus, Apple seems to be segmenting its hardware in tandem with its software—offering enhanced features in premium models while still making available essential Apple features for those who prefer or require a more affordable option with the base iPad.

This year, the iPad (2025) has actually received more attention from Apple, most notably an upgrade from 4GB to 6GB of RAM. That improves its value and future-proofing, provided Apple Intelligence is not a priority for the buyer.

---

**Luke has over two decades of experience covering tech, science, and health. Among many other topics, Luke writes about health tech…**

**Network tests show Apple C1 modem in iPhone 16e excels where it counts**

When Apple unveiled the iPhone 16e a few weeks ago, the feature that drew the most attention was its network chip. The C1 is Apple's first in-house modem in an iPhone and marks a move away from the company's complete dependence on Qualcomm. However, it also raised concerns about how competitive the modem would be.

The popular speed testing platform Ookla conducted tests on the Apple-designed modem and found that it outperformed Qualcomm’s solution used in the iPhone 16 across several critical metrics. This analysis, spanning approximately two weeks, included AT&T, Verizon, and T-Mobile networks.

Generally, the iPhone 16e outperformed the iPhone 16 on AT&T and Verizon networks, whereas the opposite was true on T-Mobile. Ookla attributes the T-Mobile results to the carrier’s nationwide 5G standalone (SA) network, with which Apple’s C1 modem has limited compatibility.

**When challenges arise, C1 excels**

**HuggingSnap app provides a prime Apple AI tool, with a convenient twist**

The machine learning platform Hugging Face has rolled out an iOS app designed to interpret the surroundings as seen through your iPhone’s camera. Simply point it at a scene or take a picture, and it will utilize AI to describe it, identify objects, translate languages, or extract text details.

Named HuggingSnap, the app takes a multi-model approach to understanding the scene around you and is now available for free on the App Store. It runs on SmolVLM2, an open AI model that can process text, image, and video inputs.

The app's primary aim is to educate users about the objects and environments around them, including recognizing plants and animals. This concept is similar to the Visual Intelligence feature on iPhones, but HuggingSnap has a notable advantage over its Apple counterpart.

It does not require an internet connection to function.

All that’s needed is an iPhone running iOS 18 to get started. The user interface of HuggingSnap closely resembles that of Visual Intelligence. However, there’s a key distinction.

Apple relies on ChatGPT for Visual Intelligence because Siri cannot yet act as a generative AI tool in the way that ChatGPT or Google’s Gemini can, both of which maintain their own knowledge bases. Instead, Siri hands user requests to ChatGPT, which requires an internet connection because ChatGPT cannot operate offline. HuggingSnap, by contrast, works without one. Because it runs offline, no user data leaves your phone, which is a notable privacy advantage.

**Apple might have to implement significant changes to how your iPhone operates**

Apple is encountering another significant challenge in Europe that could unlock some of the essential features of its ecosystem. The European Commission has recently outlined several broad interoperability requirements that Apple must adhere to in order to comply

Other articles

Amazon has reduced the price of the Kindle Colorsoft to just $225.

The Amazon Kindle Colorsoft Signature Edition is offered at a 20% discount during Amazon's Big Spring Sale, despite having only been launched late last year.

Amazon has reduced the price of the Kindle Colorsoft to just $225.

The Amazon Kindle Colorsoft Signature Edition is offered at a 20% discount during Amazon's Big Spring Sale, despite having only been launched late last year.

Patrick Stewart, Ian McKellen, and other X-Men actors join the cast of Avengers: Doomsday.

The X-Men are becoming part of the world's premier superhero team. Discover which X-Men actors have been added to the cast of Avengers: Doomsday.

Patrick Stewart, Ian McKellen, and other X-Men actors join the cast of Avengers: Doomsday.

The X-Men are becoming part of the world's premier superhero team. Discover which X-Men actors have been added to the cast of Avengers: Doomsday.

5 must-see movies departing from Hulu in March 2025

Only a limited number of movies are departing from Hulu in March, yet locating a quality film among them is more challenging than it ought to be.

5 must-see movies departing from Hulu in March 2025

Only a limited number of movies are departing from Hulu in March, yet locating a quality film among them is more challenging than it ought to be.

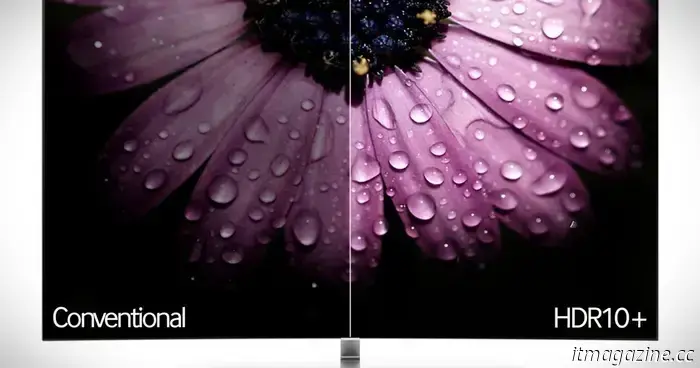

Netflix eliminated my last doubt about purchasing a Samsung OLED TV.

By adopting HDR10+, Netflix has alleviated my concerns about purchasing a Samsung TV. Here's the reason.

Netflix eliminated my last doubt about purchasing a Samsung OLED TV.

By adopting HDR10+, Netflix has alleviated my concerns about purchasing a Samsung TV. Here's the reason.

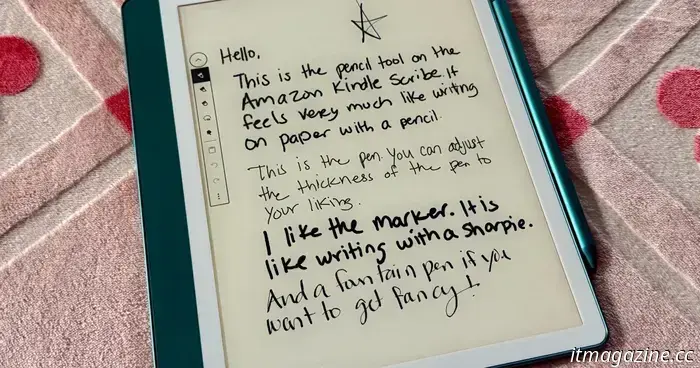

The Kindle Scribe is now available at a rare discount during Amazon's Big Spring Sale.

This eReader could potentially surpass a paper book, even if you're hesitant, because of its excellent note-taking features and affordable price. You can get an Amazon Kindle Scribe for $365.

The Kindle Scribe is now available at a rare discount during Amazon's Big Spring Sale.

This eReader could potentially surpass a paper book, even if you're hesitant, because of its excellent note-taking features and affordable price. You can get an Amazon Kindle Scribe for $365.

Hisense's ULED TVs for 2025 are exceptionally bright and come in three models, each measuring 100 inches.

Hisense's ULED TVs for 2025 will feature screen sizes reaching as large as 100 inches, offering brightness levels of up to 5,000 nits.

Hisense's ULED TVs for 2025 are exceptionally bright and come in three models, each measuring 100 inches.

Hisense's ULED TVs for 2025 will feature screen sizes reaching as large as 100 inches, offering brightness levels of up to 5,000 nits.
