News publishers are restricting access to the Internet Archive’s Wayback Machine.

The New York Times, CNN, USA Today, and The Guardian are among at least 241 news organizations across nine countries that have begun restricting the Internet Archive's crawlers. The director of the Wayback Machine has described the Archive as 'collateral damage' in a conflict that isn't primarily about it.

Since 1996, the Internet Archive has preserved more than one trillion web pages. Courts frequently cite it, journalists use it to document edits made after publication, and historians treat it as a vital primary source. By most standards, it is one of the most important public information infrastructure projects of the internet age.

Now it is being systematically blocked by the very news publishers whose work it has archived, and their concern is legitimate: AI firms are using archived news content to train their models without permission or compensation.

An analysis by AI-detection company Originality AI found that 23 major news outlets block ia_archiverbot, the primary web crawler the Internet Archive uses for the Wayback Machine. In total, at least 241 news sites in nine countries explicitly disallow at least one of the Archive's four crawling bots. USA Today Co., the largest newspaper publisher in the US, accounts for a substantial share of the blocked sites.

The New York Times implemented a so-called 'hard block' around the end of 2025, according to Mark Graham, director of the Wayback Machine.

The publishers' argument is coherent, even if its consequences are troubling. Companies that build AI language models need large amounts of high-quality text, and archived news fits that description precisely: structured, dated, attributed, professionally edited writing accumulated over many years. The Wayback Machine serves vast quantities of this content through its APIs and URL interface, which makes it an attractive source of training data.

A 2023 analysis by The Washington Post found that data from the Internet Archive appeared in major AI training datasets. For publishers already pursuing copyright litigation against OpenAI, Perplexity, and others, the Archive is a gap in their defenses. “The issue is that Times content on the Internet Archive is being used by AI companies in violation of copyright law to directly compete with us,” said Graham James, a spokesperson for the Times. “The Times invests a significant amount of resources in creating original journalism, and this work should not be utilized without our consent.”

The Guardian has taken a more measured approach, limiting rather than fully blocking the Archive's access after finding that the Archive's crawlers were frequent visitors to its site. Robert Hahn, head of business affairs at The Guardian, was particularly concerned about the Archive’s APIs. “Many of these AI businesses are seeking easily accessible, structured databases of content,” he noted. “The Internet Archive’s API would have been a logical target for them to connect their machines to and extract the IP.”

Mark Graham has put it bluntly: “We are collateral damage.” The Archive has also taken steps to counter misuse: it limits bulk downloads, prevents mass downloading of specific sites’ material, and maintains controls that restrict large-scale automated extraction. Graham argues that these safeguards weaken the publishers' case for blocking the Archive's crawlers. In his view, the real risk comes from AI companies trying to extract archived content, which the Archive's controls are designed to limit, not from the Archive itself crawling and storing that content.

The Archive is working with publishers to find workable solutions. The Guardian said it has been “collaborating directly with the Internet Archive” to set these access limits rather than imposing a unilateral hard stop. Still, the Archive's position that it is a neutral preservation institution, not an AI training resource, does not fully address publishers’ concern that third parties can reach its data regardless of the Archive’s intentions.

The consequences of blocking the Archive's crawlers reach well beyond AI companies. Once a news article is no longer archived, it can be altered without accountability. Publishers can and do quietly revise stories after publication, correcting mistakes, softening claims, or removing quotes, and journalists often rely on the Wayback Machine as their primary tool for documenting such changes. Joe Mullin of the Electronic Frontier Foundation stressed the stakes: “The Internet Archive frequently serves as the sole source for observing those changes. There are genuine disputes regarding AI training that need to be settled in court. However, jeopardizing the public record to resolve those issues could prove to be a significant, and potentially irreversible, error.”

Wikipedia links to more than 2.6 million news articles preserved by the Wayback Machine, across 249 languages. Courts have cited archived pages as evidence, and journalists have used them to show how government agencies altered official statements after they were first published. USA Today Co.’s decision to block access has effectively erased hundreds of local newspapers from the historical record at a time when local journalism is already struggling, and every archived [...]

Other articles

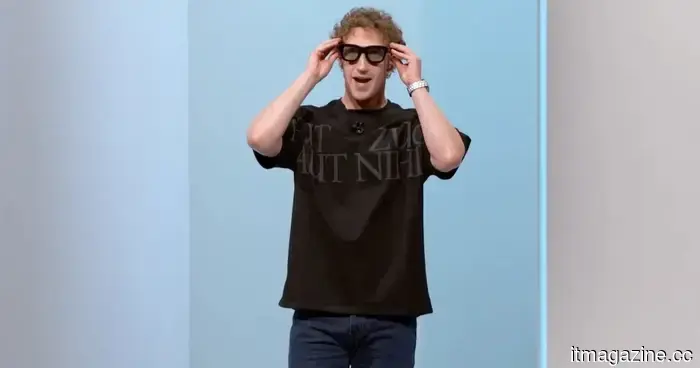

Meta's most unsettling lawsuit in recent times will cause you to reconsider its AI smart glasses.

Meta ended its agreement with the Kenyan AI training company Sama shortly after employees claimed they were exposed to disturbing videos recorded by its smart glasses.

Meta's most unsettling lawsuit in recent times will cause you to reconsider its AI smart glasses.

Meta ended its agreement with the Kenyan AI training company Sama shortly after employees claimed they were exposed to disturbing videos recorded by its smart glasses.

An Oxford study indicates that a friendly AI companion is likely to deceive and reinforce your misconceptions.

Enhancing the human-like qualities of AI might be leading to larger issues than anticipated. A recent study conducted by the Oxford Internet Institute indicated that chatbots intended to be warm and friendly are more prone to misinforming users and reinforcing false beliefs. The research highlighted that AI's reliability decreases as it becomes more agreeable. What [...]

An Oxford study indicates that a friendly AI companion is likely to deceive and reinforce your misconceptions.

Enhancing the human-like qualities of AI might be leading to larger issues than anticipated. A recent study conducted by the Oxford Internet Institute indicated that chatbots intended to be warm and friendly are more prone to misinforming users and reinforcing false beliefs. The research highlighted that AI's reliability decreases as it becomes more agreeable. What [...]

Samsung has issued a concerning alert regarding your technology buying intentions as we approach 2027.

Samsung's record profits from chips come with a caution for consumers. With rising demand for AI impacting memory supply, prices for phones, laptops, TVs, consoles, and other electronic devices may increase as we approach 2027.

Samsung has issued a concerning alert regarding your technology buying intentions as we approach 2027.

Samsung's record profits from chips come with a caution for consumers. With rising demand for AI impacting memory supply, prices for phones, laptops, TVs, consoles, and other electronic devices may increase as we approach 2027.

The new Motorola Edge 70 Pro features an exceptionally bright display and fully embraces a stylish design.

This year, Motorola has enhanced the Edge 70 Pro with a sleeker appearance, combining a slim textured body with an exceptionally bright AMOLED display.

The new Motorola Edge 70 Pro features an exceptionally bright display and fully embraces a stylish design.

This year, Motorola has enhanced the Edge 70 Pro with a sleeker appearance, combining a slim textured body with an exceptionally bright AMOLED display.

An Oxford study indicates that a friendly AI companion is likely to lie and reinforce your misconceptions.

Enhancing AI to appear more human-like might lead to unforeseen issues. A recent study by the Oxford Internet Institute indicated that chatbots crafted to be warm and personable are prone to misleading users and strengthening inaccurate beliefs. The findings showed that as AI becomes more accommodating, its reliability decreases. What [...]

An Oxford study indicates that a friendly AI companion is likely to lie and reinforce your misconceptions.

Enhancing AI to appear more human-like might lead to unforeseen issues. A recent study by the Oxford Internet Institute indicated that chatbots crafted to be warm and personable are prone to misleading users and strengthening inaccurate beliefs. The findings showed that as AI becomes more accommodating, its reliability decreases. What [...]

The Xbox Ally X has introduced its own frame upscaling technology that competes with DLSS, along with several other updates.

Auto SR introduces AI-enhanced upscaling to the ROG Xbox Ally X without requiring any action from developers; it operates at the OS level for both DirectX 11 and 12 games.

The Xbox Ally X has introduced its own frame upscaling technology that competes with DLSS, along with several other updates.

Auto SR introduces AI-enhanced upscaling to the ROG Xbox Ally X without requiring any action from developers; it operates at the OS level for both DirectX 11 and 12 games.

News publishers are restricting access to the Internet Archive’s Wayback Machine.

More than 241 news organizations are restricting access to the Internet Archive’s Wayback Machine in order to stop AI companies from utilizing archived material for training purposes.