China initiates a prolonged campaign to combat the misuse of AI.

The Cyberspace Administration's annual ‘Qinglang’ campaign takes place this year in a markedly different regulatory landscape from last year's, and it coincides with White House accusations that China is running large-scale AI theft operations.

According to Reuters, China has initiated a months-long enforcement campaign aimed at addressing the misuse of artificial intelligence. This effort, led by the Cyberspace Administration of China (CAC) in conjunction with the Ministry of Public Security and other agencies, focuses on AI-related fraud, deepfakes, disinformation, and illegal applications that infringe on privacy and intellectual property rights.

This campaign is the 2026 iteration of the ongoing ‘Qinglang’ (Clear and Bright) special campaign series. Its predecessor, titled ‘Rectification of AI Technology Misuse’, began on April 30, 2025, and ran for three months in two phases. By the end of the first phase in June 2025, authorities had taken down over 3,500 AI-related products, removed more than 960,000 pieces of illegal or harmful content, and shut down or penalized over 3,700 accounts.

This year's campaign is set against a significantly more developed regulatory backdrop and a geopolitically charged context, making its focus and objectives more complex than those of its predecessor.

What areas are targeted by the campaign?

China’s enforcement campaigns against AI misuse have evolved to distinguish several categories of abuse, each of which has grown as AI technology and its criminal use have advanced. Drawing on the established Qinglang enforcement framework and the regulatory measures introduced in 2025 and early 2026, this campaign will likely address several categories at once.

The first and most commercially critical category is AI-enabled fraud and impersonation. There has been a sharp rise in the use of voice-cloning and face-swapping deepfake technology to imitate celebrities, business leaders, and government officials in scams aimed at ordinary citizens.

The CAC’s 2025 campaign specifically addressed the use of AI to impersonate friends and family for illegal acts such as online fraud, and the misuse of AI to create likenesses of deceased individuals without consent. On April 3, 2026, the CAC released draft regulations for digital virtual human services that cover consent requirements and prohibit circumventing biometric authentication systems, with a public comment period ending on May 6.

The second key area involves AI-generated disinformation and the activities of ‘online water armies’. This entails the large-scale deployment of AI to create fake social media profiles, produce and disseminate coordinated content, manipulate engagement metrics, and generate artificial trending topics. The 2025 campaign prioritized this issue during its second phase, focusing on platforms that enable AI-driven account farming and bulk content generation.

The third focus is on non-compliance with required filing and registration procedures. China mandates that large language models providing generative AI services to the public undergo security assessments and complete registration with the CAC before launch. By March 2025, 346 generative AI services had completed the required filings, while many others had not. The first phase of the 2025 campaign named unregistered AI products as a main target for correction. Local regulators acted accordingly: the Shanghai CAC penalized three AI applications that offered services without completing the necessary procedures, and the Zhejiang CAC instructed app stores to remove a face-swapping app that had not passed a security evaluation.

The fourth focus is the management of training data, particularly the use of training datasets that infringe on intellectual property rights, privacy rights, or consent requirements. This enforcement angle is more sensitive in 2026 because of the White House's official accusations of April 23, which allege that Chinese companies are conducting 'industrial-scale' campaigns to extract capabilities from U.S. frontier AI models through jailbreaking techniques and numerous proxy accounts.

China’s domestic enforcement initiative does not directly address the U.S. accusations; it instead concentrates on safeguarding the rights of Chinese individuals and users. However, both regulatory environments are evolving with a clear awareness of each other.

The 2026 campaign is backed by a more established domestic regulatory framework compared to its predecessor. Several key regulations either came into effect or were published in draft form leading up to this enforcement initiative. China’s mandatory standards for labeling AI-generated content (AIGC), which require clear technical labels on all AI-generated text, images, audio, and video, took effect on September 1, 2025. On April 10, 2026, the CAC released the Interim Measures for the Management of Anthropomorphic AI Interactive Services, overseeing chatbots, AI companions, and AI customer service agents that imitate human personality and communication styles, effective from July 15, 2026. Additionally, draft rules for digital virtual human services concerning biometric deepfakes were published on April 3, with a public comment period ending on May 6, 2026. In April 2026, the

Other articles

SPRIND launches application process for €125 million competition.

The Next Frontier AI Challenge from SPRIND is now accepting applications: €125 million in funding is available for ten teams developing groundbreaking AI architectures.

SPRIND launches application process for €125 million competition.

The Next Frontier AI Challenge from SPRIND is now accepting applications: €125 million in funding is available for ten teams developing groundbreaking AI architectures.

China initiates a campaign lasting several months to combat the misuse of AI.

China's CAC has initiated a multi-month enforcement campaign focused on addressing the misuse of AI, specifically targeting deepfakes, fraud, disinformation, and illegal applications.

China initiates a campaign lasting several months to combat the misuse of AI.

China's CAC has initiated a multi-month enforcement campaign focused on addressing the misuse of AI, specifically targeting deepfakes, fraud, disinformation, and illegal applications.

The upcoming Grand Theft Auto won’t be extremely expensive, contrary to expectations, as Take-Two's CEO discusses GTA 6.

Take-Two CEO Strauss Zelnick has virtually eliminated the possibility of a super-premium price for GTA 6, stating that the game's price will seem "quite reasonable."

The upcoming Grand Theft Auto won’t be extremely expensive, contrary to expectations, as Take-Two's CEO discusses GTA 6.

Take-Two CEO Strauss Zelnick has virtually eliminated the possibility of a super-premium price for GTA 6, stating that the game's price will seem "quite reasonable."

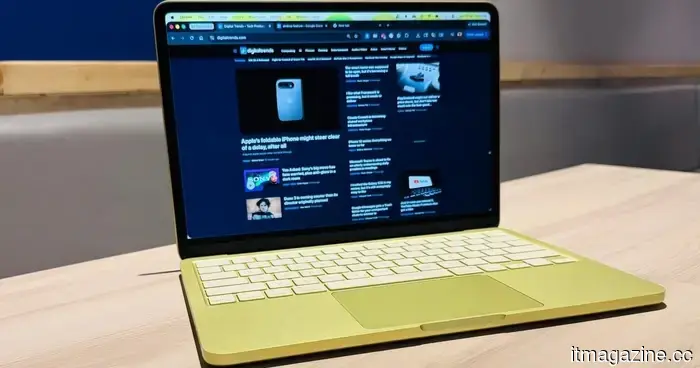

The upcoming MacBook Neo may receive a RAM enhancement. Indeed, in this economy, no less!

The upcoming MacBook Neo from Apple is anticipated to include the A19 Pro chip along with 12GB of RAM — a much-needed enhancement that may at last justify the cost of the entry-level Mac laptop.

The upcoming MacBook Neo may receive a RAM enhancement. Indeed, in this economy, no less!

The upcoming MacBook Neo from Apple is anticipated to include the A19 Pro chip along with 12GB of RAM — a much-needed enhancement that may at last justify the cost of the entry-level Mac laptop.

China initiates a months-long effort to combat the misuse of AI.

China’s CAC has initiated a prolonged enforcement campaign focusing on the misuse of AI, including deepfakes, fraud, disinformation, and unlawful applications.

China initiates a months-long effort to combat the misuse of AI.

China’s CAC has initiated a prolonged enforcement campaign focusing on the misuse of AI, including deepfakes, fraud, disinformation, and unlawful applications.

The upcoming MacBook Neo may receive a RAM enhancement. Incredible, especially considering the current economic situation!

The upcoming MacBook Neo from Apple is said to feature the A19 Pro chip along with 12GB of RAM — a long-awaited enhancement that may ultimately make the entry-level Mac laptop a worthwhile investment.

The upcoming MacBook Neo may receive a RAM enhancement. Incredible, especially considering the current economic situation!

The upcoming MacBook Neo from Apple is said to feature the A19 Pro chip along with 12GB of RAM — a long-awaited enhancement that may ultimately make the entry-level Mac laptop a worthwhile investment.

China initiates a prolonged campaign to combat the misuse of AI.

China's CAC has initiated a several-month enforcement campaign against the misuse of AI, focusing on deepfakes, fraud, disinformation, and illegal applications.