Cyber Backup 18.5 focuses on performance in large infrastructures.

Timur Guseynov, product marketing manager, and Dmitry Antonov, director of the backup systems division, presented Cyber Backup 18.5. They explained why the release is dedicated specifically to performance, which tasks it addresses in enterprise scenarios, and what lies ahead for the product.

The company's release cycle is stable: four releases a year, two of which are full-featured. Version 18.5 is one of them. It concentrates on changes that directly affect the system's ability to fit into strict backup windows. The main theme is scaling as the infrastructure grows. The developers call this the "enterprise direction": when data volumes are measured in petabytes, any overrun of the backup window collides with business workloads.

Multithreading as the basis for acceleration

The most noticeable innovation is multithreading in two key scenarios. First, file backup has been completely redesigned: a single agent can now process up to 24 files in parallel from different folders on both local and network drives. Parallelism is set through a configuration file, but the effect is immediately noticeable: "file dumps" (large file repositories with millions of documents, document management systems, application archives) cease to be a bottleneck.
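
To make the idea concrete, here is a minimal Python sketch of bounded parallel file copying: files from several source folders are copied by a thread pool capped at 24 workers, matching the per-agent limit mentioned above. It illustrates the general technique under our own assumptions and is not Cyber Backup's agent code; the function name `backup_folders` and the `source_<n>` target layout are hypothetical, and the product's actual configuration file is not reproduced.

```python
import shutil
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

# Per-agent parallelism quoted in the presentation; in the product the value
# is set in a configuration file that is not reproduced here.
MAX_PARALLEL_FILES = 24


def backup_folders(folders: list[Path], target_dir: Path,
                   workers: int = MAX_PARALLEL_FILES) -> list[Path]:
    """Copy every file under `folders` into `target_dir` with a bounded thread pool.

    Each source folder gets its own subdirectory (source_0, source_1, ...)
    so files with the same relative path in different folders do not collide.
    """
    jobs: list[tuple[Path, Path]] = []
    for index, folder in enumerate(folders):
        for src in folder.rglob("*"):
            if src.is_file():
                dst = target_dir / f"source_{index}" / src.relative_to(folder)
                jobs.append((src, dst))

    def copy_one(job: tuple[Path, Path]) -> Path:
        src, dst = job
        # exist_ok=True makes concurrent creation of the same directory safe.
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copies file data and metadata such as mtime
        return dst

    # At most `workers` files are being copied at the same time.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(copy_one, jobs))
```

Because copying is dominated by I/O, threads are a reasonable fit even in Python; the pool limits how many files are in flight, so a folder holding millions of small documents is drained steadily rather than all at once.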

Second, multithreaded processing of incremental backups for PostgreSQL has been introduced. Previously, backing up large databases took significant time even when only a few percent of the data had changed. Now binary increments, taken without reading WAL, are processed in multiple threads, which significantly speeds up both copying and subsequent recovery. The ability to archive transaction logs in parallel for Point-in-Time Recovery remains fully intact. All operations are available in the graphical interface, with no CLI gymnastics.
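
The following Python sketch illustrates the general idea of binary increments handled by several threads: each data file is split into 8 KiB pages (PostgreSQL's default block size), every page is compared with a digest saved by the previous backup, and only changed pages are kept, with separate files processed by separate workers. This is an illustration under stated assumptions, not the product's algorithm: it compares SHA-256 digests, whereas real PostgreSQL tools may rely on page LSNs or WAL summaries, and the manifest format and function names are hypothetical.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor

BLOCK_SIZE = 8192  # PostgreSQL's default page size (8 KiB)


def block_digests(data: bytes) -> list[str]:
    """Digest of every page, as stored in a (hypothetical) backup manifest."""
    return [hashlib.sha256(data[i:i + BLOCK_SIZE]).hexdigest()
            for i in range(0, len(data), BLOCK_SIZE)]


def changed_blocks(previous: list[str], current: bytes) -> dict[int, bytes]:
    """Return {page_number: page_bytes} for pages that are new or differ from `previous`.

    A file that shrank (truncation) is not handled in this sketch.
    """
    changed: dict[int, bytes] = {}
    for page_no, start in enumerate(range(0, len(current), BLOCK_SIZE)):
        page = current[start:start + BLOCK_SIZE]
        if page_no >= len(previous) or hashlib.sha256(page).hexdigest() != previous[page_no]:
            changed[page_no] = page
    return changed


def incremental_backup(files: dict[str, bytes], manifest: dict[str, list[str]],
                       workers: int = 4) -> dict[str, dict[int, bytes]]:
    """Compute the increment of every data file, one file per worker thread."""
    def one_file(name: str) -> tuple[str, dict[int, bytes]]:
        # Files missing from the manifest are treated as entirely new.
        return name, changed_blocks(manifest.get(name, []), files[name])

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(one_file, files))
```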

These changes are part of a broader philosophy. Performance in Cyber Backup is understood not as a marketing figure, but as the real ability of the system to grow alongside the customer's infrastructure. The smaller the backup window, the lower the risks for business processes.

Management in large environments: from API to batch operations

The second major block of the release concerns ease of administration. Version 18.0 introduced the first set of public API features: managing protection plans and dynamic device groups. Version 18.5 adds a second phase: working directly with backups and processes. Through the API, administrators can view and delete backups and manage tasks for virtual machines, Microsoft Exchange, and SQL Server. Future plans include recovery tasks (full and granular), data source management, and agent discovery and registration. The goal is to gradually move all management to a modern programmatic interface that is convenient for automation and for integration into heterogeneous infrastructures.
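
As an example of the automation such an API enables, here is a minimal Python sketch of a retention script that decides which backups to delete from a list the API returns. The `Backup` record and its fields are hypothetical, since the actual API schema is not described here. The sketch always keeps the newest backup of each plan; the returned IDs would then be passed to whatever delete call the API provides.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Backup:
    """Hypothetical shape of a backup record returned by the API."""
    backup_id: str
    plan: str
    created: datetime


def select_expired(backups: list[Backup], keep_days: int, now: datetime) -> list[str]:
    """IDs of backups older than `keep_days`, never including the newest backup of a plan."""
    newest: dict[str, Backup] = {}
    for b in backups:
        if b.plan not in newest or b.created > newest[b.plan].created:
            newest[b.plan] = b
    cutoff = now - timedelta(days=keep_days)
    return sorted(b.backup_id for b in backups
                  if b.created < cutoff and newest[b.plan].backup_id != b.backup_id)
```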

The web console has also received long-awaited improvements. The most practical one is batch management of protection plans. Previously, in large environments with hundreds of protection plans, administrators had to process them one by one. Now it is enough to open the list, select the required plans, and run a mass operation: start, stop, enable, disable, delete, or export. For companies with hundreds of servers, this is not a minor detail but a real reduction in operational load.
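
The same batch pattern is easy to reproduce in a script. Below is a minimal Python sketch that applies one operation to many selected plans and records a per-plan result instead of stopping at the first failure. The `apply` callback stands in for a hypothetical console or API call; only the list of operations comes from the text above.

```python
from typing import Callable

# Mass operations listed for the web console.
OPERATIONS = {"start", "stop", "enable", "disable", "delete", "export"}


def run_batch(plan_ids: list[str], operation: str,
              apply: Callable[[str, str], None]) -> dict[str, str]:
    """Apply `operation` to every plan and collect a per-plan result.

    `apply(plan_id, operation)` is a hypothetical callback wrapping the real
    console or API call; it is expected to raise an exception on failure.
    """
    if operation not in OPERATIONS:
        raise ValueError(f"unsupported operation: {operation}")
    results: dict[str, str] = {}
    for plan_id in plan_ids:
        try:
            apply(plan_id, operation)
            results[plan_id] = "ok"
        except Exception as exc:  # keep going: one failing plan must not block the rest
            results[plan_id] = f"failed: {exc}"
    return results
```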

Smaller conveniences have also been added, such as a "Comment" field for PostgreSQL instances and Patroni clusters. When the device list contains dozens of identical IP addresses or host names, a clear label greatly simplifies navigation. Filtering and sorting have been improved, the plan tables have new columns, and information about tapes and drives is displayed in more detail.

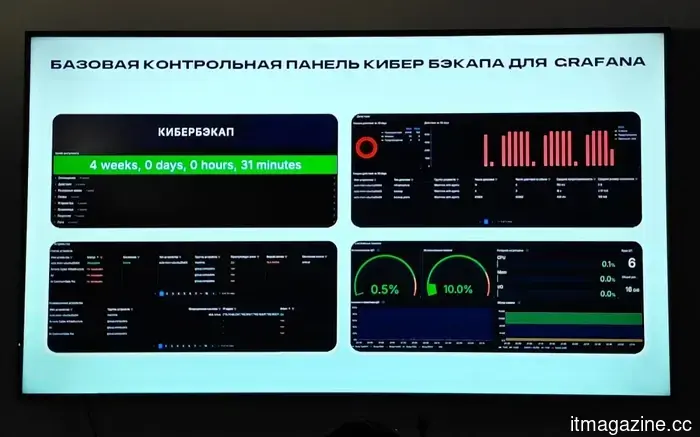

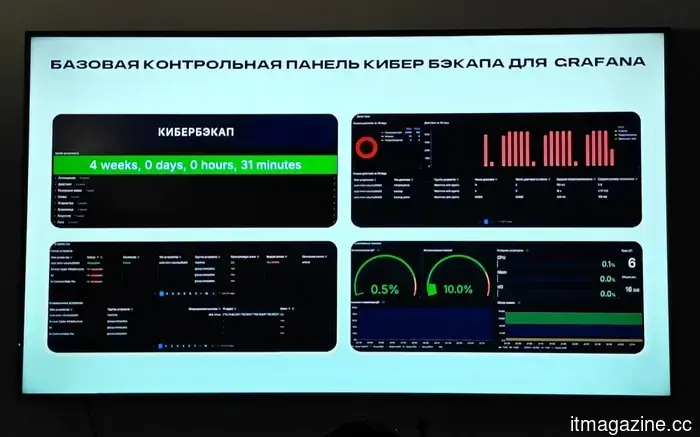

Monitoring and integrations: a step towards centralized control

Administrators have long asked for proper integration with centralized IT monitoring systems. Previously, this required workarounds with scripts and reverse proxies, which were inconvenient and unreliable. Version 18.5 introduces full metric export through OpenTelemetry Collector (a new component in the package) and a Prometheus exporter. A basic Grafana dashboard ships out of the box and gives an overall picture: job status, storage utilization, number of failures, and performance. This puts the backup system on the same panel as servers, virtualization, databases, and hardware.
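
For readers unfamiliar with what a Prometheus exporter actually serves, here is a minimal Python sketch that renders backup metrics in the Prometheus text exposition format. The metric names `backup_job_status` and `backup_storage_used_bytes` and their labels are hypothetical, not the names Cyber Backup exports; the format itself (HELP and TYPE lines, escaped label values, trailing newline) follows Prometheus conventions.

```python
def _escape(value: str) -> str:
    """Escape a label value as required by the Prometheus text format."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_metrics(job_status: dict[str, str], storage_used: dict[str, int]) -> str:
    """Render hypothetical backup metrics in Prometheus text exposition format."""
    lines = [
        "# HELP backup_job_status Result of the last job per plan (1 = success, 0 = failure).",
        "# TYPE backup_job_status gauge",
    ]
    for plan, status in sorted(job_status.items()):
        value = 1 if status == "success" else 0
        lines.append(f'backup_job_status{{plan="{_escape(plan)}"}} {value}')
    lines += [
        "# HELP backup_storage_used_bytes Bytes used in each storage location.",
        "# TYPE backup_storage_used_bytes gauge",
    ]
    for storage, used in sorted(storage_used.items()):
        lines.append(f'backup_storage_used_bytes{{storage="{_escape(storage)}"}} {used}')
    # The exposition format expects the last line to end with a line feed.
    return "\n".join(lines) + "\n"
```

With metrics shaped like this, a Grafana panel could count failed plans with a PromQL query such as `count(backup_job_status == 0)`.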

For large and even medium-sized companies, this approach makes monitoring less resource-intensive and speeds up incident response. Metrics can be aggregated, visualized, and used to manage the cost of data protection.

Security and recovery: practical improvements

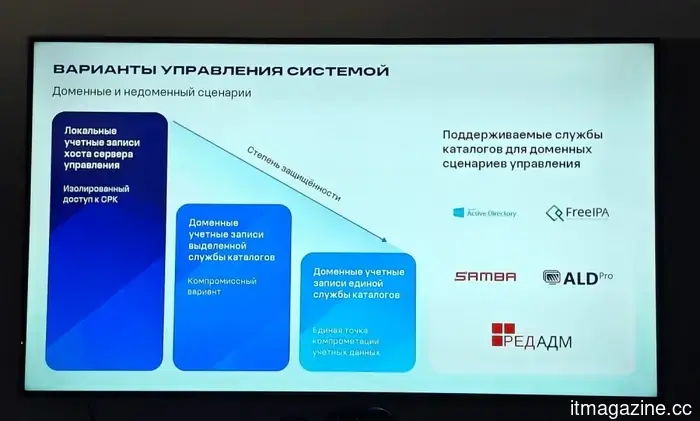

Developers did not overlook the topic of protecting the backups themselves. The release has strengthened support for LDAPS using system certificates — an important point for domain environments with heightened requirements for credential protection. Work continues on multi-factor authentication and integration with PAM solutions.
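
On the client side, "LDAPS with system certificates" presumably means that the TLS connection to the directory server is validated against the operating system's trusted CA certificates rather than a separately supplied bundle. A minimal Python sketch of such a check follows; it uses only the standard `ssl` and `socket` modules, assumes the standard LDAPS port 636, and illustrates the concept rather than how Cyber Backup implements it.

```python
import socket
import ssl

LDAPS_PORT = 636  # standard port for LDAP over TLS


def make_ldaps_context() -> ssl.SSLContext:
    """TLS context that trusts the platform's default CA certificates."""
    # create_default_context() calls load_default_certs(): on Windows it reads
    # the system certificate stores, elsewhere OpenSSL's default CA paths.
    # It also sets CERT_REQUIRED and enables host name checking.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse legacy protocol versions
    return ctx


def check_ldaps(host: str, port: int = LDAPS_PORT, timeout: float = 5.0) -> dict:
    """Open a verified TLS connection to a directory server and return its certificate."""
    ctx = make_ldaps_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        # server_hostname enables SNI and host name verification.
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()
```

For example, `check_ldaps("dc01.example.local")` (a hypothetical host name) returns the server certificate details if the chain and host name verify, and raises `ssl.SSLCertVerificationError` otherwise.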

For those working with Microsoft Exchange, the long-awaited granularity has arrived: it is now possible to restore archived mailboxes down to the level of individual messages. This significantly reduces network load and recovery time compared to a full database restore.

Boot media has also been improved: it now fully supports Backup Gateway from Cyber Storage. This matters for isolated environments, P2V/V2P migration scenarios, and mass deployment of identical systems.

Cyber Media Server: a new stage, but cautiously

Considerable attention went to replacing the old storage node with Cyber Media Server, a component built on Cyber Infrastructure technologies. The MVP, version 1.0, is almost ready for limited testing with selected customers. It runs on Red OS 7.3 (included), supports backup immutability (WORM), and allows storage to be switched between management servers. Immutability is currently managed through the CLI of the BGW (Backup Gateway), but this is a deliberate choice for the first stage.
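
To illustrate what WORM immutability guarantees, here is a minimal in-memory Python sketch: a backup can be written once, cannot be overwritten, cannot be deleted before its retention date, and its retention can only be extended. The `WormStore` class and its methods are hypothetical and say nothing about how Cyber Media Server or the BGW CLI actually enforce immutability.

```python
from dataclasses import dataclass, replace
from datetime import datetime


class ImmutabilityError(PermissionError):
    """Raised when an operation would violate write-once semantics."""


@dataclass
class StoredBackup:
    backup_id: str
    retain_until: datetime  # deletion is refused before this moment


class WormStore:
    """Hypothetical in-memory store enforcing WORM (write once, read many) rules."""

    def __init__(self) -> None:
        self._items: dict[str, StoredBackup] = {}

    def put(self, backup: StoredBackup) -> None:
        if backup.backup_id in self._items:
            raise ImmutabilityError(f"{backup.backup_id} already written")
        # Store a copy so the caller cannot shorten retention by mutating its object.
        self._items[backup.backup_id] = replace(backup)

    def extend(self, backup_id: str, new_until: datetime) -> None:
        item = self._items[backup_id]
        if new_until < item.retain_until:
            raise ImmutabilityError("retention can only be extended")
        item.retain_until = new_until

    def delete(self, backup_id: str, now: datetime) -> None:
        item = self._items[backup_id]
        if now < item.retain_until:
            raise ImmutabilityError(f"{backup_id} is locked until {item.retain_until}")
        del self._items[backup_id]

    def __contains__(self, backup_id: str) -> bool:
        return backup_id in self._items
```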

The developers openly explained why the release was slightly delayed: after the initial announcement, they found performance degradation in certain scenarios. The problem has since been resolved, but they decided not to rush. A broad release is expected within the coming months, once feedback from the first users has been collected.

Roadmap and priorities for 2025–2026

The presentation concluded with a discussion of plans. Version 18.6 (second quarter) will bring stabilization and refinements, including FSTEC certification. The main functional release, 19.0, is scheduled for the third or fourth quarter. Its priorities include further development of multithreading, support for more workloads per management server, full integration with Tatlin.Backup via the T-Boost protocol, further API development, a new monitoring and reporting system, and improvements in auditing and licensing.

Planned integrations include RuPost, extended PostgreSQL support (including Jatoba clusters), Basis Dynamix, DAG for Exchange, and improvements for Mailion. Support for VM manager has temporarily been given "gray" status, as refinements are required on the vendor's side.

The team also described ongoing architectural changes. Many current limitations (including backup of the management server itself, the password manager, and full clustering) stem from historical design decisions. The team is deliberately investing time in reworking the foundation to address these pain points more fundamentally in future releases.

Training and feedback

The session ended with a lively Q&A block. Topics included the economic situation and its impact on purchasing (the company notes caution among customers, but no sharp decline), requests for a password manager and for backup of the management server (planned, but with lower priority than performance), integrations with SOC and hardware, Dirty Bitmap support in Russian hypervisors, data immutability, and the transition to domestic storage systems under sanctions.

The answers were candid: priorities are set by the real pain points of large customers. Integrations with security solutions exist (Syslog, compatibility), but the company deliberately positions the product as infrastructure software rather than a security tool. Many requests are tracked statistically: the more similar inquiries arrive, the higher the priority.

Cyber Backup 18.5 turned out to be a very "mature" release. Without loud marketing statements, but with a set of changes that genuinely ease the lives of administrators of large and heavily loaded infrastructures. Multithreading, batch management, proper monitoring through Grafana, granular recovery, and movement towards a modern API — all of this indicates that the developers are listening carefully to the market and focused on practical value.