A lawsuit against ChatGPT alleges that it provided guidance to a shooter on the methods and locations for their attack.

Tim Witzdam / Pexels

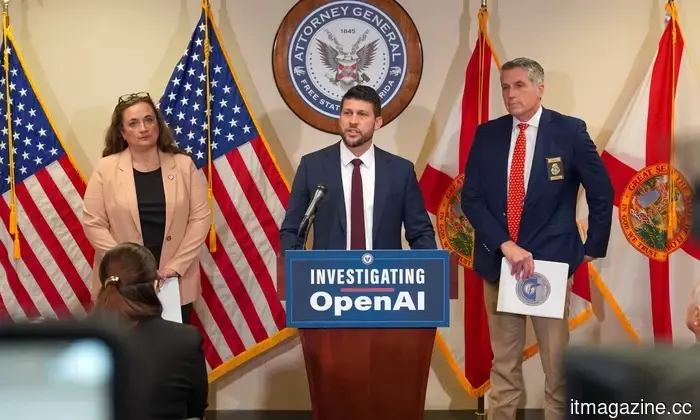

Florida's attorney general has opened a criminal investigation into OpenAI, claiming that ChatGPT was involved in planning last year's mass shooting at Florida State University, which killed two people.

As reported by The Washington Post, Attorney General James Uthmeier announced the investigation at a press conference on Tuesday, asserting that the chatbot gave the alleged shooter tactical guidance. “The chatbot advised the shooter on what type of weapon to use, which ammunition matched which firearm, and whether a gun would be beneficial for close-range situations,” Uthmeier stated.

Office of the Florida Attorney General

He did not shy away from spelling out the implications: “If a person were behind the screen, we would be charging them with murder.” His office has also issued subpoenas to OpenAI, asking the company to explain its policies on user conversations that involve threats of violence.

Is OpenAI accountable for users' actions?

OpenAI has strongly disputed the allegations. Spokesperson Kate Waters said, “The mass shooting at Florida State University last year was a tragic event, but ChatGPT cannot be held responsible for this awful crime.”

The company argues that ChatGPT answered the questions with factual information that is available online and did not promote or endorse any illegal behavior.

Is this merely the beginning?

The investigation reflects rising concerns about AI chatbots. OpenAI is already facing scrutiny over a separate mass shooting in Canada, as well as numerous lawsuits from families claiming that ChatGPT played a role in their loved ones' suicides.

AI experts point out that chatbot safeguards are not foolproof. As Carnegie Mellon professor Ramayya Krishnan put it, “The guardrails are not 100 percent effective.”

Whether OpenAI can be held criminally accountable will be determined by the courts, but it is clear that these AI chatbots can significantly affect an individual's mental health and should be used with caution.

Rachit is a seasoned tech journalist with over seven years of experience in covering the consumer technology landscape.

Other articles

The BMW i7 facelift introduces Gen6 cells from Rimac, offers a range exceeding 350 miles, and replaces Level 3 with a more affordable Symbiotic Drive.

The 2027 BMW i7 is equipped with Rimac-designed Gen6 cylindrical cells, has a capacity of 112.5 kWh, supports 250 kW charging, and offers an EPA range exceeding 350 miles. Production will commence in July at Dingolfing.

The BMW i7 facelift introduces Gen6 cells from Rimac, offers a range exceeding 350 miles, and replaces Level 3 with a more affordable Symbiotic Drive.

The 2027 BMW i7 is equipped with Rimac-designed Gen6 cylindrical cells, has a capacity of 112.5 kWh, supports 250 kW charging, and offers an EPA range exceeding 350 miles. Production will commence in July at Dingolfing.

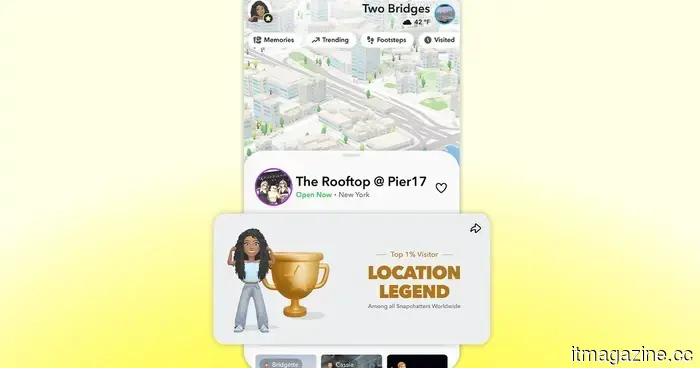

Snapchat Place Loyalty introduces a competitive element to Snap Map.

Snapchat has launched Place Loyalty, a new feature on Snap Map that offers tiered badges for regular visits, making everyday gatherings something to proudly display.

Mozilla has addressed 271 Firefox vulnerabilities identified by Anthropic's Claude Mythos during a single assessment.

Firefox 150 includes 271 bug fixes identified by Claude Mythos Preview. Mozilla states that the defects are limited. The UK AI Security Institute indicates that the model can also operate independently.

Mozilla addresses 271 vulnerabilities in Firefox identified by Anthropic's Claude Mythos during a single evaluation.

Firefox 150 includes 271 bug fixes identified by Claude Mythos Preview. Mozilla states that the issues are limited in number. The UK AI Security Institute mentions that the model is capable of launching autonomous attacks as well.

Google transforms Chrome into an autonomous AI workplace tool, introducing features like Auto Browse, Skills, and enterprise DLP for $6 per month.

Chrome introduces Auto Browse, AI Skills, a Gemini side panel, and an enterprise DLP service priced at $6/month. Google claims that the browser has evolved from being merely a window to becoming a workplace AI platform.

Snapchat Place Loyalty introduces a competitive element to Snap Map.

Snapchat has launched Place Loyalty, a new feature on Snap Map that offers tiered badges for regular visits, making everyday gatherings something to proudly display.

Mozilla has addressed 271 Firefox vulnerabilities identified by Anthropic's Claude Mythos during a single assessment.

Firefox 150 includes 271 bug fixes identified by Claude Mythos Preview. Mozilla states that the defects are limited. The UK AI Security Institute indicates that the model can also operate independently.

Mozilla addresses 271 vulnerabilities in Firefox identified by Anthropic's Claude Mythos during a single evaluation.

Firefox 150 includes 271 bug fixes identified by Claude Mythos Preview. Mozilla states that the issues are limited in number. The UK AI Security Institute mentions that the model is capable of launching autonomous attacks as well.

Google transforms Chrome into an autonomous AI workplace tool, introducing features like Auto Browse, Skills, and enterprise DLP for $6 per month.

Chrome introduces Auto Browse, AI Skills, a Gemini side panel, and an enterprise DLP service priced at $6/month. Google claims that the browser has evolved from being merely a window to becoming a workplace AI platform.

Pichai kicks off Cloud Next 2026 with a $240 billion backlog, 750 million Gemini users, and a strategy to transform Search into an agent manager.

Google Cloud surpassed $70 billion in revenue, showing a 48% growth, with a backlog of $240 billion. Pichai mentioned that Search will evolve into an agent manager. Capital expenditures have doubled to $185 billion.

Pichai kicks off Cloud Next 2026 with a $240 billion backlog, 750 million Gemini users, and a strategy to transform Search into an agent manager.

Google Cloud surpassed $70 billion in revenue, showing a 48% growth, with a backlog of $240 billion. Pichai mentioned that Search will evolve into an agent manager. Capital expenditures have doubled to $185 billion.

A lawsuit against ChatGPT alleges that it provided guidance to a shooter on the methods and locations for their attack.

Florida's attorney general asserts that ChatGPT suggested to the FSU shooter which firearm to select, what ammunition to purchase, and the timing for the attack. OpenAI maintains that the chatbot acted appropriately.