AI-generated images are now being misused to create false evidence for auto insurance fraud.

Insurers are observing an increase in fraudulent crash images and modified claim documents in their submissions, which is prompting a more stringent approach to fraud verification.

AI-generated images of car accidents are becoming a significant issue in insurance fraud: Admiral reported a marked rise in 2025 in incidents involving altered visuals and fabricated documentation. The challenge now extends beyond dubious paperwork. Images of damaged cars can be manipulated to exaggerate losses or bolster duplicate claims.

A BBC report highlighted an example in which an AI-altered license plate appeared on a damaged Land Rover, while a similar image with a different plate turned up in another case. In another instance, an image was modified to make rear-end damage appear worse than it actually was. Admiral reported that its fraud detection team intercepted these claims and rejected them before any payments were issued.

Admiral also indicated that fraud increased by 71% in 2025 compared to the previous year, partly due to easier access to AI tools capable of altering images and generating fictitious documents. This development has a clear consumer impact: the cost of fraud does not fall on the perpetrators alone.

Understanding the mechanism of fake evidence

Rather than relying solely on falsified forms or fabricated narratives, scammers can now provide a convincing image as alleged evidence. The cases described above show how AI was used to alter vehicle photos, either to exaggerate damage or to recycle the same incident for another claim.

This shift changes the responsibilities of claims teams. They must now verify the authenticity of the images in addition to reviewing documents and timelines. Admiral noted that its fraud detection tools are advancing, and the broader insurance industry is collaborating to address this increasingly prevalent issue.

The impact on premiums

Fraud adds costs throughout the insurance system, which insurers say can push premiums up overall. AI-generated image fraud is therefore more than a niche crime; even those with legitimate claims may bear the consequences through higher costs and a more thorough review process.

Some cases involve opportunistic efforts to inflate genuine losses, while others include fake documentation designed to support a fraudulent claim from the outset. AI makes both approaches easier to scale.

Next steps

The immediate reaction will be enhanced detection capabilities, but the implications for consumers are evident. Admiral indicated that fabricated or exaggerated evidence can lead to claim denials, policy cancellations, and, in more severe cases, criminal charges. As AI-manipulated vehicle evidence becomes more widespread, meticulous examination of crash photos is likely to become standard procedure in claims assessments.

While Google has implemented measures to watermark AI-generated images, this practice is not universal across the industry.

Другие статьи

How EverCognitive assists organizations in transforming AI aspirations into tangible business results.

Elizabeta Gjorgievska Joshevski describes how EverCognitive assists companies in navigating AI transformation through strategic planning, preparedness, and quantifiable business results.

How EverCognitive assists organizations in transforming AI aspirations into tangible business results.

Elizabeta Gjorgievska Joshevski describes how EverCognitive assists companies in navigating AI transformation through strategic planning, preparedness, and quantifiable business results.

Trusti examines recommendations centered around human needs in digital platforms.

Trusti presents a community-focused method for recommendations, aiding small businesses and users in establishing trust through collective experiences and online platforms.

Trusti examines recommendations centered around human needs in digital platforms.

Trusti presents a community-focused method for recommendations, aiding small businesses and users in establishing trust through collective experiences and online platforms.

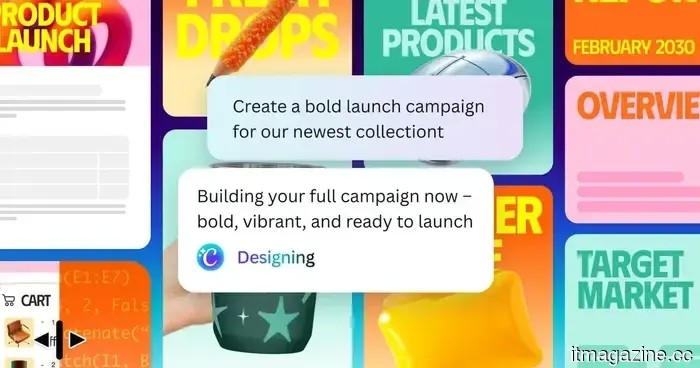

Canva AI 2.0 intends to transform the process of converting your ideas into refined projects.

Canva has introduced its AI 2.0 update, offering a more conversational method for designing and finishing projects. Rather than beginning with templates, users can articulate their requirements to create structured, editable designs.

Canva AI 2.0 intends to transform the process of converting your ideas into refined projects.

Canva has introduced its AI 2.0 update, offering a more conversational method for designing and finishing projects. Rather than beginning with templates, users can articulate their requirements to create structured, editable designs.

Roblox AI assistant acquires autonomous tools for game planning, building, and self-testing.

Roblox enhances its AI assistant by introducing a planning mode, procedural 3D models, and self-correcting agentic loops, along with MCP integration featuring Claude, Cursor, and Codex.

Roblox AI assistant acquires autonomous tools for game planning, building, and self-testing.

Roblox enhances its AI assistant by introducing a planning mode, procedural 3D models, and self-correcting agentic loops, along with MCP integration featuring Claude, Cursor, and Codex.

Trusti investigates human-centered suggestions on digital platforms.

Trusti presents a community-focused method for recommendations, enabling small businesses and users to foster trust through collective experiences and online platforms.

Trusti investigates human-centered suggestions on digital platforms.

Trusti presents a community-focused method for recommendations, enabling small businesses and users to foster trust through collective experiences and online platforms.

AI-generated images are now being misused to create false evidence for automobile insurance fraud.

Edited vehicle photos created by AI are emerging as a new method of insurance fraud, as Admiral has connected an increase in cases to altered accident images, repeated claims, and falsified claim documentation that can escalate expenses throughout the system.

AI-generated images are now being misused to create false evidence for automobile insurance fraud.

Edited vehicle photos created by AI are emerging as a new method of insurance fraud, as Admiral has connected an increase in cases to altered accident images, repeated claims, and falsified claim documentation that can escalate expenses throughout the system.

AI-generated images are now being misused to create false evidence for auto insurance fraud.

AI-edited images of vehicles are emerging as a new tool for insurance fraud, with Admiral noting an increase in cases associated with altered crash photos, duplicate claims, and forged documentation that can elevate expenses throughout the entire system.