Adobe introduces Firefly AI Assistant to coordinate tasks throughout Creative Cloud.

Adobe has unveiled the Firefly AI Assistant, a conversational tool that coordinates tasks across Photoshop, Premiere, Lightroom, Illustrator, Express, and Frame.io through natural-language commands. Formerly known as Project Moonlight, it will enter public beta in the coming weeks, works with third-party models such as Anthropic’s Claude, and retains context between sessions. Adobe has also introduced Firefly Image Model 5, Custom Models, and the node-based Project Graph workflow system. The launch comes as CEO Shantanu Narayen prepares to step down and as the company contends with competition from Figma and from Canva, which has more than 260 million monthly active users.

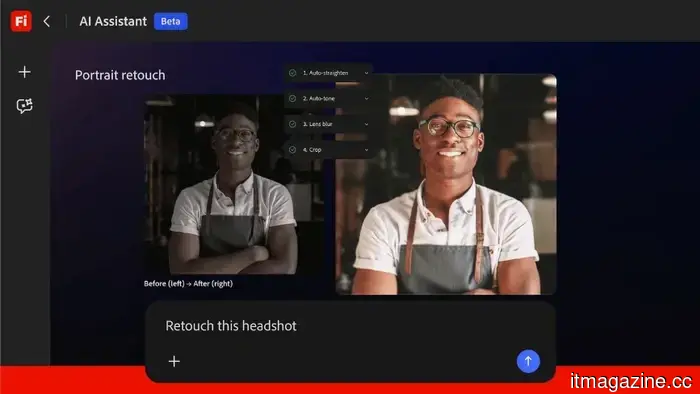

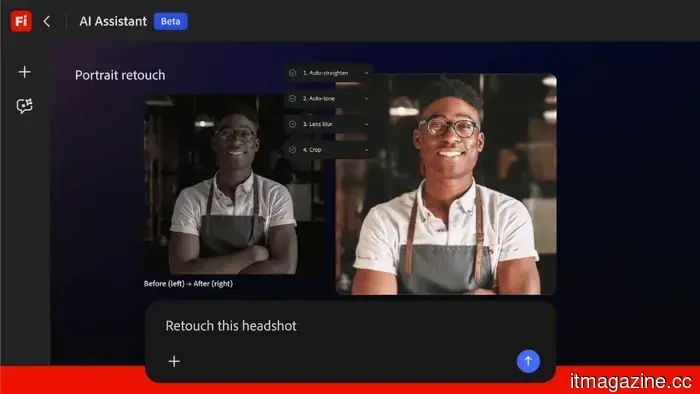

The Firefly AI Assistant is designed to work across Photoshop, Premiere Pro, Lightroom, Illustrator, Express, and the Firefly web app, carrying out creative tasks that users describe in plain language. Instead of switching between applications and navigating menus, designers tell the assistant what they need, such as resizing images for social media, color-grading video footage to match brand standards, or generating several vector logo designs. The system then coordinates the work across the relevant Adobe applications.

The assistant, which enters public beta in the coming weeks, was first shown as “Project Moonlight” at Adobe MAX in October 2025. It retains the context of ongoing projects, remembering parameters, brand guidelines, and past decisions, rather than resetting with each session. It is also integrated with Frame.io, Adobe's platform for collaborative video review, so feedback and approvals flow directly into the assistant's task management.

Adobe confirmed that the assistant will be compatible with third-party AI models, including Anthropic's Claude, alongside its own Firefly models and partners such as Google, OpenAI, Runway, Luma AI, and ElevenLabs. The new image and video editing capabilities announced with the assistant are now available to customers with Firefly plans.

The emergence of the Firefly AI Assistant marks Adobe's entry into the era of agentic software, representing a shift from tools that respond to singular commands to systems that comprehend intent and can autonomously carry out multi-step workflows. This transition signifies a major structural change for a company historically focused on selling standalone applications, each featuring its own interface, learning curve, and subscription options.

The competitive impetus behind this move is reflected in Adobe’s financial data. The company reported revenues of $23.77 billion for fiscal year 2025, with digital media's annual recurring revenue reaching $19.20 billion, indicating a year-on-year growth of 11.5%. It aims for $26 billion in revenue for FY2026. While these figures are substantial, the growth rate has slowed, and Adobe's stock has dropped about 43% as investors question whether the company’s traditional per-application model can thrive in a market where every software provider is integrating AI and competitors are presenting increasingly powerful creative tools at lower prices.

Canva has more than 260 million monthly active users, many of them the small businesses and marketing teams that Adobe targets with its Express product. Figma holds an estimated 80 to 90 percent market share in UI and UX design, a space Adobe attempted to enter with a $20 billion acquisition offer that the two companies abandoned in December 2023 after opposition from UK and EU regulators. Both companies are developing their own AI-powered creative assistants and do not carry the legacy of a product suite created before the advent of large language models.

In practical terms, the Firefly AI Assistant serves as an orchestration layer above Adobe's standalone applications. A user could ask it to process a raw image from Lightroom, apply a specific editing style, create three variations with different aspect ratios in Photoshop, generate a series of corresponding social media graphics in Express, and get the assets ready for review in Frame.io—all within a single conversation. The assistant determines which application is best suited for each task and executes them accordingly.
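To make the idea of an orchestration layer concrete, here is a minimal Python sketch of how a request like the one above could be broken into steps and routed to applications. It is purely illustrative: the task names, the `APP_FOR_TASK` table, and the `plan` function are hypothetical and do not reflect any Adobe API or the assistant's actual design, which Adobe has not detailed.

```python
from dataclasses import dataclass

# Hypothetical routing table (an assumption for illustration only):
# maps a task kind to one of the applications named in the article.
APP_FOR_TASK = {
    "process_raw": "Lightroom",
    "resize_variations": "Photoshop",
    "social_graphics": "Express",
    "prepare_review": "Frame.io",
}


@dataclass
class Step:
    task: str  # what needs to be done
    app: str   # which application is assigned to do it


def plan(tasks: list[str]) -> list[Step]:
    """Assign each requested task to an application, preserving order."""
    steps = []
    for task in tasks:
        if task not in APP_FOR_TASK:
            raise ValueError(f"no application registered for task: {task}")
        steps.append(Step(task=task, app=APP_FOR_TASK[task]))
    return steps


# The single-conversation example from the paragraph above, as a task list.
request = ["process_raw", "resize_variations", "social_graphics", "prepare_review"]
for step in plan(request):
    print(f"{step.app}: {step.task}")
```

A real assistant would presumably infer the task list from natural language and choose applications dynamically; the fixed lookup table here only stands in for that decision.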

This capability is particularly significant because multi-application workflows are typically where creative professionals lose the most time. Video editors in Premiere Pro who need a title card designed in Illustrator, color correction from Lightroom, and audio processing from Adobe’s tools currently have to handle these transfers manually. The goal of the assistant is to eliminate this friction by providing a single interface, making the applications themselves invisible and focusing solely on the results.

Adobe is also introducing its latest image generation model, Firefly Image Model 5, in public beta and expanding Firefly Custom Models, which allow creators to train a model on their own image libraries to capture specific visual styles, character designs, or photographic looks. Custom models are private by default and reusable across projects, catering to enterprise teams and studios that want consistent visual output without exposing their assets to external training sets.

Simultaneously, Adobe is advancing Project Graph, a visual node-based system that enables creators to design, connect, and automate AI-driven workflows across Creative Cloud. While the Firefly AI Assistant employs natural language, Project Graph utilizes a visual editor where users can integrate AI models, Adobe tools, and effects into reusable "capsules," which serve
