Your upcoming earbuds might be able to translate text and recognize objects for you.

Researchers at the University of Washington have created a new prototype system that could transform how individuals engage with artificial intelligence in their daily routines. Named VueBuds, this system incorporates small cameras into typical wireless earbuds, enabling users to inquire about their surroundings in near real-time.

The idea is straightforward yet impactful. A user can look at an item, such as a food package labeled in a foreign language, and ask the AI to translate it. Within about a second, the system responds through the earbuds, making for a smooth, hands-free interaction.

A Unique Take on AI Wearables

In contrast to smart glasses, which have experienced adoption challenges due to privacy issues and design constraints, VueBuds offers a more discreet solution. The system utilizes low-resolution, black-and-white cameras embedded in earbuds to take still images instead of continuous video.

These images are sent via Bluetooth to a connected device, where a small AI model processes them locally. This on-device processing eliminates the need to transmit data to the cloud, addressing one of the major privacy concerns about wearable cameras.

To enhance privacy further, the earbuds feature a visible indicator light during recording and allow users to immediately delete captured images.

Navigating Power and Performance Challenges

One significant challenge the research team encountered was power consumption. Cameras consume considerably more energy than microphones, making the use of high-resolution sensors, like those in smart glasses, impractical.

To address this, the team opted for a camera roughly the size of a grain of rice, capturing low-resolution grayscale images. This method minimizes battery usage while allowing efficient Bluetooth transmission without sacrificing responsiveness.

Placement was another critical factor. By angling the cameras slightly outward, the system achieves a field of view between 98 and 108 degrees. Although there is a small blind spot for objects held very close, the researchers found that it does not affect typical use.

The system also merges images from both earbuds into a single frame, enhancing processing speed. This capability enables VueBuds to respond in about one second, compared to two seconds for processing images separately.
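The article does not say how the two images are combined. As a rough illustration only, the sketch below assumes the simplest possible approach: placing the left and right grayscale frames side by side so that a model sees one image instead of two. The function name `stitch_side_by_side` and the tiny sample frames are hypothetical and are not taken from the VueBuds system.

```python
from typing import List

# A frame is a list of rows; each row is a list of 8-bit grayscale values (0-255).
Frame = List[List[int]]


def stitch_side_by_side(left: Frame, right: Frame) -> Frame:
    """Join two equally tall grayscale frames into one wider frame.

    Assumption: simple horizontal concatenation, with no alignment,
    overlap removal, or blending between the two views.
    """
    if len(left) != len(right):
        raise ValueError("frames must have the same number of rows")
    # Append each right-hand row to the matching left-hand row.
    return [l_row + r_row for l_row, r_row in zip(left, right)]


# Hypothetical 2x3 frames standing in for the left and right earbud captures.
left_frame = [[0, 10, 20], [30, 40, 50]]
right_frame = [[200, 210, 220], [230, 240, 250]]

combined = stitch_side_by_side(left_frame, right_frame)
print(len(combined), len(combined[0]))  # rows and columns of the merged frame
```

With a single merged frame, the model runs once per query rather than once per earbud, which is consistent with the roughly halved response time reported above.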

Comparative Performance with Smart Glasses

In trials, 74 participants evaluated VueBuds against smart glasses such as Meta’s Ray-Ban models. Despite utilizing lower-resolution images and local processing, VueBuds delivered comparable performance overall.

The findings indicated that participants favored VueBuds for translation tasks, whereas smart glasses excelled at counting objects. In separate tests, VueBuds achieved accuracy rates of approximately 83–84% for translation and object identification, and up to 93% for recognizing book titles and authors.

Significance and Future Directions

The research points to a potential change in the design of AI-driven wearables. By integrating visual intelligence into a device that people already use, the system circumvents many obstacles faced by smart glasses.

Nonetheless, there are still limitations. The current system is unable to interpret color, and its capabilities remain in the preliminary stages. The team intends to explore the addition of color sensors and develop specialized AI models for tasks such as translation and accessibility support.

The researchers will present their results at the Association for Computing Machinery Conference on Human Factors in Computing Systems in Barcelona, providing a preview of a future where everyday devices seamlessly evolve into intelligent assistants.

Other articles

Sony unveils the INZONE H6 Air open-back gaming headset along with purple earbuds.

Sony has broadened its INZONE series with the open-back H6 Air headset designed for immersive gaming, rather than competitive esports, along with translucent purple earbuds.

Sony unveils the INZONE H6 Air open-back gaming headset along with purple earbuds.

Sony has broadened its INZONE series with the open-back H6 Air headset designed for immersive gaming, rather than competitive esports, along with translucent purple earbuds.

Sony introduces the INZONE H6 Air open-back gaming headset and purple earbuds.

Sony has broadened its INZONE collection with the H6 Air headset featuring an open-back design, created for immersive gaming rather than competitive esports, in addition to translucent purple earbuds.

Sony introduces the INZONE H6 Air open-back gaming headset and purple earbuds.

Sony has broadened its INZONE collection with the H6 Air headset featuring an open-back design, created for immersive gaming rather than competitive esports, in addition to translucent purple earbuds.

Your upcoming earbuds might be capable of translating text and recognizing objects for you.

Researchers at the University of Washington developed AI earbuds equipped with cameras that analyze the environment while focusing on privacy and processing information directly on the device.

Your upcoming earbuds might be capable of translating text and recognizing objects for you.

Researchers at the University of Washington developed AI earbuds equipped with cameras that analyze the environment while focusing on privacy and processing information directly on the device.

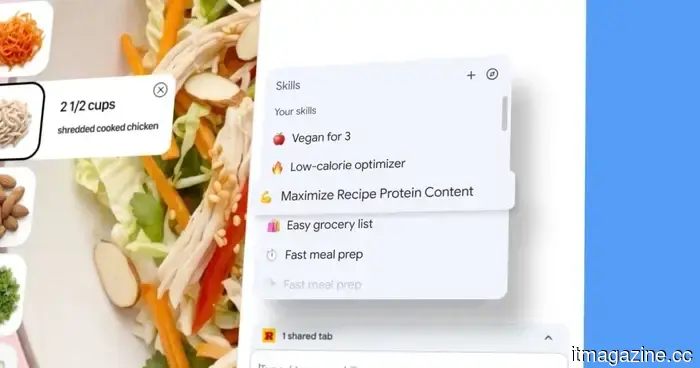

You can now store and reuse Gemini prompts in Chrome using the new Skills feature.

Google has introduced Skills in Chrome, a new functionality that allows users to save Gemini prompts as one-click tools that can be reused and executed across various tabs without the need to retype anything.

You can now store and reuse Gemini prompts in Chrome using the new Skills feature.

Google has introduced Skills in Chrome, a new functionality that allows users to save Gemini prompts as one-click tools that can be reused and executed across various tabs without the need to retype anything.

Sony unveils the INZONE H6 Air open-back gaming headset along with purple earbuds.

Sony has broadened its INZONE collection with the open-back H6 Air headset designed for immersive gaming experiences, rather than competitive esports, along with translucent purple earbuds.

Sony unveils the INZONE H6 Air open-back gaming headset along with purple earbuds.

Sony has broadened its INZONE collection with the open-back H6 Air headset designed for immersive gaming experiences, rather than competitive esports, along with translucent purple earbuds.

How Crypto Exchanges Pave the Path with Scalable and Resilient System Architecture

The digital asset market has expanded rapidly in recent years. Millions of individuals engage in daily trading, with activity surging within minutes during market fluctuations. This growth has compelled every crypto exchange to reevaluate its system architecture. Users now consider infrastructure not only when it fails. [...]

How Crypto Exchanges Pave the Path with Scalable and Resilient System Architecture

The digital asset market has expanded rapidly in recent years. Millions of individuals engage in daily trading, with activity surging within minutes during market fluctuations. This growth has compelled every crypto exchange to reevaluate its system architecture. Users now consider infrastructure not only when it fails. [...]

Your upcoming earbuds might be able to translate text and recognize objects for you.

Researchers at the University of Washington have developed AI earbuds featuring cameras that analyze the environment while emphasizing privacy and utilizing on-device processing.