Studies indicate that AI chatbots form judgments about users, and the outcomes are not always favorable.

Recent research indicates that chatbots are not merely processing your prompts; they are developing psychological profiles of users that contain various biases.

The problems with AI have moved beyond simple mistakes to something more intricate and personal. New findings suggest that chatbots do more than process your requests: they build psychological profiles and assess you in ways that may affect areas such as customer service and loan approvals.

A recent study by the Hebrew University of Jerusalem (shared via Tech Xplore) uncovers the hidden mechanisms through which large language models assess human users. Although we often perceive these bots as impartial tools, the study finds that they attribute traits such as competence, honesty, and kindness to users.

The crux of the problem lies in the way AI models understand certain cues. The study discovered that while humans tend to evaluate holistically, AI analyzes individuals by breaking them down into components, scoring personality traits as distinct values, akin to columns in a spreadsheet. This results in a rigid and formulaic judgment style devoid of human subtlety.

How these models determine trustworthiness also has troubling implications. In scenarios such as approving a loan or choosing a babysitter, the AI did not merely weigh facts; it formed a notion of trust that favored people it perceived as well-meaning, though it applied that notion mechanically.

The study further underscores that these evaluations are not uniformly applied. Researchers identified notable biases in AI decision-making based on demographic factors such as age, religion, and gender, with disparities arising even when other details about an individual remained consistent. In financial contexts, these biases tended to be more systematic and pronounced than those observed in human decision-makers.

Even more disconcerting is the fact that there is no singular AI viewpoint. The study found that various models produced drastically different evaluations of the same individual, essentially employing different ethical benchmarks.

This matters because such judgments could give rise to a new kind of digital anxiety. We are moving into an era where you may need to modify your behavior to elicit favorable responses from AI. Since different models might reward or punish the same characteristic, the AI system a company happens to choose could unwittingly determine your eligibility for credit or employment.

As automation expands, the AI sector needs more than improved programming; it needs transparency about these hidden judgments so that digital assistants do not inadvertently damage your reputation or finances based on their perceptions of you. AI should aim to simplify our lives, not introduce an unsolicited layer of profiling.

Other articles

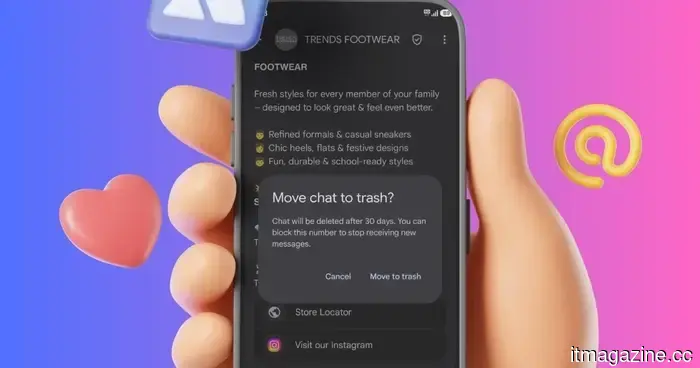

Google Messages is set to add a bit of excitement to your conversations.

Your dull text bubbles are about to undergo a transformation.

Google Messages is set to add a bit of excitement to your conversations.

Your dull text bubbles are about to undergo a transformation.

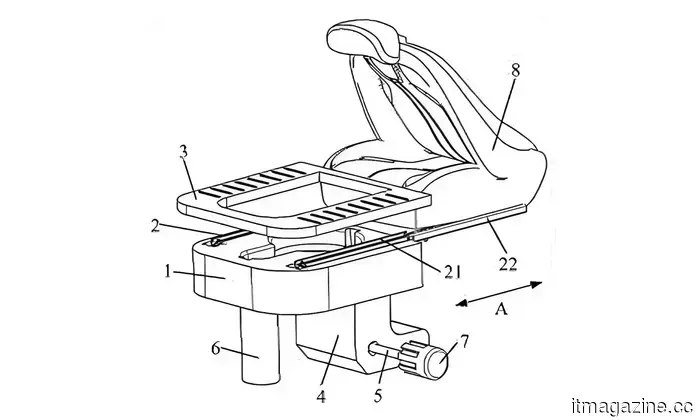

A Chinese car manufacturer has recently submitted a patent for vehicle seats that include a concealed toilet.

Seres has secured a patent for a pull-out toilet concealed beneath the car seat. It's innovative, it's practical, and it could very well be the most surprising automotive feature of the year.

A Chinese car manufacturer has recently submitted a patent for vehicle seats that include a concealed toilet.

Seres has secured a patent for a pull-out toilet concealed beneath the car seat. It's innovative, it's practical, and it could very well be the most surprising automotive feature of the year.

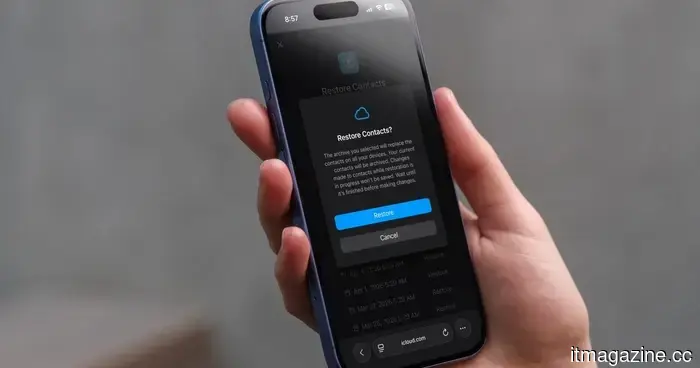

How to recover deleted or lost contacts on your iPhone

Misplacing a contact saved on your iPhone can be a stressful situation, but you can quickly retrieve your lost contacts by using iCloud. We’ll guide you through the process.

How to recover deleted or lost contacts on your iPhone

Misplacing a contact saved on your iPhone can be a stressful situation, but you can quickly retrieve your lost contacts by using iCloud. We’ll guide you through the process.

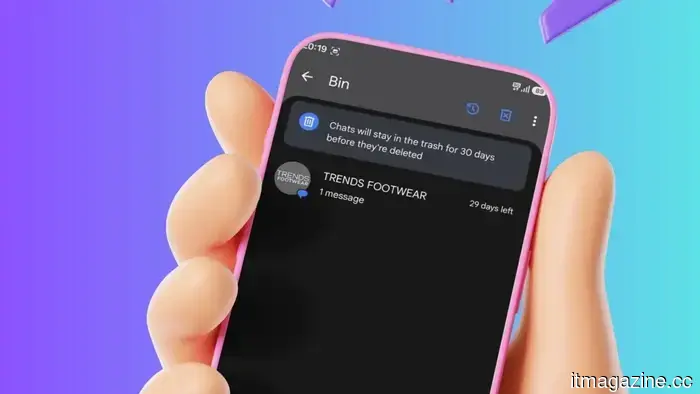

Google Messages is set to add a bit more excitement to your conversations.

Your dull text bubbles are about to receive an upgrade.

Google Messages is set to add a bit more excitement to your conversations.

Your dull text bubbles are about to receive an upgrade.
