Studies indicate that AI chatbots evaluate users, and the outcomes are not always positive.

Recent research indicates that chatbots do not merely process your requests; they also build psychological profiles of you, and those profiles carry numerous underlying biases.

AI's problems have moved beyond mere technical errors to something more complex. Emerging research suggests that AI chatbots do not just respond to your prompts; they also form psychological assessments of you, evaluating you in ways that could affect areas ranging from customer service to lending decisions.

A new study from the Hebrew University of Jerusalem (reported by Tech Xplore) uncovers the hidden logic behind how large language models assess human users. Although we often think of these bots as impartial tools, the study suggests they attribute traits such as competence, integrity, and kindness to users.

At the heart of this issue is the way AI models interpret different signals. The research indicates that while humans tend to make holistic assessments, AI systems break individuals down, scoring personality traits as if they were isolated data points in a spreadsheet. The result is a mechanical style of judgment that lacks the subtlety of human insight.

More alarming still is how these models determine trustworthiness. In scenarios such as lending money or hiring a caregiver, the AI does not rely solely on factual information; it constructs its own version of trust, one that favors people it perceives as well-meaning, though it arrives at that perception mechanically.

The study also underscores that these judgments are not uniformly applied. Researchers identified significant biases in the AI's decisions based on demographic characteristics such as age, religion, and gender, leading to different outcomes even when all other aspects of the individual are the same. In financial contexts, these biases were often more pronounced and systematic than those exhibited by human decision-makers.

Equally troubling, AI systems do not share a single viewpoint. The study found that different models reached drastically different conclusions about the same individual, in effect applying different ethical frameworks.

The implications of these findings are significant. This pattern of judgment could give rise to a new kind of digital anxiety, where individuals might feel compelled to behave in specific ways to receive favorable outcomes from AI systems. Since different models might reward or punish the same characteristics differently, the specific AI chosen by a company could effectively determine your financial standing or employment opportunities.

As society becomes increasingly automated, the AI sector needs more than better programming. It is crucial to recognize these hidden judgments before a digital assistant inadvertently damages your reputation or finances because of how it perceives you. AI's primary objective should be to simplify life, not to impose unsolicited profiling on users.

Pranob is an experienced tech journalist with over eight years of expertise in consumer technology reporting.

The Google app has recently launched on Windows and aims to replicate a feature similar to Spotlight found on Macs.

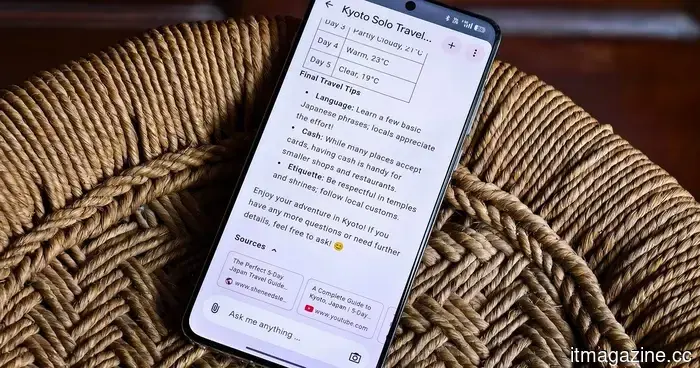

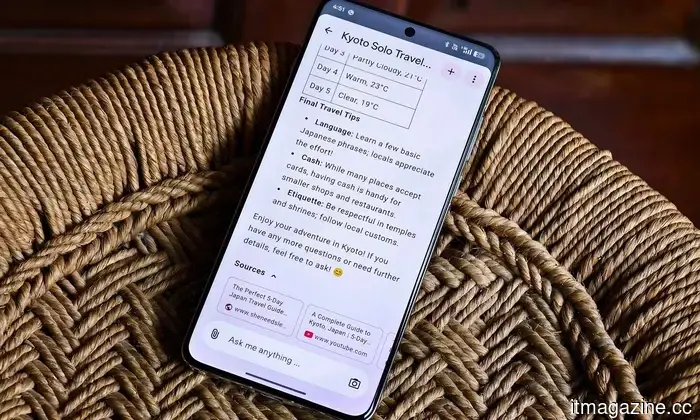

Google has established its presence on Windows with the launch of its desktop app, now available globally in English after its beta phase on Search Labs. This new application serves as a quicker and more efficient alternative to frequently opening a browser tab for Google.

Google has introduced a new feature called Skills in Chrome that allows you to save and reuse your Gemini prompts, transforming them into convenient one-click tools.

If you’ve ever found yourself re-entering the same AI prompt in Gemini across multiple tabs, you understand how repetitive that can be. Google’s Skills feature now enables users to store their most useful Gemini prompts and execute them instantly with one click.

After over a decade of using a Mac, I've discovered a few indispensable third-party utilities that significantly enhance my experience. Some address long-standing macOS issues, others add features that should be standard, and some simply improve my overall Mac use. Here are my top five daily Mac utilities.

Другие статьи

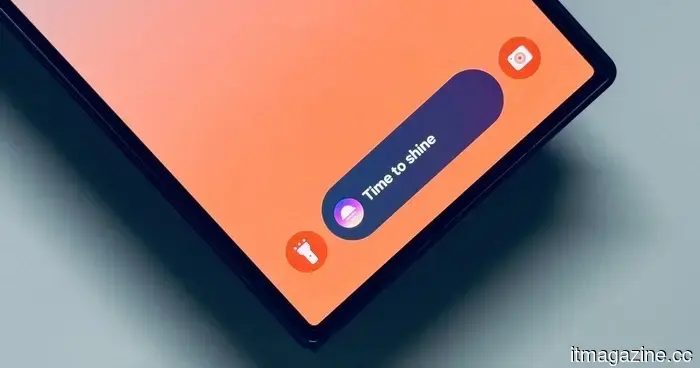

OnePlus smartphones may soon feature an exciting new trick for displaying information on the lock screen.

OnePlus may be developing a new lock screen bar for calls and media, with leaked images indicating that this feature is part of a larger refresh of OxygenOS 16.1, aimed at enhancing the accessibility of phone information at a glance.

OnePlus smartphones may soon feature an exciting new trick for displaying information on the lock screen.

OnePlus may be developing a new lock screen bar for calls and media, with leaked images indicating that this feature is part of a larger refresh of OxygenOS 16.1, aimed at enhancing the accessibility of phone information at a glance.

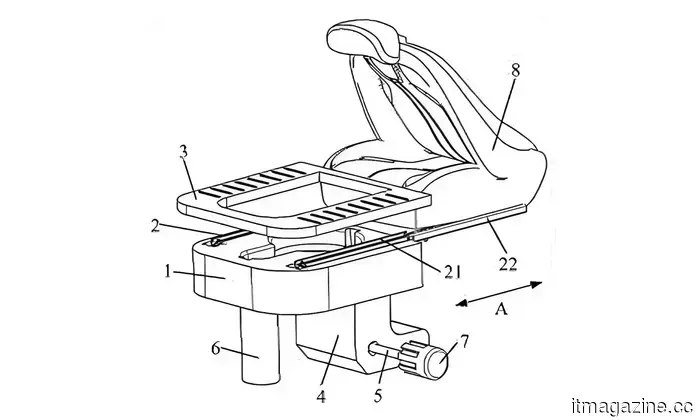

A Chinese car manufacturer has recently submitted a patent for vehicle seats that include a concealed toilet.

Seres has secured a patent for a retractable toilet concealed beneath the car seat. It's genuine, it's innovative, and it could be the most surprising automotive feature of the year.

A Chinese car manufacturer has recently submitted a patent for vehicle seats that include a concealed toilet.

Seres has secured a patent for a retractable toilet concealed beneath the car seat. It's genuine, it's innovative, and it could be the most surprising automotive feature of the year.

This Android smartwatch application is a lifesaver if your daily commute leaves you feeling exhausted and drowsy.

Sleep&Arrive is an innovative Wear OS transit alarm that activates based on your location rather than the time, offering drowsy travelers an intelligent solution to preventing missed stops, silencing alerts, and depending less on fluctuating arrival predictions.

This Android smartwatch application is a lifesaver if your daily commute leaves you feeling exhausted and drowsy.

Sleep&Arrive is an innovative Wear OS transit alarm that activates based on your location rather than the time, offering drowsy travelers an intelligent solution to preventing missed stops, silencing alerts, and depending less on fluctuating arrival predictions.

A Chinese car manufacturer has recently submitted a patent for vehicle seats that include a concealed toilet.

Seres has secured a patent for a pull-out toilet concealed beneath the car seat. It's innovative, it's practical, and it could very well be the most surprising automotive feature of the year.

A Chinese car manufacturer has recently submitted a patent for vehicle seats that include a concealed toilet.

Seres has secured a patent for a pull-out toilet concealed beneath the car seat. It's innovative, it's practical, and it could very well be the most surprising automotive feature of the year.

Samsung S26 Plus Review: Repeatedly Unexciting

The middle child is no longer. For many years, Samsung has adhered to its sacred trinity structure for the Galaxy S series: the standard, the Plus, and the high-end Ultra (previously referred to as “we eliminated the Note but preserved its essence”). During this evolution, the Plus model has subtly lost its unique character. Quick Take […]

Samsung S26 Plus Review: Repeatedly Unexciting

The middle child is no longer. For many years, Samsung has adhered to its sacred trinity structure for the Galaxy S series: the standard, the Plus, and the high-end Ultra (previously referred to as “we eliminated the Note but preserved its essence”). During this evolution, the Plus model has subtly lost its unique character. Quick Take […]
