Meta halts its AI data operations following a breach that jeopardized its training secrets.

In summary: Meta has halted its partnership with Mercor, a $10 billion AI data startup, following a supply chain attack that exposed what could be the AI industry's best-kept secrets: not only personal information but also the training methodologies behind the top large language models. The breach, carried out through a compromised version of the LiteLLM open-source library, has prompted investigations at OpenAI and Anthropic and led to a class action lawsuit affecting over 40,000 individuals.

Last month, when hackers tampered with a widely used open-source library, they didn't merely acquire personal data. Wired reports that they may also have taken the blueprints for building some of the world's most advanced AI models.

Meta has paused its collaboration with San Francisco-based Mercor, which specializes in creating customized training datasets for the leading names in artificial intelligence, after a cyberattack exposed sensitive details about how those companies train their models. The suspension is indefinite and has caused considerable concern in an industry that has invested billions to keep its proprietary methods confidential.

The startup at the center of it all, Mercor, may not be a familiar name, but it plays a crucial role in the AI economy. Founded in 2023 by Brendan Foody, Adarsh Hiremath, and Surya Midha, who were high school friends competing in speech and debate, the enterprise recruits various professionals—contract workers, engineers, lawyers, doctors, bankers, and journalists—to create high-quality proprietary training data for AI labs. Its clients have included Meta, OpenAI, Anthropic, and Google.

Mercor's rapid ascent is remarkable even by Silicon Valley standards. In October 2025, Mercor secured a $350 million Series C funding round, valuing it at $10 billion and making all three founders the world’s youngest self-made billionaires at age 22. By September 2025, the company achieved an annualized revenue of $500 million, rising from $100 million six months earlier. Its business model, which centers on providing fine-tuning and reinforcement learning data that AI labs depend on but seldom discuss publicly, positioned it as one of the most valuable private firms in the AI supply chain. However, this very positioning has now become its Achilles' heel.

The attack on Mercor originated upstream. Analysis from Wiz, Snyk, and Datadog Security Labs found that a threat group known as TeamPCP compromised the CI/CD pipeline of LiteLLM, an open-source Python library with 97 million monthly downloads. Millions of developers use it to integrate applications with AI services, and it is found in approximately 36% of cloud environments. TeamPCP had earlier used a supply chain attack on Trivy, a commonly used security scanner, to obtain the credentials of a LiteLLM maintainer. On March 27, 2026, the group used those credentials to publish two malicious versions of the LiteLLM package, 1.82.7 and 1.82.8, directly to PyPI, the Python package repository. The compromised packages were available for about 40 minutes before being detected and removed.
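For readers who want to check their own environments, the version numbers reported above lend themselves to a simple test. The following is a minimal sketch, not an official detection tool: the function names and the `COMPROMISED_LITELLM_VERSIONS` set are illustrative assumptions, and the set contains only the two versions named in this article.

```python
from importlib import metadata
from typing import Optional

# Assumption: only the two versions named in the article are treated as malicious.
COMPROMISED_LITELLM_VERSIONS = frozenset({"1.82.7", "1.82.8"})


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def is_compromised(version: Optional[str],
                   bad_versions=COMPROMISED_LITELLM_VERSIONS) -> bool:
    """True only when a version string exactly matches a known-bad release."""
    return version is not None and version.strip() in bad_versions


if __name__ == "__main__":
    found = installed_version("litellm")
    if found is None:
        print("litellm is not installed in this environment")
    elif is_compromised(found):
        print(f"litellm {found} matches a version reported as malicious")
    else:
        print(f"litellm {found} is not one of the reported versions")
```

A clean result here only says that the currently installed version is not one of the two reported releases. It says nothing about whether a malicious version was installed and later replaced, so credentials present during the exposure window may still warrant rotation.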

The malicious payload was sophisticated. Version 1.82.7 embedded base64-encoded malware directly in the library’s proxy server code, where it executed on import, while version 1.82.8 used a malicious path configuration (.pth) file that ran automatically at the start of every Python process. Both versions aimed to collect environment variables, API keys, SSH keys, cloud credentials for AWS, Google Cloud, and Azure, Kubernetes configurations, CI/CD secrets, and database credentials, all of which were exfiltrated to a server at models.litellm[.]cloud.
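The path configuration mechanism is a standard Python feature: at startup, the `site` module reads `.pth` files in site-packages and executes any line that begins with `import` followed by a space or tab. The sketch below lists such lines so they can be reviewed by hand. It is an illustrative assumption about how one might audit an environment, not a reconstruction of the actual malware or of any vendor's detection logic, and the function names are hypothetical.

```python
import sysconfig
from pathlib import Path
from typing import Dict, List


def executable_pth_lines(text: str) -> List[str]:
    """Return the lines that Python's site module would execute from a .pth file."""
    return [line for line in text.splitlines()
            if line.startswith(("import ", "import\t"))]


def scan_site_dir(directory) -> Dict[str, List[str]]:
    """Map each .pth file name in a directory to its executable lines, if any."""
    findings: Dict[str, List[str]] = {}
    for pth in sorted(Path(directory).glob("*.pth")):
        try:
            text = pth.read_text(encoding="utf-8", errors="replace")
        except OSError:
            continue  # unreadable file: skip rather than abort the scan
        hits = executable_pth_lines(text)
        if hits:
            findings[pth.name] = hits
    return findings


if __name__ == "__main__":
    # purelib is the site-packages directory of the running interpreter.
    site_dir = sysconfig.get_paths()["purelib"]
    for name, lines in scan_site_dir(site_dir).items():
        print(name)
        for line in lines:
            print("   ", line[:120])
```

Legitimate packages also ship `.pth` files with `import` lines, so a hit is a prompt for manual inspection rather than proof of compromise.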

Mercor confirmed it was "one of thousands of companies" impacted by this attack, which exposed roughly four terabytes of data. Court documents and claims from the involved hacking groups indicate that the stolen data includes 939 gigabytes of source code, a 211-gigabyte user database, and about three terabytes of video interviews and identity verification documents. The leaked information may contain full names and Social Security numbers of over 40,000 current and former Mercor contractors and clients.

The significance of the leak cannot be overstated. The exposure of personal data is concerning, but what has truly alarmed Meta and caught the attention of other AI labs is a different kind of information entirely.

Because Mercor sits inside the data pipelines of multiple AI companies, the breach may have laid bare specifics about data selection criteria, labeling processes, and training strategies that companies have meticulously developed over years and spent billions safeguarding. Datasets can be replicated, but training methodologies are harder to duplicate and represent a substantial competitive advantage. The Wired report points out that the extent of this potential exposure has prompted numerous AI labs to scrutinize what may have been compromised.

OpenAI, which also utilizes Mercor’s services, stated it is investigating the incident but has not halted ongoing projects with the company. Anthropic, which secured $3

Apple has removed this AI app… and now it has unexpectedly returned.

Apple has restored the Anything AI app after initially removing it due to policy violations, in response to backlash and an innovative iMessage workaround created by the developers.

Is Dunesday finished? Would a new release date truly rescue Avengers: Doomsday or Dune: Part Three?

Avengers: Doomsday could potentially receive a new release date, but would that be enough to rescue the film and Dune: Part Three from facing Dunesday this December?

Is Dunesday finished? Would a new release date truly rescue Avengers: Doomsday or Dune: Part Three?

Avengers: Doomsday could potentially receive a new release date, but would that be enough to rescue the film and Dune: Part Three from facing Dunesday this December?

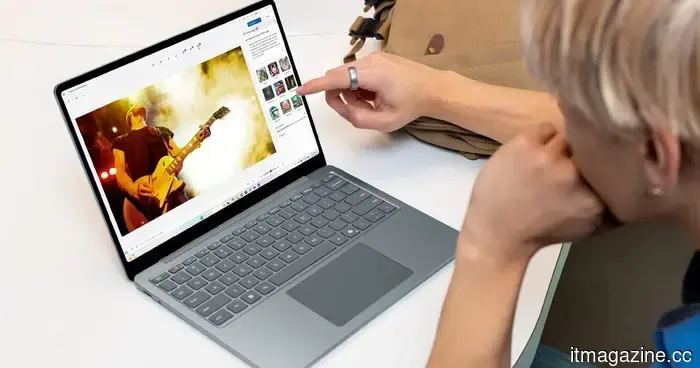
