Apple has bet its AI future on Gemini. Here's how it might transform the iPhone for you.

One of the biggest tech announcements in recent memory, involving two of the industry's largest companies, came in a joint statement of fewer than a hundred words. Apple revealed that Gemini will power the revamped Siri assistant and the foundation behind AI experiences on iPhones and Macs.

“These models will enable future Apple Intelligence features, including a more personalized Siri coming this year,” the company stated. This is a major win for Google, good news for Apple device owners, and an admission from Apple that it has not been able to keep pace with Google, Meta, or OpenAI in the AI race.

The signs have been there for quite some time. At one point, Apple was reportedly testing Anthropic’s Claude and OpenAI’s GPT models for Siri. In the end, the company chose Google, a significant endorsement of Gemini’s capabilities. Let’s look at what is likely in store for millions of iPhone users like you and me.

So, what about privacy?

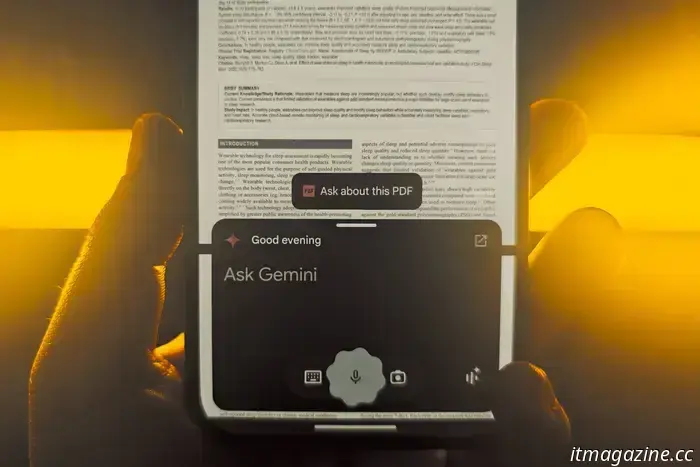

AI comes with a major challenge that is hard to ignore. AI chatbots reach deeper into our lives than social media ever did. They can access our email, calendar, photo gallery, files, and, of course, our daily thoughts. Experts are already grappling with an emerging problem: people forming profound emotional attachments to AI.

But that’s not all. Each time we use an AI chatbot, data is sent to a company’s servers for processing. In many cases, that data is retained for model training or safety purposes, and opting out is not always possible. The proposed solution? On-device AI. Gemini Nano, for instance, is a model that runs on the local silicon of your phone or PC.

With on-device processing, no data leaves your device. However, this approach can be slow and less capable, and media-related or other resource-intensive tasks still require cloud processing. So, are you comfortable with Google powering the AI experiences on your iPhone and Mac, given its track record on privacy? Apple says it already has a plan in place and has been clear that its privacy commitments still apply with Gemini behind its AI features.


“Apple Intelligence will continue to operate on Apple devices and Private Cloud Compute, while adhering to Apple’s top-tier privacy standards,” the company states. In other words, your data and AI requests will be processed either on your device or on Apple’s Private Cloud Compute (PCC) servers, which run on custom Apple silicon and a security-first operating system.

“We believe PCC is the most advanced security architecture ever implemented for cloud AI processing at scale,” claims Apple. With PCC, data is encrypted before it leaves your device. Once a task is complete, both the request and any shared materials are deleted from the servers.

According to Apple, no user data is retained, and even Apple cannot access what reaches those servers. Gemini supplies the intelligence needed to understand your text or voice commands, but the processing itself runs on Apple’s protected servers rather than being sent to Google.

What’s next?

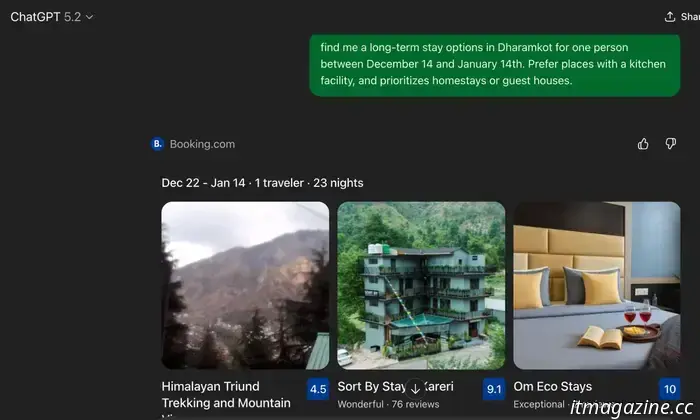

If you have used Gemini and then asked Siri to do the same things (and watched it fall short), you already know the difference. The new partnership between Google and Apple aims to close that gap. More importantly, it gives Apple the tools to build its own distinct AI experiences.

In short, Gemini models will form the foundation for an improved Siri and Apple Intelligence. Exactly how remains unclear, since Apple is unlikely to simply replicate what is already available. You probably won’t see the Gemini brand featured prominently in these next-generation AI features on your iPhone.

Apple is borrowing the intelligence; the overall design and interaction will remain distinctly Apple.

Still, comparing what Gemini can already do on Android devices with what Siri cannot gives a sense of the upgrades likely headed to your iPhone, iPad, and Mac. And Apple isn’t just using Gemini’s underlying technology for Siri and Apple Intelligence; it is integrating it much more deeply.

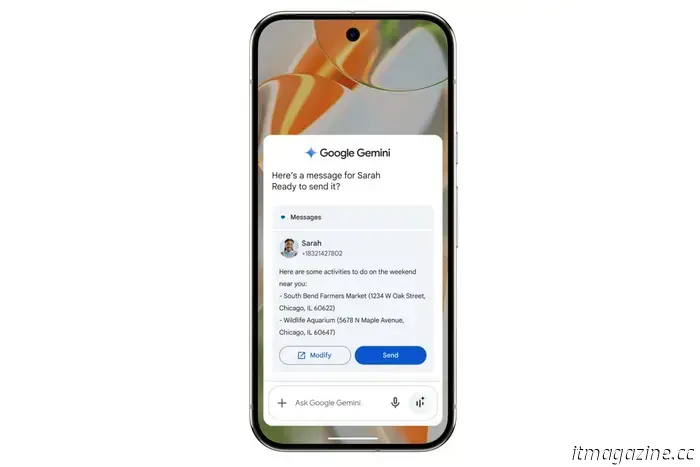

Apple plans to base its next generation of Apple Foundation Models on Google’s Gemini models and cloud technology. Think of these models as the building blocks behind Apple Intelligence features such as summarization, writing tools, image generation, and cross-app actions.

The first of these models, unveiled in 2024, can run either locally on a device (without an internet connection) or on Apple’s cloud servers. A year later, Apple released upgraded versions that were faster, better at handling media, stronger at language understanding, and supported more languages.

A major draw was that the Foundation Models framework would give developers access to these on-device AI capabilities, improving the experience inside their apps. Imagine opening Spotify and, instead of building a playlist by hand, asking Siri to “create a playlist with my most listened-to songs this month.”

That functionality is not yet available on iPhones.

Another limitation is Siri’s fundamental capability. When you pose a question that exceeds basic queries, it tends
