Nvidia DLSS 5 could represent the future of graphics, but I still wish for a large "Off" switch.

For years, achieving photo-realism was considered the ultimate aim for next-generation games. Ray tracing represented a significant advancement, followed by the emergence of super-resolution and super-sampling improvements. However, when Nvidia unveiled its latest breakthrough in video game graphics, the fifth-generation Deep Learning Super Sampling (DLSS 5), it sparked considerable debate. Notably, DLSS 5 isn’t merely a refined update to its predecessors; it represents a profound shift.

Nvidia presents it as a real-time neural rendering model capable of enhancing the photorealistic lighting and material detail in game frames, marking a significant departure from straightforward upscaling. This is a bold technical move, accompanied by aesthetic risks. It sounds impressive and genuinely has merits: if DLSS 5 functions as intended, it may enable richer visuals without requiring developers to manually implement every lighting effect in the traditional manner.

Unveiled at GTC, DLSS 5 is scheduled for release in the fall of 2026, touted as Nvidia’s biggest graphics advancement since real-time ray tracing. Yet the initial response wasn’t one of admiration; instead, it was filled with memes about “AI faces,” “AI slop,” and “yassified” characters. While Nvidia maintains that the critics are mistaken, the backlash does raise the question: do we truly need this technology?

What exactly does DLSS 5 offer, and is it genuinely beneficial?

Nvidia asserts that DLSS 5 utilizes each frame rendered by the game, alongside motion data, to produce enhanced photorealistic lighting and materials in real-time. Ideally, it should improve the depiction of elements like skin, hair, and fabric. The company positions it as part of a larger vision for neural rendering rather than a mere novelty. For photorealistic games aiming for increased realism in lighting, this is an enticing proposition.

This isn’t meant to act as a simple one-click beauty filter. Developers are expected to maintain full control over the intensity, color grading, and masking effects. Moreover, DLSS 5 integrates through Nvidia Streamline, granting studios the ability to determine precisely where the effect is applied and where it isn’t.
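To make the idea of intensity and masking controls concrete, here is a minimal sketch of how such controls could combine an original frame with an enhanced one. It is an illustration only: the function names, the frame layout, and the blending formula are assumptions made for this example and are not taken from Nvidia's Streamline SDK or any DLSS API.

```python
# Hypothetical sketch: none of these names come from Nvidia's SDK.
# Frames are lists of rows; each pixel is an (r, g, b) tuple of floats in [0, 1].


def blend_pixel(original, enhanced, intensity, mask):
    """Mix one RGB pixel. Both intensity and mask are expected in [0, 1]."""
    weight = max(0.0, min(1.0, intensity * mask))
    return tuple(o + weight * (e - o) for o, e in zip(original, enhanced))


def apply_effect(original_frame, enhanced_frame, mask, intensity):
    """Blend two frames under a per-pixel mask and a global intensity."""
    return [
        [blend_pixel(o, e, intensity, m) for o, e, m in zip(o_row, e_row, m_row)]
        for o_row, e_row, m_row in zip(original_frame, enhanced_frame, mask)
    ]


original = [[(0.2, 0.2, 0.2), (0.5, 0.4, 0.3)]]
enhanced = [[(0.6, 0.6, 0.6), (0.9, 0.8, 0.7)]]
mask = [[1.0, 0.0]]  # effect allowed on the first pixel, blocked on the second

result = apply_effect(original, enhanced, mask, intensity=0.5)
untouched = apply_effect(original, enhanced, mask, intensity=0.0)
```

In this sketch, a mask value of 0 leaves a pixel exactly as the game rendered it, which is the kind of per-region opt-out a studio might want for character faces.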

There’s a valid argument in favor of DLSS 5. Traditional rendering can be costly, particularly when developers seek cinematic lighting without compromising frame rates. A tool that helps bridge that gap could genuinely benefit players, especially in high-budget, realistic single-player games.

If it’s so sophisticated, why is it frequently referred to as an AI filter?

During the GTC event, Nvidia chief Jensen Huang said that gamers are misunderstanding DLSS 5. But if that’s true, why is the criticism so widespread? It’s because the discontent isn’t merely a reaction against “AI” technology.

A significant reason the “AI filter” label has stuck is that some of the public explanations characterize DLSS 5 more as intelligent image reinterpretation than as a technology fully cognizant of a game’s entire 3D environment. Nvidia’s Jacob Freeman stated that the system uses the rendered frame and motion vectors as inputs while keeping the underlying geometry unchanged.

This is precisely why critics are concerned. If DLSS 5 primarily operates from a 2D frame along with motion data, it is essentially making guesses. Such guesswork can result in the uncanny, overly processed appearance observed in early demos.
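For readers unfamiliar with motion data, the sketch below shows a generic reprojection step of the kind temporal rendering techniques commonly use: each pixel's motion vector says where that surface was in the previous frame. This is not Nvidia's implementation, and nothing in the public description confirms DLSS 5 works this way; the `reproject` function and the toy one-row "frame" are assumptions chosen purely for illustration.

```python
# Hypothetical sketch of motion-vector reprojection; not DLSS 5's actual method.


def reproject(previous_frame, motion_vectors, fill=None):
    """Warp the previous frame into the current frame.

    motion_vectors[y][x] is (dx, dy): how many pixels the surface now
    visible at (x, y) has moved since the previous frame.
    Pixels with no valid source in the previous frame are set to `fill`.
    """
    height = len(previous_frame)
    width = len(previous_frame[0])
    out = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = motion_vectors[y][x]
            src_x, src_y = x - dx, y - dy
            if 0 <= src_x < width and 0 <= src_y < height:
                out[y][x] = previous_frame[src_y][src_x]
    return out


previous = [["a", "b", "c", "d"]]
vectors = [[(1, 0), (1, 0), (1, 0), (1, 0)]]  # everything moved one pixel right

warped = reproject(previous, vectors)
# warped == [[None, "a", "b", "c"]]
```

The `None` at the left edge is a pixel with no history at all. Any system working only from 2D frames and motion data has to infer what belongs there, which is the kind of guesswork critics are pointing to.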

When a GPU feature begins altering facial tones, lighting moods, or the overall atmosphere of a scene, observers shift from seeing it as a harmless enhancement to viewing it as an aesthetic intrusion.

Is artistic intention at risk?

This is the main concern surrounding DLSS 5. Nvidia CEO Jensen Huang has vigorously defended the technology, emphasizing that developers retain full control over intensity, grading, and masking. While this sounds reassuring in theory, my observations suggest otherwise.

In the demo, DLSS 5 significantly alters color grading and contrast, raising doubts about whether developers genuinely approved those modifications.

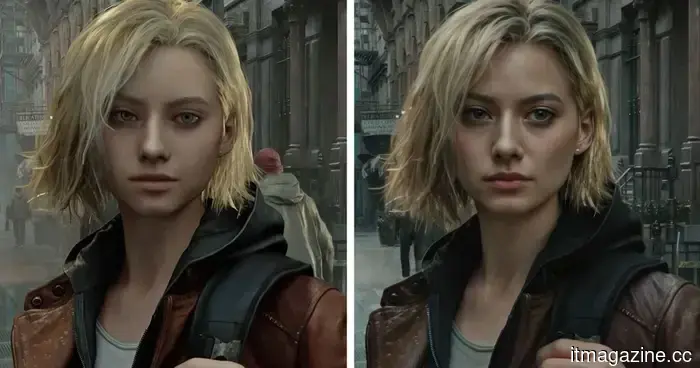

Resident Evil Requiem provides a stark example: the character Grace appears to have subtle makeup applied to her eyes and lips. Other examples, such as Starfield, show the same somewhat generic look, adding “detail” without necessarily enhancing immersion.

Based on various videos and comments online, both gamers and some developers were displeased by the beauty-filter effect on character faces. Although Nvidia claims developers will have complete control, some were reportedly taken by surprise by the announcement, including those working at major studios like Capcom. A developer from Ubisoft noted, “We found out at the same time as the public.”

When the primary selling point shifts to “look how much the AI changed this,” it’s understandable for people to question whether the original artistic vision is being preserved or altered.

Are gamers overreacting or identifying a genuine issue early on?

The community’s response has been chaotic, but it isn't unfounded. Reddit threads are filled with remarks labeling DLSS 5 as “AI slop,” featuring legitimate concerns that the

